Researchers at Tilde Research have released Aurora, a new optimizer for training neural networks that addresses a structural defect in the widely used Muon optimizer. The defect quietly kills a large fraction of MLP neurons during training and keeps them permanently dead. Aurora comes with a 1.1B-parameter pretraining experiment, a new state-of-the-art result on the modded-nanoGPT speedrun benchmark, and open-source code.

What is Muon?

To understand Aurora, it helps to first understand Muon. The Muon optimizer caught the attention of the ML community after outperforming AdamW in wall-clock time to convergence in the nanoGPT speedrun competition, a community benchmark that measures how fast you can train a GPT-style model to a target validation loss. Since then, Muon has been adopted for training large-scale models by many research groups.

The main algorithmic step of Muon is computing the polar factor of the gradient matrix. For a gradient matrix G with thin singular value decomposition (SVD) G = UΣVᵀ, Muon computes polar(G) = UVᵀ, which is the closest semi-orthogonal matrix to G in the Frobenius norm. This orthogonalized gradient is then used to update the weights: W ← W − η UVᵀ for learning rate η. It is the use of matmul-only iterative algorithms to compute the polar factor that makes Muon practical at scale.
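As a rough illustration of this step, the sketch below computes the polar factor two ways: exactly through the thin SVD, and approximately with the textbook cubic Newton-Schulz iteration, which uses only matrix multiplications. The function names, the toy matrix sizes, and the choice of the cubic iteration are assumptions made for illustration; Muon implementations typically use a tuned polynomial iteration rather than this one.

```python
import numpy as np

def polar_svd(G):
    """Exact polar factor U @ Vt from the thin SVD G = U diag(s) Vt."""
    U, _, Vt = np.linalg.svd(G, full_matrices=False)
    return U @ Vt

def polar_newton_schulz(G, steps=30):
    """Approximate polar(G) with the textbook cubic Newton-Schulz iteration.

    Only matrix multiplications are used. Assumes G has full column rank.
    """
    X = G / np.linalg.norm(G)              # Frobenius scaling: singular values in (0, 1]
    for _ in range(steps):
        X = 1.5 * X - 0.5 * X @ (X.T @ X)  # pushes every singular value toward 1
    return X

rng = np.random.default_rng(0)
G = rng.standard_normal((64, 16))          # toy "gradient" matrix (hypothetical sizes)
W = rng.standard_normal((64, 16))          # toy weight matrix
eta = 0.02                                 # learning rate

O = polar_svd(G)
W_new = W - eta * O                        # W <- W - eta * U V^T
err = np.linalg.norm(polar_newton_schulz(G) - O)
print("Newton-Schulz vs SVD difference:", err)
```

The SVD route is the reference definition; the iterative route is the kind of matmul-only computation that makes the update cheap on accelerators.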

The NorMuon puzzle: row normalization helps, but why?

Before Aurora, NorMuon led the nanoGPT speedrun. It introduced a row normalization step, similar to Adam's per-parameter scaling, that normalizes the polar factor by the inverse RMS norm of each row. Although this often pulls the update away from a fully orthogonalized gradient, NorMuon still achieves impressive results. The Tilde team set out to understand exactly which gap in Muon's formulation NorMuon was addressing.

The core problem: row-norm anisotropy and neuron death in tall matrices

The research team discovered that the Muon optimizer inadvertently “kills” a significant fraction of the neurons in tall weight matrices, such as those in SwiGLU-based MLP layers. For these matrix shapes, orthogonalization makes the columns of the update orthonormal but does not force the rows to carry equal magnitude, so the optimizer ends up giving large updates to some neurons while effectively ignoring others. The result is a “death spiral” in which weakly updated neurons receive less and less signal over time and eventually become permanently inactive.
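The uneven row updates can be reproduced on a toy example. The sketch below is not the paper's experiment: it assumes a random tall gradient in which a quarter of the rows (neurons) have been scaled down by a hypothetical factor, then inspects the row norms of the polar factor.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 256, 64                        # tall matrix: m rows (neurons), n columns
G = rng.standard_normal((m, n))
G[: m // 4] *= 1e-3                   # hypothetical: a quarter of the neurons get tiny gradients

U, _, Vt = np.linalg.svd(G, full_matrices=False)
O = U @ Vt                            # polar factor, the Muon-style update direction

row_norms = np.linalg.norm(O, axis=1)
weak_mean = row_norms[: m // 4].mean()
other_mean = row_norms[m // 4 :].mean()

print("columns orthonormal:", np.allclose(O.T @ O, np.eye(n)))
print("sum of squared row norms:", np.sum(row_norms**2))  # always n for a tall polar factor
print("mean update norm, weak rows: ", weak_mean)
print("mean update norm, other rows:", other_mean)
```

Even though the columns are exactly orthonormal, the rows with small gradients receive far smaller updates than the rest, which is the imbalance that the death spiral feeds on.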

The study found that by training step 500, more than one in four neurons are effectively dead. This is not just a local issue: the inactivity of these neurons starves subsequent layers of signal, spreading inefficiency through the model. Aurora solves this problem with a new mathematical formulation that forces uniform updates across all neurons without sacrificing the benefits of orthogonality.

Before arriving at Aurora, the research presents an intermediate fix called U-NorMuon. The key observation is that NorMuon normalizes each row to unit norm (norm = 1), but this is the wrong target for a tall matrix. For a tall m × n semi-orthogonal matrix (m rows, n columns, m > n), the squared row norms sum to n, so the mathematically correct uniform row norm is √(n/m), not 1. U-NorMuon corrects this by normalizing the rows of tall matrices to norm √(n/m) instead of 1.
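The difference between the two targets shows up in the total size of the update. The sketch below is a simplified illustration, assuming rows are rescaled directly to the target norm; the helper `rescale_rows` is hypothetical, and the actual optimizers may apply the normalization through running statistics instead.

```python
import numpy as np

def rescale_rows(O, target):
    """Rescale every row of O to Euclidean norm `target` (hypothetical helper)."""
    norms = np.linalg.norm(O, axis=1, keepdims=True)
    return O * (target / np.maximum(norms, 1e-12))

rng = np.random.default_rng(0)
m, n = 256, 64
G = rng.standard_normal((m, n))
U, _, Vt = np.linalg.svd(G, full_matrices=False)
O = U @ Vt                                      # tall polar factor

unit_rows = rescale_rows(O, 1.0)                # unit-norm target
scaled_rows = rescale_rows(O, np.sqrt(n / m))   # sqrt(n/m) target

fro_polar = np.linalg.norm(O) ** 2              # squared Frobenius norm of the polar factor
fro_unit = np.linalg.norm(unit_rows) ** 2
fro_scaled = np.linalg.norm(scaled_rows) ** 2
print(fro_polar, fro_unit, fro_scaled)
```

With unit-norm rows the squared Frobenius norm grows from n to m, while the √(n/m) target keeps it at n, matching the polar factor.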

In experiments at the 340M scale, U-NorMuon outperforms both Muon and standard NorMuon and completely eliminates neuron death: leverage scores become nearly isotropic throughout training. More importantly, U-NorMuon propagates this benefit to layers it does not directly touch. Keeping the up/gate rows alive ensures isotropic gradient flow into the down projection, stabilizing its column leverage without any direct intervention.

However, U-NorMuon still has a problem: it aggressively overrides the polar factor with uniform row norms, sacrificing the accuracy of the polar factor. That is theoretically undesirable and empirically costly in the Muon framework (research shows that Muon achieves monotonically lower loss with more precise orthogonalization). This is the motivation for Aurora.
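The loss of orthogonality is easy to measure on a toy case. The sketch below assumes a random tall gradient with hypothetically uneven row scales, rescales the rows of its polar factor to norm √(n/m), and checks how far the result is from having orthonormal columns. It illustrates the trade-off only and is not the paper's measurement.

```python
import numpy as np

def orth_error(X):
    """Frobenius distance between X^T X and the identity."""
    return np.linalg.norm(X.T @ X - np.eye(X.shape[1]))

rng = np.random.default_rng(0)
m, n = 256, 64
G = rng.standard_normal((m, n))
G[: m // 4] *= 0.1                              # hypothetical uneven gradient scales

U, _, Vt = np.linalg.svd(G, full_matrices=False)
O = U @ Vt                                      # exact polar factor
norms = np.linalg.norm(O, axis=1, keepdims=True)
O_rows = O * (np.sqrt(n / m) / norms)           # force uniform row norms sqrt(n/m)

s = np.linalg.svd(O_rows, compute_uv=False)     # singular values after row rescaling
print("orthogonality error, polar factor:", orth_error(O))
print("orthogonality error, row-rescaled:", orth_error(O_rows))
print("singular value range after rescaling:", s.min(), s.max())
```

After rescaling, the singular values spread out on both sides of 1, so the update is no longer semi-orthogonal.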

Aurora: steepest descent under two joint constraints

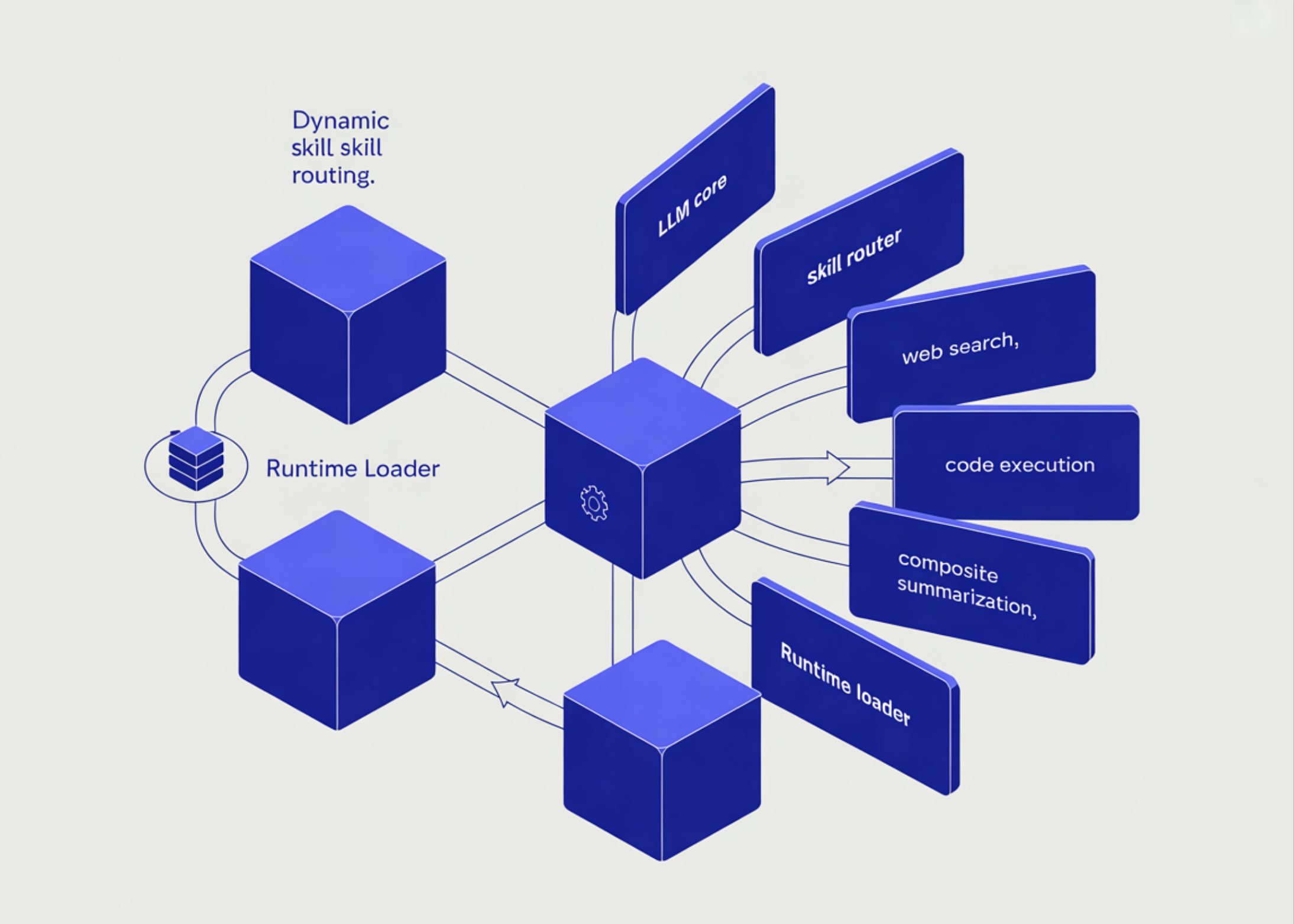

Aurora reframes the problem of choosing an update from the ground up. Instead of orthogonalizing and then correcting with row normalization, Aurora asks: what is the optimal update under the joint constraints of left semi-orthogonality and uniform row norms?

Formally, for tall matrices, Aurora solves a steepest-descent problem under these two constraints. The paper shows that the two constraints together force all singular values of the update U to be exactly equal to 1. This means that the joint constraint still produces a valid left semi-orthogonal update, not a compromised one. This is the main idea that separates Aurora from NorMuon and U-NorMuon: it achieves row normalization and orthogonality simultaneously rather than trading one for the other.
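One way to see why the singular values are pinned to 1 is a short counting argument. It rests on assumptions not stated in the text above: that the update U is m × n with m ≥ n, that semi-orthogonality is imposed as a spectral-norm bound, and that every row has norm √(n/m). The paper's exact formulation may differ.

```latex
% Assumptions (not from the source): U \in \mathbb{R}^{m \times n}, m \ge n,
% \|U\|_2 \le 1, and every row U_{i,:} has norm \sqrt{n/m}.
\begin{align*}
\|U\|_F^2 &= \sum_{i=1}^{m} \|U_{i,:}\|_2^2 = m \cdot \frac{n}{m} = n, \\
\|U\|_F^2 &= \sum_{j=1}^{n} \sigma_j^2 \quad \text{with } 0 \le \sigma_j \le 1 \\
&\Longrightarrow\; \sigma_1 = \dots = \sigma_n = 1
\;\Longrightarrow\; U^\top U = I_n .
\end{align*}
```

The row constraint fixes the total squared norm at n, and n singular values that are each at most 1 can only reach that total if every one of them equals 1.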

The research also provides two algorithmic implementations of the Aurora solution. Riemannian Aurora uses a gradient projection method restricted to the Stiefel/equal-row-leverage manifold. Vanilla Aurora is simpler and more practical to implement. Both are open source. For non-tall (wide and square) matrices, standard orthogonality already implies uniform row norms, so Aurora leaves the update for those parameters unchanged.
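The special status of tall matrices can be checked numerically. The sketch below, with hypothetical sizes and a helper named `polar` introduced only for illustration, compares the row norms of the polar factor for a wide and a tall random matrix.

```python
import numpy as np

def polar(G):
    """Polar factor U @ Vt from the thin SVD of G."""
    U, _, Vt = np.linalg.svd(G, full_matrices=False)
    return U @ Vt

rng = np.random.default_rng(0)
wide = polar(rng.standard_normal((64, 256)))   # fewer rows than columns
tall = polar(rng.standard_normal((256, 64)))   # more rows than columns

wide_rows = np.linalg.norm(wide, axis=1)
tall_rows = np.linalg.norm(tall, axis=1)
print("wide: row norms all equal to 1:", np.allclose(wide_rows, 1.0))
print("tall: row norm range:", tall_rows.min(), tall_rows.max())
```

In the wide case the rows of the polar factor are orthonormal, so every row already has norm 1. In the tall case only the columns are orthonormal, and the row norms are free to vary.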

Results

Aurora was used to train a 1.1B model that achieves 100x data efficiency on open-source internet data and outperforms larger models on public evaluations such as HellaSwag. At the 1B scale, Aurora shows significant gains over both Muon and NorMuon. On the modded-nanoGPT optimization speedrun, Aurora surpasses the previous state of the art (which was NorMuon). Untuned Aurora adds only 6% compute overhead compared with standard Muon, and it is designed as a drop-in replacement.

The research team also found that Aurora's gains grow with MLP width, suggesting that it is particularly effective for networks with large MLP expansion factors. This is consistent with the neuron death hypothesis, since wider MLPs have taller matrices and more opportunity for leverage anisotropy to compound.

Key takeaways

- Muon's polar factor update inherits row-norm anisotropy on tall matrices, causing more than 25% of MLP neurons to die permanently as early as step 500 of training.

- Aurora solves this problem by finding the optimal update under the joint constraints of left semi-orthogonality and uniform row norms, achieving both simultaneously rather than trading one for the other.

- At the 1.1B scale, Aurora achieves 100x data efficiency on open-source internet data, outperforms larger models on HellaSwag, and sets a new state of the art on the modded-nanoGPT speedrun.

- Aurora is a drop-in replacement for Muon with only 6% compute overhead, and its gains scale with MLP width.

Check out the paper and GitHub repo.