A team of researchers from China has released AntAngelMed, a large, open-source medical language model that the team describes as the largest and most capable of its kind currently available.

What is AntAngelMed?

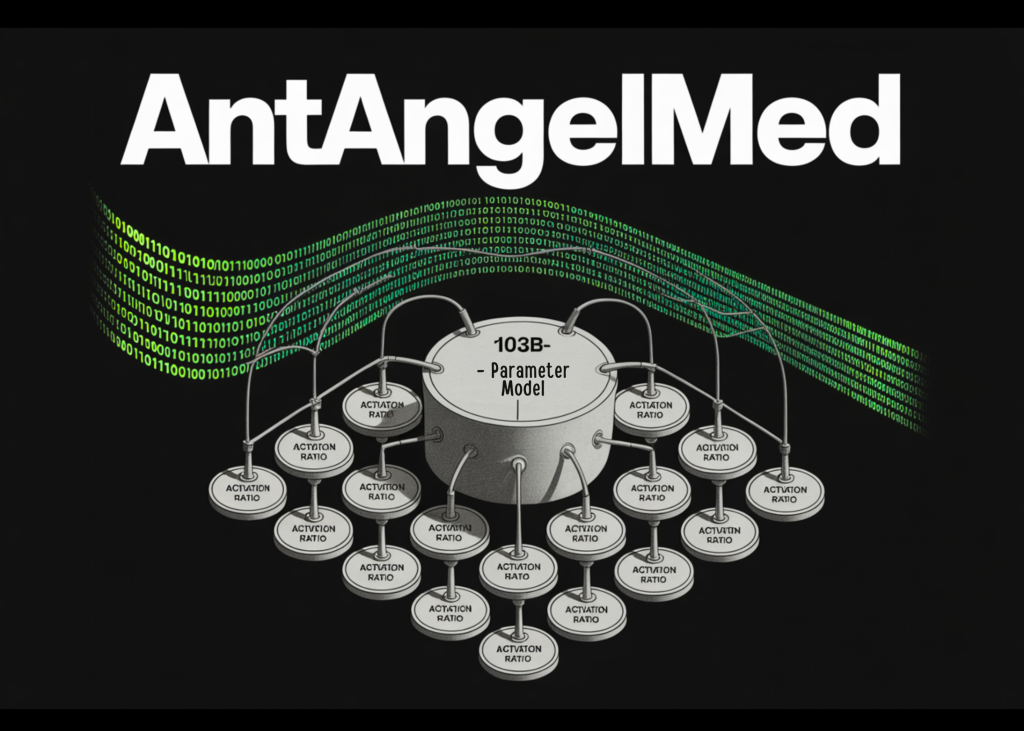

AntAngelMed is a language model for the medical domain with 103 billion total parameters, but it does not activate all of them during inference. Instead, it uses a mixture-of-experts (MoE) architecture with an expert activation ratio of 1/32, so only 6.1 billion parameters are active for each token it processes.

It helps to understand how MoE architectures work. In a standard dense model, every parameter participates in processing each token. In an MoE model, the network is divided into many “expert” subnetworks, and a routing mechanism selects only a small subset of them to handle each token. This makes it possible to have a very large total parameter count, which is usually associated with greater knowledge capacity, while keeping the actual compute cost of inference proportional to the much smaller number of active parameters.
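
To make the routing idea concrete, here is a minimal pure-Python sketch of top-k MoE routing for a single token. It illustrates the general technique under stated assumptions and is not AntAngelMed's implementation. The router is a plain dot product followed by a softmax over the selected experts, the experts are toy scaling functions, and the sizes (64 experts, 2 active, giving a 1/32 expert ratio) are hypothetical.

```python
import math
import random
from typing import Callable, List, Tuple

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: Vector,
    router_weights: List[Vector],  # hypothetical: one scoring vector per expert
    experts: List[Callable[[Vector], Vector]],
    top_k: int,
) -> Tuple[Vector, List[int]]:
    # Router: score every expert with a dot product against the token.
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # Keep only the top-k experts; the others are never evaluated for this token.
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])
    # Output = gate-weighted sum of the selected experts' outputs.
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        y = experts[i](token)
        out = [o + gate * v for o, v in zip(out, y)]
    return out, chosen


# Toy setup (hypothetical sizes): 2 of 64 experts active = a 1/32 expert ratio.
random.seed(0)
dim, num_experts, top_k = 4, 64, 2
router = [[random.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_experts)]
calls = [0] * num_experts


def make_expert(i: int) -> Callable[[Vector], Vector]:
    def expert(x: Vector) -> Vector:
        calls[i] += 1  # count how often each expert actually runs
        return [(i + 1) * v for v in x]
    return expert


experts = [make_expert(i) for i in range(num_experts)]
out, chosen = moe_forward([1.0, 0.5, -0.5, 2.0], router, experts, top_k)
print("experts used:", chosen, "expert calls:", sum(calls))
```

Only the chosen experts run, which is why compute tracks the active rather than the total parameter count. Production MoE layers batch tokens per expert and often add shared experts. Those details are omitted here.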

AntAngelMed inherits this design from Ling-flash-2.0, a base model developed by inclusionAI and guided by what the team calls Ling Scaling Laws. Specific architectural refinements include finer-grained experts, a tuned shared-expert ratio, a balanced attention ratio, aux-loss-free sigmoid routing, an MTP (Multi-Token Prediction) layer, QK-Norm, and Partial-RoPE (rotary position embedding applied to only part of each attention head's dimensions rather than all of them). According to the research team, these design choices together allow small-activation MoE models to deliver up to 7× the efficiency of dense architectures of similar size. As a result, with only 6.1 billion activated parameters, AntAngelMed can match the performance of a dense model of roughly 40 billion parameters. Separately, as output length grows during inference, the relative speed advantage over similarly sized dense models can also reach 7× or more.
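
Of the components listed above, Partial-RoPE is the easiest to show in isolation. The sketch below assumes the common formulation in which rotary embedding is applied to a leading fraction of each head's dimensions, with adjacent dimensions rotated as pairs. The 50% fraction, the pairing convention, and the base of 10000 are illustrative assumptions, not values taken from Ling-flash-2.0.

```python
import math
from typing import List


def partial_rope(head: List[float], position: int,
                 rotary_fraction: float = 0.5, base: float = 10000.0) -> List[float]:
    """Apply rotary position embedding to only the first `rotary_fraction`
    of a single attention head's dimensions; the rest pass through unchanged."""
    rot_dim = int(len(head) * rotary_fraction)
    rot_dim -= rot_dim % 2  # RoPE rotates dimensions in pairs
    out = list(head)
    for i in range(0, rot_dim, 2):
        # Standard RoPE angle for the pair (i, i+1): position * base^(-i / rot_dim).
        theta = position * base ** (-i / rot_dim)
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = head[i], head[i + 1]
        out[i] = x0 * c - x1 * s
        out[i + 1] = x0 * s + x1 * c
    return out


def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.8, 0.5, 1.0, -0.4, 0.2, 0.9]
k = [1.1, 0.4, -0.7, 0.6, 0.3, 0.8, -0.5, 0.1]
# Same relative offset (2) at different absolute positions gives the same score.
s1 = dot(partial_rope(q, 5), partial_rope(k, 3))
s2 = dot(partial_rope(q, 9), partial_rope(k, 7))
print(round(s1, 6), round(s2, 6))
```

Rotation preserves dot products up to the relative offset, so `s1` and `s2` agree. The unrotated dimensions contribute a position-independent term.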

Training pipeline

AntAngelMed uses a three-stage training process designed to build a general understanding of language first and then layer deep adaptation to the medical domain on top of it.

The first stage is continual pre-training on broad medical corpora, including encyclopedias, web text, and academic publications. This phase starts from the Ling-flash-2.0 checkpoint, giving the model a strong foundation in general reasoning before medical specialization begins.

The second stage is supervised fine-tuning (SFT) on a multi-source instruction dataset. The dataset combines general reasoning tasks (mathematics, programming, and logic), which preserve chain-of-thought capabilities, with medical scenarios such as doctor-patient Q&A, diagnostic reasoning, and safety and ethics cases.

The third stage is reinforcement learning with the GRPO (Group Relative Policy Optimization) algorithm, combined with task-specific reward models. GRPO, originally introduced in the DeepSeekMath paper, is a variant of PPO. It estimates the baseline from the scores of a group of sampled responses rather than from a separate critic model, which makes it computationally lighter. Here, the reward signals are designed to steer the model toward empathy, structured clinical responses, safety boundaries, and evidence-based reasoning, with the overall goal of reducing hallucinations on medical questions.
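
The core of GRPO fits in a few lines. This minimal sketch assumes scalar rewards from a reward model. It computes group-relative advantages, where each sampled answer's reward is normalized by its group's mean and standard deviation in place of a critic's value estimate. It also computes the PPO-style clipped term that GRPO retains. The reward values are hypothetical, and the KL penalty toward a reference policy that GRPO also uses is left out.

```python
import statistics
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages: normalize each sampled response's reward against
    the mean and standard deviation of its own group (no critic network)."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; implementations differ here
    return [(r - mean) / (std + eps) for r in rewards]


def clipped_surrogate(ratio: float, advantage: float, clip_eps: float = 0.2) -> float:
    """PPO-style clipped objective term kept by GRPO; `ratio` is
    pi_new(token) / pi_old(token). The KL penalty term is omitted here."""
    clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    return min(ratio * advantage, clipped * advantage)


# Hypothetical reward-model scores for 4 answers sampled for one medical question.
rewards = [1.0, 0.0, 0.5, 0.5]
adv = group_relative_advantages(rewards)
print([round(a, 3) for a in adv])
print(clipped_surrogate(1.5, adv[0]))
```

Answers that score above their group's average get positive advantages and are reinforced. Below-average answers are pushed down, and no critic network is needed to decide which is which.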

Inference performance

On H20 GPUs, AntAngelMed exceeds 200 tokens per second, which the research team reports is about 3 times faster than a 36-billion-parameter dense model. Using YaRN extrapolation (a RoPE context-extension method), it supports a context length of 128K tokens. That is long enough to handle full clinical documents, extended patient histories, or multi-turn clinical dialogues.
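
YaRN extends a RoPE model's context by rescaling rotary frequencies unevenly. Dimensions whose wavelength is long relative to the original window are interpolated, while high-frequency dimensions are left alone. The sketch below follows the "NTK-by-parts" rule and attention-temperature factor described in the YaRN paper, using its default ramp bounds (alpha = 1, beta = 32). The head size, RoPE base, and 32K-to-128K window are hypothetical values chosen for illustration, not AntAngelMed's configuration.

```python
import math
from typing import List


def yarn_frequencies(head_dim: int, base: float, original_ctx: int, scale: float,
                     alpha: float = 1.0, beta: float = 32.0) -> List[float]:
    """Per-pair RoPE frequencies after YaRN's 'NTK-by-parts' interpolation."""
    freqs = []
    for d in range(head_dim // 2):
        theta = base ** (-2 * d / head_dim)        # original RoPE frequency
        wavelength = 2 * math.pi / theta
        r = original_ctx / wavelength              # rotations within the original window
        if r < alpha:
            gamma = 0.0                            # long wavelength: interpolate fully
        elif r > beta:
            gamma = 1.0                            # short wavelength: keep as-is
        else:
            gamma = (r - alpha) / (beta - alpha)   # linear ramp in between
        freqs.append((1 - gamma) * theta / scale + gamma * theta)
    return freqs


def yarn_attention_factor(scale: float) -> float:
    """YaRN's temperature factor sqrt(1/t) = 0.1 * ln(s) + 1."""
    return 0.1 * math.log(scale) + 1.0


# Hypothetical values: a 32K-token base window stretched 4x to 128K (131,072) tokens.
freqs = yarn_frequencies(head_dim=128, base=10000.0, original_ctx=32768, scale=4.0)
print(freqs[0], freqs[-1], yarn_attention_factor(4.0))
```

The highest-frequency pair keeps its original frequency of 1.0. The lowest-frequency pair is divided by the full scale factor, so positions beyond the original window map onto angles the model has already seen.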

The research team also released an FP8-quantized version of the model. Combining this quantization with EAGLE3 speculative decoding significantly improves inference throughput at a concurrency of 32 compared with FP8 alone: by 71% on HumanEval, 45% on GSM8K, and 94% on Math-500. These benchmarks measure coding and mathematical reasoning rather than clinical tasks directly. However, they serve as proxies for how robust the throughput gains are across different types of output.
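
Speculative decoding speeds up generation by letting a cheap drafter propose several tokens that the full model then verifies. EAGLE3 drafts with a lightweight head that reuses the target model's features. The minimal sketch below abstracts the drafter as any function and uses simple greedy verification. It therefore illustrates the general draft-then-verify idea rather than EAGLE3's actual drafting scheme. Both toy "models" are hypothetical.

```python
from typing import Callable, List, Sequence

NextToken = Callable[[Sequence[int]], int]  # greedy "model": prefix -> next token id


def speculative_step(prefix: List[int], draft: NextToken, target: NextToken,
                     k: int) -> List[int]:
    """One greedy speculative-decoding step: the draft proposes k tokens, the
    target keeps the longest agreeing prefix and then contributes one token."""
    proposal, ctx = [], list(prefix)
    for _ in range(k):
        t = draft(ctx)
        proposal.append(t)
        ctx.append(t)
    accepted, ctx = [], list(prefix)
    # A real system scores all k positions in one batched target forward pass.
    for t in proposal:
        expected = target(ctx)
        if expected != t:
            accepted.append(expected)  # target's token replaces the first mismatch
            return accepted
        accepted.append(t)
        ctx.append(t)
    accepted.append(target(ctx))  # bonus token when every draft token is accepted
    return accepted


def target(ctx: Sequence[int]) -> int:
    return (ctx[-1] + 1) % 10


def draft(ctx: Sequence[int]) -> int:
    return 0 if ctx[-1] == 3 else (ctx[-1] + 1) % 10  # imperfect toy drafter


seq = [0]
while len(seq) < 12:
    seq += speculative_step(seq, draft, target, k=4)
print(seq)
```

The output is identical to plain greedy decoding with the target model. The speedup comes from accepting several draft tokens per target pass, so the gain depends on how often the drafter's guesses are accepted.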

Benchmark results

On HealthBench, OpenAI's open-source medical evaluation benchmark, which uses multi-turn simulated medical dialogues to measure real-world clinical performance, AntAngelMed ranks first among all open-source models. It also outperforms some of the best proprietary models, with a particularly large margin on the HealthBench-Hard subset.

On MedAIBench, an evaluation system run by the National AI Medical Industry Pilot Facility of China, AntAngelMed ranks first, with particularly strong scores in the Medical Knowledge Q&A and Medical Ethics and Safety categories.

On MedBench, AntAngelMed ranks first overall. MedBench is a benchmark for Chinese medical LLMs covering 36 independently curated datasets and nearly 700,000 samples across five dimensions: medical knowledge question answering, medical language understanding, medical language generation, complex medical reasoning, and safety and ethics.

Key takeaways

- AntAngelMed is an open source 103B parameter medical LLM that activates only 6.1B parameters at inference time using a 1/32 activation ratio MoE architecture inherited from Ling-flash-2.0.

- It uses a three-stage training pipeline: continual pre-training on medical corpora, SFT on a mix of general instruction and medical data, and GRPO-based reinforcement learning targeting safety and diagnostic reasoning.

- On H20 GPUs, the model exceeds 200 tokens/sec, about 3x faster than a dense 36B model, and it supports a 128K context length via YaRN extrapolation.

- AntAngelMed ranks first among open source models in OpenAI’s HealthBench, outperforms many proprietary models, and tops both the MedAIBench and MedBench leaderboards.

- The model is available on Hugging Face, ModelScope, and GitHub. The weights are licensed under Apache 2.0 and the code under MIT, and an FP8-quantized version is also available.
