Large linguistic models are remarkably capable, but frustratingly vague. When a model misbehaves – such as generating responses in the wrong language, repeating itself endlessly, or rejecting safe requests – AI developers have very few tools to diagnose the problem Why This happened at the internal account level. This is the problem that Qwen-Scope is designed to solve.

Qwen Team just released Coin domain,an open source collection of Sparse autoencoders (SAEs) They were trained on the Qwen3 and Qwen3.5 model families. The version includes 14 sets of SAE weights across 7 different models – Five dense models (Qwen3-1.7B, Qwen3-8B, Qwen3.5-2B, Qwen3.5-9B, and Qwen3.5-27B) and two Mixed-of-Experts (MoE) models (Qwen3-30B-A3B and Qwen3.5-35B-A3B).

What is a sparse autoencoder, and why should you care about it?

Think of a sparse autoencoder as a translation layer between raw neural network activations and concepts that a human can understand. When LLM software processes text, it produces high-dimensional hidden states-vectors with thousands of numbers-that are difficult to interpret directly. SAE learns how to parse these activations into a large dictionary of Miscellaneous latent featureswhere each input activates only a small subset of features. Each of these features tends to correspond to a specific, interpretable concept: language, style, and safety behavior.

Concretely, for each base layer and transformer layer, Qwen-Scope trains a separate SAE to reconstruct residual current activations using a sparse set of latent features. The SAE encoder maps each activation to a latent hyperrepresentation, and Top-k activation rule Only keeps the largest your Latent activations for the reconstruction (with k set to 50 or 100 in version). For dense spines, the SAE width scales to 16× the hidden size of the model; For MOE backbones, standard SAEs use a width of 32 KB (16 x expansion), and wider SAEs are also released up to a width of 128 KB (expand x 64) to capture a more fine-grained representation structure.

The result is a dictionary of layer features for each transformer layer across all seven columns. One important technical detail: Qwen3.5-27B is the only backbone on which SAEs are trained guidance alternative; All other six spines are used a base Typical checkpoints

Four ways Qwen-Scope is changing development workflow

1. Guide the time of inference

The most immediate application is directing – Influencing the model output without modifying any model weights. The idea is based on a well-supported hypothesis: high-level behaviors are encoded as directives in the model’s internal representation space. By adding or subtracting the feature direction from the remaining stream at inference time using the formula h' ← h + αdwhere h is the hidden state, d is the direction of the SAE feature, and α With the power of control, engineers can push the model toward or away from certain behaviors.

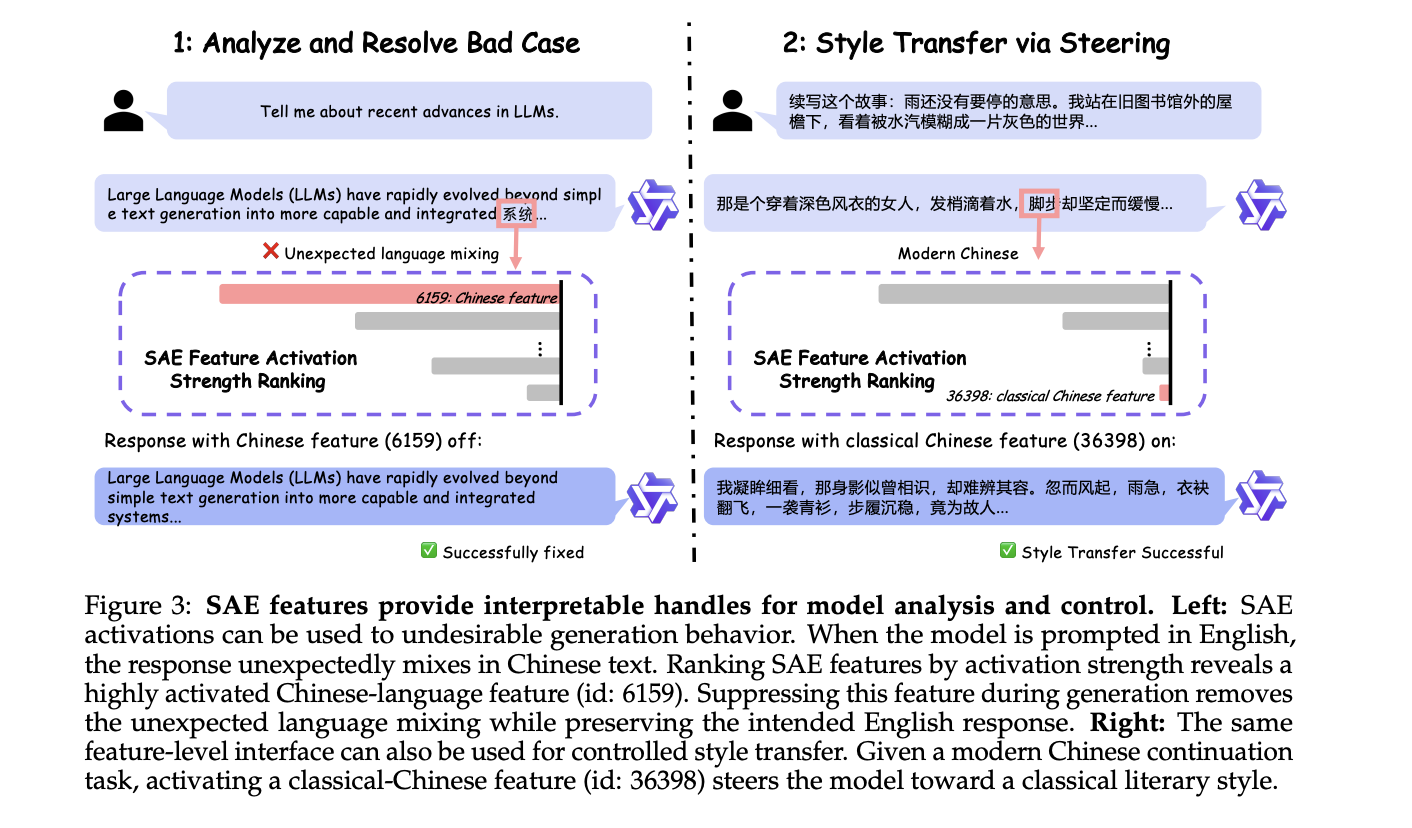

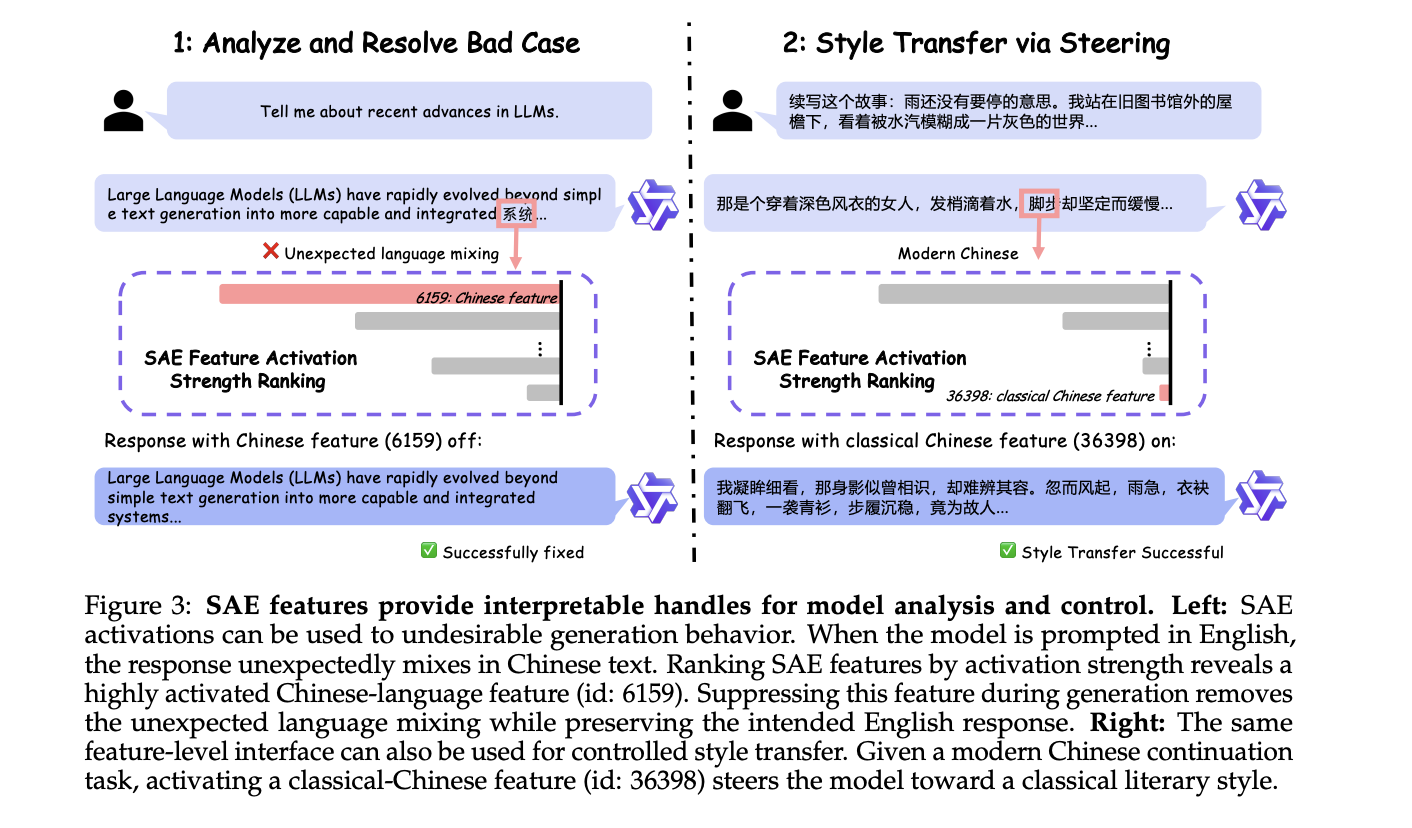

The research team presents two case studies on Qwen3 models. In the first case, the required English form unexpectedly mixes with the Chinese text. Classification of SAE features by strength of activation reveals a highly active Chinese language feature (ID: 6159). Suppressing it during construction completely removes language confusion. In the second case, activating the Classical Chinese feature (ID: 36398) successfully directs the task of continuing the story toward the classical literary style. Both examples require zero-weight updates.

2. Evaluation analysis without running models

Evaluating LLMs usually means running many forward passes through large standard datasets – which is expensive in terms of computation and time. Qwen-Scope suggests a cheaper alternative: using SAE feature activations as a tool Agent-level representation for criteria analysis.

The basic idea is that when a model processes a reference sample, SAE decomposes its activation into a sparse set of active features, each of which can be interpreted as a “micropower.” A criterion whose samples all activate the same features is frequent; There are two criteria that activate feature sets that largely overlap similar. The research team identified A Metric redundancy feature It achieves a Spearman rank correlation ρ ≈ 0.85 with performance-based repeatability across 17 widely used benchmarks-including MMLU, GSM8K, MATH, EvalPlus, and GPQA-Diamond-without a single model evaluation. The analysis also reveals that 63% of GSM8K features are already covered by MATH, indicating that evaluation suites containing MATH can safely delete GSM8K with minimal loss of discriminative information.

The framework also extends to similarity between criteria: the research team measures the overlap between pairs of criteria to determine whether they explore the same capabilities. After controlling for general model ability by dichotomizing the MMLU scores, the partial Pearson correlation between feature overlap and performance-based similarity across the 28 benchmark pairs improved to 75.5%, providing evidence that feature overlap captures benchmark-specific ability similarity rather than just general model quality. This has a direct practical implication: standards with low mutual advantages overlap with distinct capabilities and should be retained; Standards with high overlap are candidates for standardization.

3. Data-driven workflow: toxicity classification and safety data synthesis

SAE features have also proven effective as lightweight classifiers. The research team built a multilingual toxicity classifier across 13 languages using a simple two-stage pipeline: identify SAE features that are triggered more frequently on toxic examples than on clean examples (in a small detection set), and then apply an OR rule to those features in the annotated test data – no additional classifier header, no gradient-based fitting. In English, this achieves an F1 score above 0.90 in both Qwen3-1.7B and Qwen3-8B. The research team also shows that features discovered in English transfer meaningfully to other languages without being rediscovered – performance declines with linguistic distance (strongest for European languages such as Russian and French, weakest for Arabic, Chinese and Amharic), and expansion to Qwen3-8B improves the level and stability of cross-linguistic transfer. Importantly, using only 10% of the original detection data still recovers about 99% classification performance, demonstrating strong data efficiency.

On the synthesis side, the research team A Feature-based safety data collection pipeline: Identify safety-relevant SAE features that are missing from current supervision, create quick-to-completion pairs designed to activate these features, and verify feature space retention. Given a matching budget, feature-based synthesis achieves 99.74% coverage of the target safety feature set, compared to the significantly lower coverage achieved by natural sampling or random safety-related synthesis. Adding synthetic 4K feature-based examples to the real 4K safety examples produces a safety accuracy of 77.75 – approaching the performance of training on the 120,000 safety-only examples.

4. Post-training: Supervising fine-tuning and consolidating learning

Perhaps the most recent contribution from a technical standpoint is the use of SAE features as during signals an exerciseAnd not just a conclusion.

For fine-tuning under supervision, the research team addresses The code switches unexpectedly – Where multilingual LLM students spontaneously produce tokens in an unintended language. Their method is called Supervised and autoencoder-driven fine-tuning (SASFT)first identifies language-specific features by their monolingual score, and then introduces an additional regularization loss that prevents these features from being activated during training on data other than the target language. Across five models including three model families-Gemma-2, Llama-3.1, and Qwen3-and three target languages (Chinese, Russian, and Korean), SASFT achieved a >50% reduction in code-switching in the majority of experimental settings, with complete elimination in certain configurations (e.g., Qwen3-1.7B in Korean), while maintaining performance in six multilingual benchmarks.

To enhance learning, the research team addresses Endless repetition – A low-frequency but destructive failure mode where patterns are repeated in repetitive content. Standard online RL rarely experiences frequent subtractions, so it cannot recognize a strong corrective signal. Qwen-Scope addresses this by using SAE feature routing to generate a single frequency-biased subtraction for each training set, which is then combined as a rare negative sample in the DAPO RL pipeline. The result: repeatability drops sharply and consistently across Qwen3-1.7B, Qwen3-8B, and Qwen3-30B-A3B, while overall benchmark performance remains competitive with vanilla RL.

verify paper weights, and Technical details. Also, feel free to follow us on twitter Don’t forget to join us 130k+ ml SubReddit And subscribe to Our newsletter. I am waiting! Are you on telegram? Now you can join us on Telegram too.

Do you need to partner with us to promote your GitHub Repo page, face hug page, product release, webinar, etc.? Contact us