Good morning {{first_name| AI enthusiasts}}. After OpenAI’s DALL-E and GPT Image 1 led the way early in image generation, Google’s Nano Banana topped the leaderboards for the better part of a year. That run just ended.

OpenAI’s new ChatGPT Images 2.0 is the first image model to plan, search the web, and self-check its output before delivering it, and the results are in – with an upgrade that Sam Altman says is “like going from GPT-3 to GPT-5 all at once.”

- OpenAI breaks new ground with Images 2.0
- Recording employee keystrokes for AI training
- Build a command center with Claude Live Artifacts
- Google pushes its Deep Research agent to the limit
- 4 new AI tools, community workflows, and more

OpenAI

Rundown: OpenAI just rolled out ChatGPT Images 2.0, the company’s upgraded image generation model that has gone viral in tests over the past few weeks, calling it the “smartest image generation model ever.”

- Images 2.0 thinks before it generates, allowing it to plan, search the web for information and references, and check its output for errors before delivery.
- The model took 1st place on Arena AI’s text-to-image leaderboard by a wide margin over Nano Banana 2, leading every generation category.
- Other features include 2K resolution, up to 8 images per output, aspect ratios ranging from 3:1 ultrawide to 1:3 tall, and multilingual text rendering.
- Sam Altman described the release as “like going from GPT-3 to GPT-5 all at once,” with the model now available in ChatGPT, Codex, and the API.

Why it matters: It’s been a long time since OpenAI was at the forefront of the image world, and this release brings it back in a big way – with a model that not only seems to “solve” image and text problems like no other model has, but also changes workflows once again with thinking capabilities that open up entirely new creative avenues.

Together with Algolia

Rundown: The next step in AI isn’t better chat; it’s agents that can query databases, update systems, and make decisions. Does this mean more custom connectors? Not necessarily.

Whether you’re a developer or a data leader, the Algolia Guide helps you understand:

- Challenges in building AI agents
- How MCP servers connect agents to search
- Best practices and real cases

Meta

Image source: Images 2.0 / Rundown

Rundown: Meta is rolling out the Model Capability Initiative (MCI) to record screenshots, keystrokes, and mouse activity on employees’ work laptops in the US, with no way to opt out, to capture real-world data for AI training. The move has sparked backlash inside the company.

- MCI’s capture scope is geared toward developers, recording activity in apps like VSCode, Metamate (Meta’s internal AI assistant), Google Chat, and Gmail.
- Business Insider published the internal memo, in which CTO Andrew Bosworth reportedly responded to concerns by saying there was “no option to opt out.”
- About 8,000 Meta employees are set to be laid off on May 20, with MCI starting to record their workflows a month before that end date.
- The memo presented the move as a way for all Meta employees to help “the company’s models improve just by doing their daily work.”

Why it matters: Robotics labs have spent years recording humans performing physical tasks to teach their systems when and how to grasp, walk, or stack boxes. Meta just brought this playbook to software and computer use, except the demonstrators are its own employees – and the backdrop of layoffs gives it a very dystopian feel.

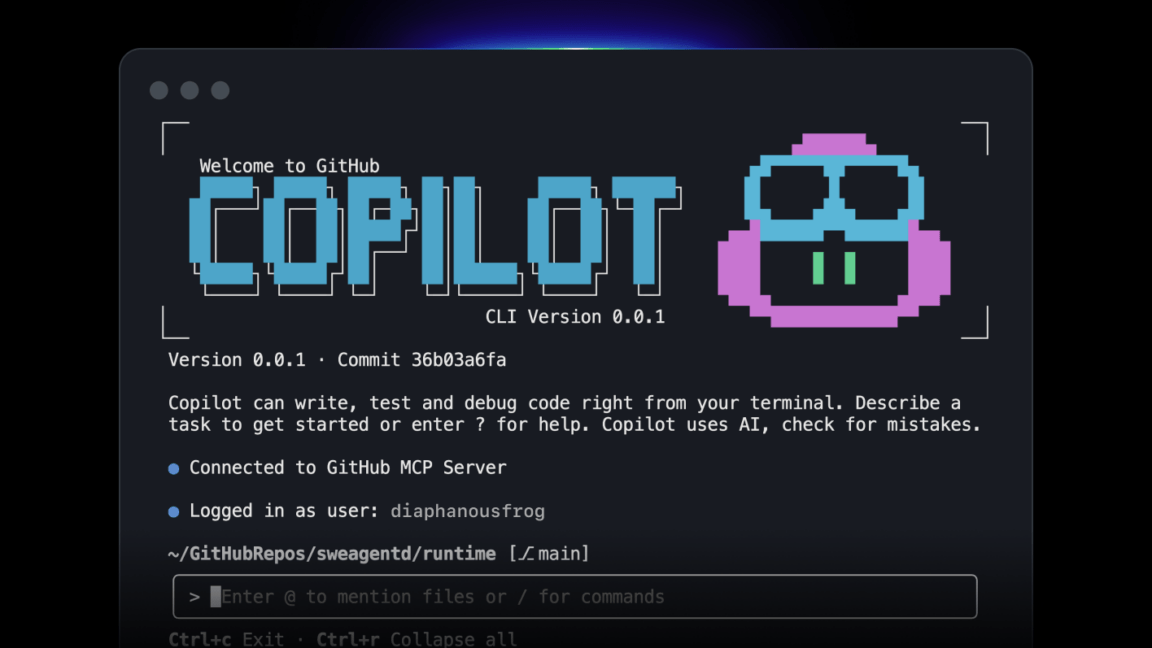

AI training

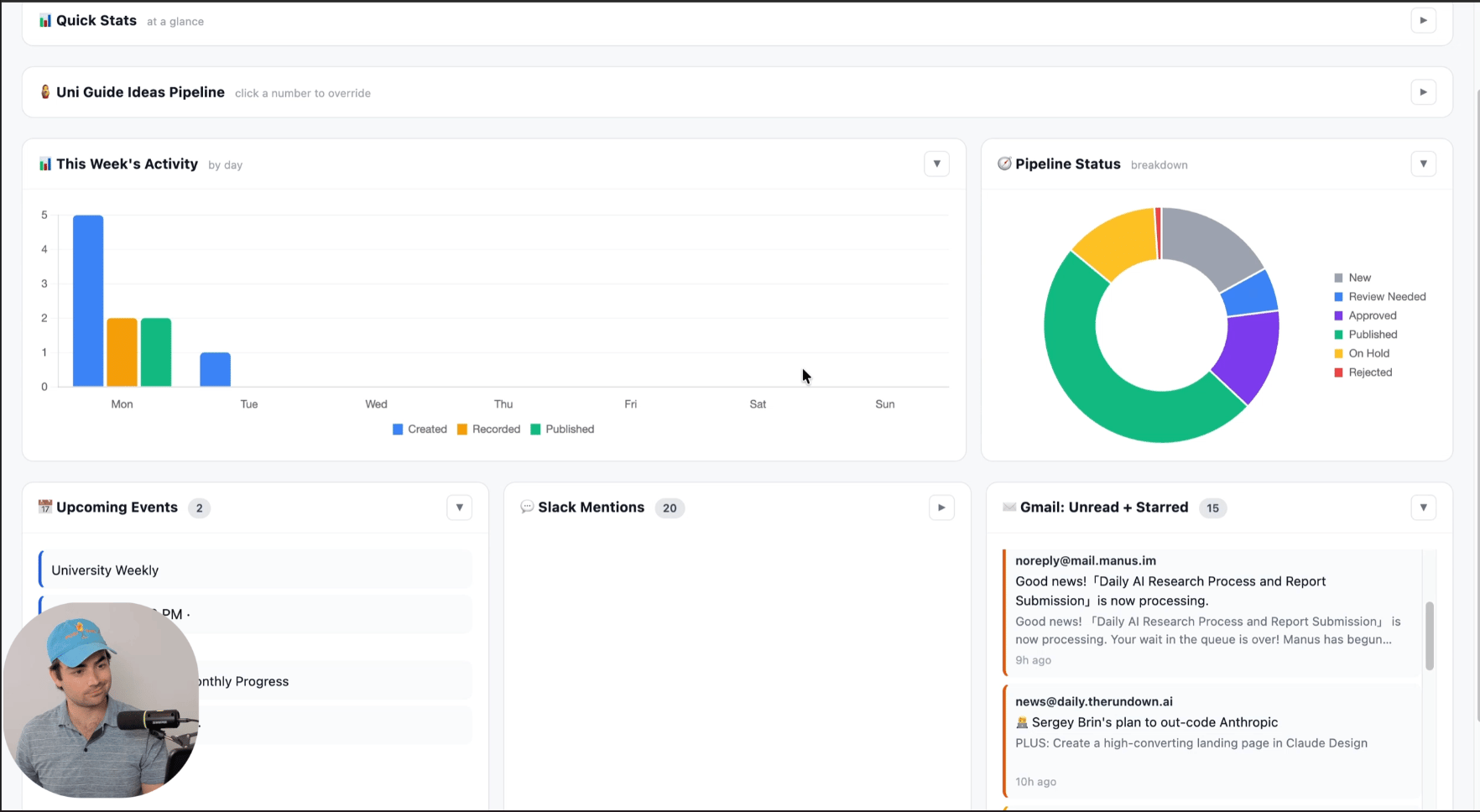

Rundown: In this guide you’ll learn how to create a daily command center in Claude Cowork using Live Artifacts. Instead of opening Slack, email, calendar, tasks, documents, and dashboards one by one, you’ll get one live view in one place.

- Open Claude Cowork and send this message: “Interview me about my connected apps, daily workflow, KPIs, and what’s considered urgent. Then suggest modules for my daily command center before building the tool”
- Answer the questions, then have it create a modular Live Artifact dashboard with Today, This Week, and This Month views, including KPI cards, stats, charts, and app feeds
- Ask it to add priority and triage labels so that updates are categorized (Urgent, Review, FYI, Blocked) and sorted by impact, deadlines, and required decisions
- Prompt it to add skills as custom buttons, like Plan My Day, Draft Responses, or Prepare for Meetings, so you can take action from the same dashboard

Pro Tip: Try additional upgrades like dark mode, animations, settings panel for update frequency, manual override, archive button, and tap to open any update.
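If you want a feel for what the labeling and sorting step might do under the hood, here is a minimal Python sketch. It is not Claude’s implementation: the `Update` fields, the 1 to 5 impact scale, and the order in which the four labels are ranked are all assumptions made for illustration. Only the label names and the three sort criteria (impact, deadlines, required decisions) come from the guide.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Assumed ranking of the guide's four labels; the guide names them but not their order.
LABEL_RANK = {"Urgent": 0, "Blocked": 1, "Review": 2, "FYI": 3}


@dataclass
class Update:
    """One item in the dashboard feed (hypothetical shape)."""
    source: str                # e.g. "Slack", "Email"
    summary: str
    label: str                 # one of the keys in LABEL_RANK
    impact: int                # assumed scale: 1 (low) to 5 (high)
    deadline: Optional[date]   # None when there is no deadline
    needs_decision: bool


def sort_key(update: Update):
    # Label first, then higher impact, then earlier deadline,
    # then items that need a decision ahead of those that do not.
    return (
        LABEL_RANK[update.label],
        -update.impact,
        update.deadline or date.max,
        not update.needs_decision,
    )


def triage(updates):
    """Return the updates in the order the dashboard would list them."""
    return sorted(updates, key=sort_key)


updates = [
    Update("Email", "Newsletter digest", "FYI", 1, None, False),
    Update("Slack", "Prod outage thread", "Urgent", 5, date(2025, 1, 10), True),
    Update("Tasks", "Contract awaiting legal", "Blocked", 4, date(2025, 1, 12), True),
    Update("Docs", "Q1 plan draft", "Review", 3, date(2025, 1, 15), False),
    Update("Calendar", "Budget sign-off", "Urgent", 5, date(2025, 1, 9), True),
]

for item in triage(updates):
    print(f"[{item.label}] {item.summary} ({item.source})")
```

Describing your own ranking rules in the prompt (for example, whether Blocked should outrank Review) gives Claude the same kind of explicit ordering to work from.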

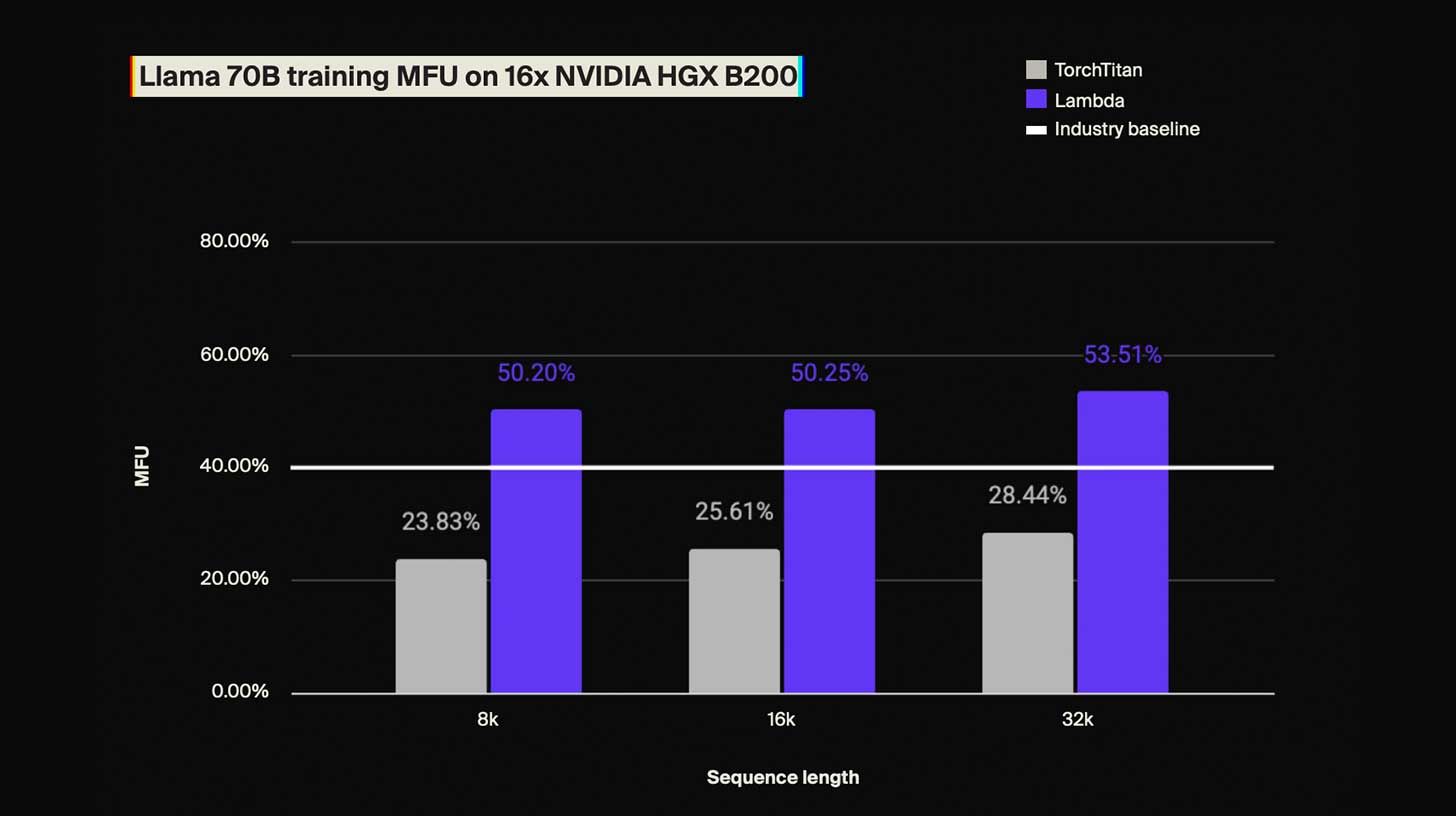

Presented by Lambda

Rundown: Most large-scale AI training uses less than half the computing power you pay for. The Lambda team discovered the root causes and built a repeatable framework that boosted efficiency by more than 25%, without changing the model itself.

The Lambda report shows you how to address the following:

- Memory inefficiencies that silently inflate your costs
- Training configurations that don’t take full advantage of your hardware
- Bottlenecks that slow down GPU communication

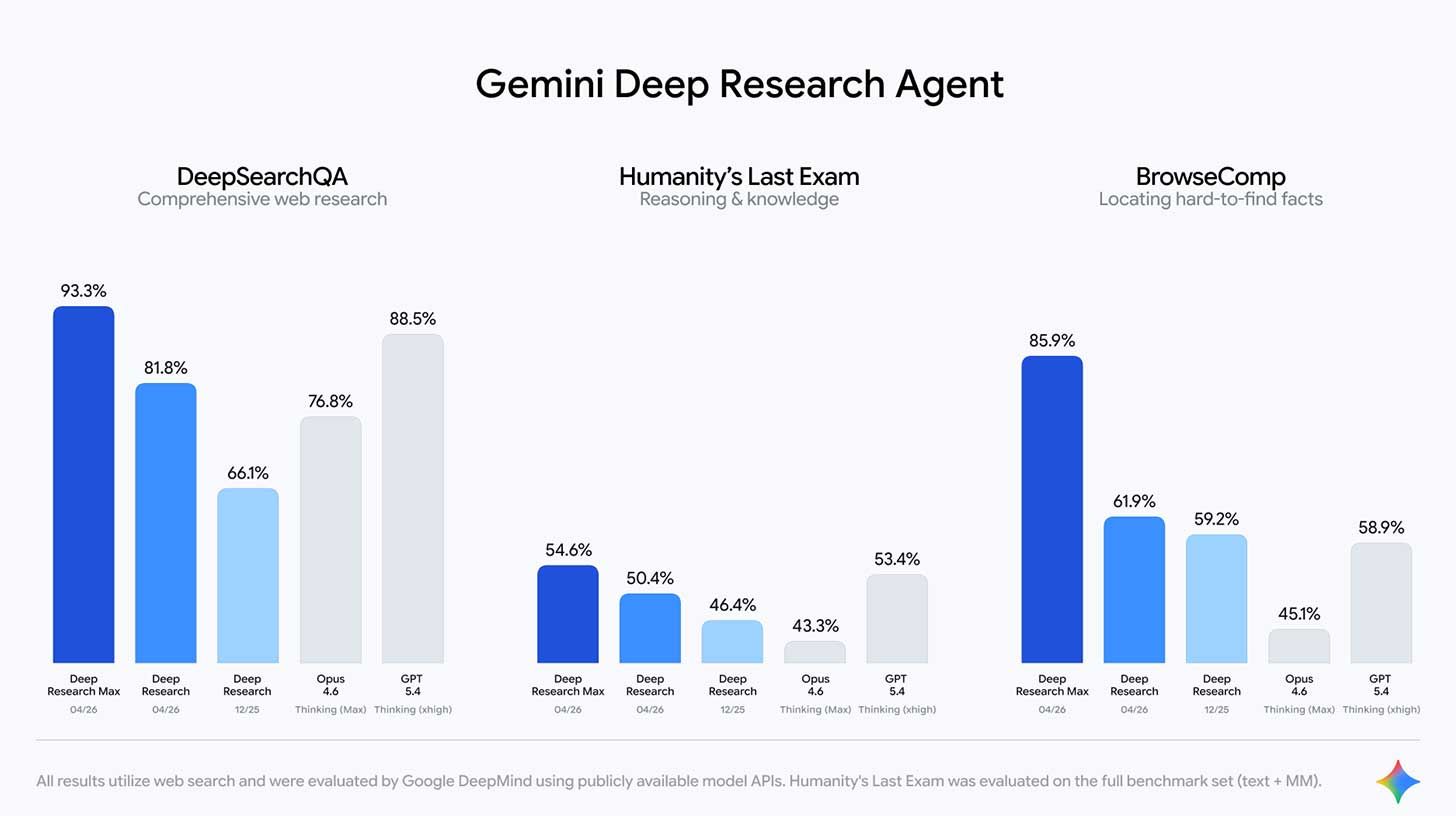

Rundown: Google released Deep Research and Deep Research Max, two SOTA agents that use Gemini 3.1 Pro to generate research reports from the web, uploaded files, or any Model Context Protocol server, complete with charts and graphs.

- Both agents use Gemini 3.1 Pro and run on the same research engine used in NotebookLM, replacing Google’s December preview of Deep Research.
- Google’s benchmarks show Max making leaps in retrieval and reasoning over both previous versions and models like Opus 4.6 and GPT 5.4.
- Users can also combine open web search with MCP servers and uploaded files, or cut off external web access to search only their own data.
- Google is already working with companies like PitchBook, S&P, and FactSet to build MCP servers that feed paid financial data directly into research workflows.

Why it matters: The extensive research work of analysts, consultants, and lawyers has been an obvious target for AI automation. Google’s move turns this threat into a priced API call that any developer can turn into a product. Expect more partnerships to follow as each sector determines which parts of its research workflow become automatable.

- 🔒 Stealth – Remove your personal data from the web so scammers and identity thieves can’t access it. Use code RUNDOWN to get a 55% discount.*

- 🔎 Deep Max – Exa’s new SOTA agentic search tool

Former OpenAI VP of Research Jerry Tworek launched Core Automation, a new AI lab building “AI for building AI” with founders from OpenAI, Anthropic, and DeepMind.

Meta poached three more employees from Mira Murati’s Thinking Machines Lab, bringing the total number of founding members who have left for the tech giant to 7.

Google open-sourced its DESIGN.md feature from Stitch, a portable file that allows AI agents to understand a project’s colors, accessibility, and brand rules.

Exa released Deep Max, a new agentic search tool that outperforms current competitors in accuracy while running up to 20 times faster.

Genspark launched Build, a new interactive coding tool powered by Claude Opus 4.7 that creates apps and websites from text prompts.

Deezer reported that 75,000 AI-generated tracks are now uploaded to its platform daily (44% of uploads), but attract only 1-3% of streams, with 85% of those streams flagged as fraudulent.

In each newsletter, we show how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Matthew S. in the United Kingdom:

“I used Claude to create my workout tracking app and exported the code to Bolt to create a web app. I have a specific set of exercises that I do each day that other trackers don’t plot or give me lines for. It lets me enter each set into each of the four sections and tells me when I’ve met my goal for the day.

“It only allows me to build my streak after I’ve completed all my workout goals and keeps a daily log of what I’ve achieved. Much easier!”

How do you use AI? Tell us here.

That’s all for today!

Before you go, we’d love to know what you thought of today’s newsletter to help us improve The Rundown experience for you.

Rowan, Joey, Zach, Shubham, and Jennifer – the humans behind The Rundown