Training leading AI models is not just a computational problem, but increasingly a networking problem. OpenAI has just introduced its own solution.

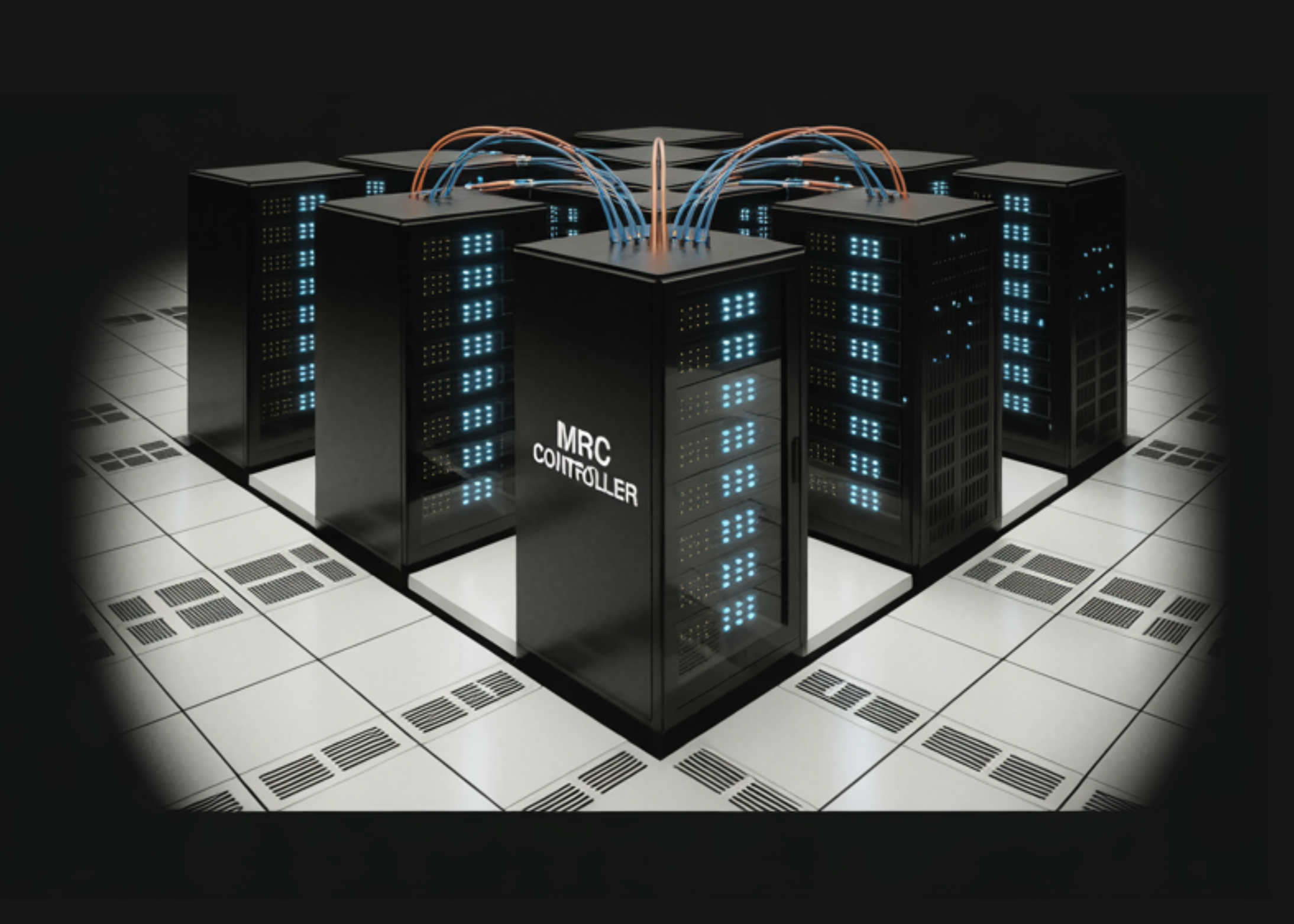

OpenAI announced the launch of MRC (Multipath Reliable Communication), a new networking protocol developed over the past two years in partnership with AMD, Broadcom, Intel, Microsoft, and NVIDIA. The specification was published through the Open Compute Project (OCP), enabling the wider industry to use and build upon it.

Why are networks the hidden bottleneck in AI training?

To understand why MRC is important, you need to understand what happens inside a supercomputer during a typical training run. When training large AI models, a single step can involve several million data transfers. A single transfer that arrives late can ripple through the entire job and leave GPUs sitting idle.

Network congestion, link failures, and hardware failures are the most common sources of delay and instability in transfers – and these issues become more frequent and more difficult to resolve as the cluster size increases. This is the growing infrastructure challenge that OpenAI set out to solve.

According to OpenAI, more than 900 million people use ChatGPT every week. Maintaining and optimizing these models at this scale means that every second of GPU idle time represents a real cost and loss of capacity. OpenAI states that its goal is “to not only build a fast network, but also build a network that provides highly predictable performance, even in the presence of failures, to keep training jobs moving forward.”

What MRC actually does: Three core mechanisms

MRC was not invented from scratch. It extends RDMA over Converged Ethernet (RoCE) – an InfiniBand Trade Association (IBTA) standard that enables hardware-accelerated remote direct memory access between GPUs and CPUs. It builds on technologies developed by the Ultra Ethernet Consortium (UEC) and extends them with SRv6-based source routing to support large-scale AI network fabrics.

RoCE is a protocol that allows one device to read or write another device's memory directly over an Ethernet network, bypassing the central processing unit (CPU) for maximum throughput. SRv6 (Segment Routing over IPv6) takes this even further – the sender encodes the exact path the packet must follow directly within the packet header, so switches no longer need to run complex routing calculations. This reduces processing load on switches and saves power – an important factor at data center scale.

1. Adaptive packet spraying to eliminate congestion

Instead of sending each transfer over a single network path, MRC distributes packets across hundreds of paths simultaneously, reducing congestion at the network core. With traditional RoCEv2, packets are pinned to a single path from point A to point B, which contributes to congestion. To overcome this problem, MRC introduces intelligent packet-spray load balancing, so that if one path is unusable, packets can take other paths through the network. This allows for greater bandwidth utilization, reduced latency, and fine-grained load balancing at the packet level.
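The effect of spraying can be illustrated with a toy simulation. This is only a sketch under stated assumptions: the path count of 256, the `spray` helper, and the uniform random choice are illustrative stand-ins, not MRC's actual load-aware selection logic.

```python
import random
from collections import Counter

def spray(num_packets, paths, unusable=frozenset(), seed=0):
    """Assign each packet to a randomly chosen usable path (hypothetical helper)."""
    rng = random.Random(seed)
    usable = [p for p in paths if p not in unusable]
    if not usable:
        raise RuntimeError("no usable path")
    return Counter(rng.choice(usable) for _ in range(num_packets))

paths = list(range(256))       # assumed: "hundreds of paths"
single_path_load = 10_000      # single-path case: every packet on one path

# Paths 3 and 7 are marked unusable, so no packet is sent over them.
load = spray(10_000, paths, unusable={3, 7})
print("busiest sprayed path carries", max(load.values()),
      "packets; a single pinned path would carry", single_path_load)
```

Because each packet picks its own path, the busiest path carries only a small fraction of the flow, and unusable paths are simply skipped.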

2. Microsecond-level failure recovery via SRv6 static source routing

When network paths, links, or switches fail, MRC can detect the problem and route around it on a microsecond time scale. Traditional network fabrics can take seconds or even tens of seconds to stabilize after failures. A key architectural decision makes this possible: switches do not need to recalculate routes or do anything other than blindly follow the static routes with which they are configured. All routing information lives at the network interface card (NIC) level, not the switch level. This is a deliberately unconventional design – it disables dynamic routing in the switches entirely to prevent the two adaptive mechanisms from interfering with each other.
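The division of labor described above, with path choice at the NIC and plain forwarding in the switches, can be sketched as follows. The class and function names and the switch names (`leaf-a`, `spine-1`, and so on) are hypothetical, and real SRv6 carries IPv6 segment identifiers in a routing header rather than strings; this only illustrates the idea.

```python
from dataclasses import dataclass, field

@dataclass
class Packet:
    payload: bytes
    segments: list                      # switch names still to visit, chosen by the sender
    trace: list = field(default_factory=list)

def switch_forward(pkt):
    """A switch computes no routes: it pops the next segment and forwards."""
    hop = pkt.segments.pop(0)
    pkt.trace.append(hop)
    return hop

class SenderNic:
    """Holds all routing state: candidate paths and known-failed switches."""
    def __init__(self, candidate_paths):
        self.candidate_paths = candidate_paths
        self.failed = set()

    def mark_failed(self, switch):
        self.failed.add(switch)

    def send(self, payload):
        # Pick the first candidate path that avoids every failed switch.
        for path in self.candidate_paths:
            if self.failed.isdisjoint(path):
                return Packet(payload, list(path))
        raise RuntimeError("no healthy path left")

def deliver(pkt):
    while pkt.segments:
        switch_forward(pkt)
    return pkt.trace

nic = SenderNic([
    ("leaf-a", "spine-1", "leaf-b"),
    ("leaf-a", "spine-2", "leaf-b"),
])
print(deliver(nic.send(b"grad")))   # ['leaf-a', 'spine-1', 'leaf-b']
nic.mark_failed("spine-1")
print(deliver(nic.send(b"grad")))   # ['leaf-a', 'spine-2', 'leaf-b']
```

Recovery here needs no switch reconfiguration: the sender simply stops emitting packets whose segment list crosses the failed switch.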

Before MRC, if the link between the GPU network interface and the tier-0 switch failed, the training job would fail. With MRC, the job proceeds with reasonable performance. If an 8-port network interface loses one port, the maximum rate is reduced by an eighth. MRC detects this, recalculates routes to avoid the failed plane, and immediately tells peers not to use that plane for incoming traffic. Most failed links recover within one minute, at which point MRC returns the plane to service.
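The one-eighth figure follows directly from the port count. A minimal sketch, assuming an 800 Gb/s interface split evenly into eight planes; the `MultiPlaneNic` class is hypothetical and models only the bookkeeping, not detection or peer notification:

```python
class MultiPlaneNic:
    """Tracks which planes of a multi-port interface are currently usable."""
    def __init__(self, num_planes=8, total_gbps=800):
        self.num_planes = num_planes
        self.per_plane_gbps = total_gbps / num_planes
        self.healthy = set(range(num_planes))

    def fail(self, plane):
        self.healthy.discard(plane)

    def recover(self, plane):
        if not 0 <= plane < self.num_planes:
            raise ValueError("unknown plane")
        self.healthy.add(plane)

    def max_rate_gbps(self):
        return self.per_plane_gbps * len(self.healthy)

nic = MultiPlaneNic()
nic.fail(3)
print(nic.max_rate_gbps())   # 700.0, one eighth below the full 800
nic.recover(3)
print(nic.max_rate_gbps())   # 800.0 once the plane is back in service
```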

3. Multi-plane networks with fewer switch tiers and lower cost

This is where MRC fundamentally changes the cluster architecture. Instead of treating each network interface as a single 800 Gb/s link, it is split into several smaller links. For example, one interface can connect to eight different switches. A switch that can connect 64 ports at 800 Gb/s can connect 512 ports at 100 Gb/s instead. This allows a network to be created that fully connects approximately 131,000 GPUs with only two tiers of switches. A traditional 800 Gb/s network requires three or four tiers.
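The numbers above are consistent with the standard full-bandwidth leaf-spine calculation, sketched here. The formula (each leaf uses half its ports for hosts and half for uplinks, with up to one leaf per spine port) is a textbook assumption, not something the source states, and the function names are illustrative.

```python
def split_radix(ports, port_gbps, lane_gbps):
    """Port count when each fast port is broken out into slower links."""
    return ports * port_gbps // lane_gbps

def two_tier_hosts(radix):
    """Hosts in a full-bandwidth two-tier fabric built from `radix`-port switches.

    Each leaf uses radix/2 ports down and radix/2 up; each spine port reaches
    a different leaf, so there can be up to `radix` leaves.
    """
    return radix * (radix // 2)

radix = split_radix(64, 800, 100)
print(radix)                    # 512
print(two_tier_hosts(radix))    # 131072 endpoints per 100 Gb/s plane
print(two_tier_hosts(64))       # 2048 with unsplit 800 Gb/s ports
```

With eight parallel planes, each GPU interface has one 100 Gb/s port in every plane, so the same roughly 131,000 GPUs keep their full 800 Gb/s in aggregate.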

The savings are twofold: the research team estimates that, for full bandwidth, a two-tier multi-plane design requires 2/3 the number of optics and 3/5 the number of switches compared with a three-tier network. Fewer tiers of switches also mean lower latency – the longest path passes through only three switches instead of five or seven – and a smaller blast radius when any individual component fails.
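The hop counts quoted above match the worst case in a folded Clos fabric, where a packet climbs to the top tier and descends again. A small sketch under that assumption (the helper name is illustrative):

```python
def max_switch_hops(tiers):
    """Worst-case switches traversed: up (tiers - 1), the top switch, down (tiers - 1)."""
    return 2 * tiers - 1

for tiers in (2, 3, 4):
    print(tiers, "tiers ->", max_switch_hops(tiers), "switches")
```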

Hardware: Which NICs and switches run MRC

According to the research paper, MRC is already running in production on specific named devices. It is implemented across 400 and 800 Gb/s RDMA NICs – including NVIDIA ConnectX-8, AMD Pollara, AMD Vulcano and Broadcom Thor Ultra – with SRv6 switch support on NVIDIA Spectrum-4 and Spectrum-5 (running Cumulus and SONiC) and Broadcom Tomahawk 5 via Arista EOS. On the protocol side, AMD contributed the NSCC congestion control algorithm, which is now part of the UEC congestion control specification, along with IB/RDMA transport semantic layer extensions that allow MRC to integrate with existing RDMA programming models while adding multipath capabilities that set it apart from traditional transports.

Already in production: From Stargate to Fairwater

MRC is not just a prototype. It has already been deployed across all of OpenAI’s largest NVIDIA GB200 supercomputers used to train leading models, including the Oracle Cloud Infrastructure (OCI)-powered site in Abilene, Texas, and Microsoft’s Fairwater supercomputers in Atlanta and Wisconsin. MRC was used to train several OpenAI models, taking advantage of hardware from NVIDIA and Broadcom.

MRC has been used specifically to train large frontier language models for ChatGPT and Codex. While training a recent frontier model, OpenAI had to reboot four tier-1 switches. With MRC, the company did not need to coordinate the reboot with the teams running training jobs on the cluster.

Key takeaways

- OpenAI releases MRC – OpenAI has partnered with AMD, Broadcom, Intel, Microsoft, and NVIDIA to release MRC (Multipath Reliable Communication) through the Open Compute Project (OCP).

- Packet spraying cuts congestion – MRC distributes packets across hundreds of paths simultaneously, reducing core congestion and latency during large-scale GPU training.

- Microsecond failure recovery – MRC detects link and switch failures and redirects traffic in microseconds, keeping training jobs alive through failures that would previously have killed the entire job.

- Two-tier topology for about 131,000 GPUs – By dividing 800 Gb/s interfaces into eight 100 Gb/s planes, MRC supports supercomputers with more than 100,000 GPUs using only two tiers of switches instead of three or four.

- Already used for ChatGPT and Codex – MRC has already been deployed across OpenAI’s largest NVIDIA GB200 supercomputers and has been used to train large frontier language models for ChatGPT and Codex.
