Most developers treat prompting as an afterthought: write something sensible, check the output, and iterate if necessary. This approach works until reliability becomes paramount. As LLMs move into production systems, the difference between a prompt that usually works and one that works consistently becomes an engineering concern. In response, the research community has formalized prompting into a set of well-defined techniques, each designed to address a specific failure mode, whether in structure, reasoning, or style. These methods work entirely at the prompt layer and do not require any fine-tuning, model changes, or infrastructure upgrades.

This article focuses on five such techniques: role-specific prompting, negative prompting, JSON prompting, Attentive Reasoning Queries (ARQ), and verbalized sampling. Rather than covering familiar baselines such as zero-shot or basic chain-of-thought prompting, the focus here is on what changes when these techniques are applied. Each is illustrated through a side-by-side comparison on the same task, highlighting the impact on output quality and explaining the underlying mechanism.

Here we set up a minimal environment to interact with the OpenAI API. We load the API key securely at runtime using getpass, initialize the client, and define a lightweight chat wrapper that sends system and user prompts to the model (gpt-4o-mini). This keeps the experiment loop clean and reusable, so we can focus only on the prompt variations.

The helper functions (section and divider) only format the output, making it easier to compare the baseline and enhanced prompts side by side. If you don't already have an API key, you can create one from the official dashboard: https://platform.openai.com/api-keys

import json

from openai import OpenAI

import os

from getpass import getpass

os.environ['OPENAI_API_KEY'] = getpass('Enter OpenAI API Key: ')

client = OpenAI()

MODEL = "gpt-4o-mini"

def chat(system: str, user: str, **kwargs) -andgt; str:

"""Minimal wrapper around the chat completions endpoint."""

response = client.chat.completions.create(

model=MODEL,

messages=[

{"role": "system", "content": system},

{"role": "user", "content": user},

],

**kwargs,

)

return response.choices[0].message.content

def section(title: str) -andgt; None:

print()

print("=" * 60)

print(f" {title}")

print("=" * 60)

def divider(label: str) -andgt; None:

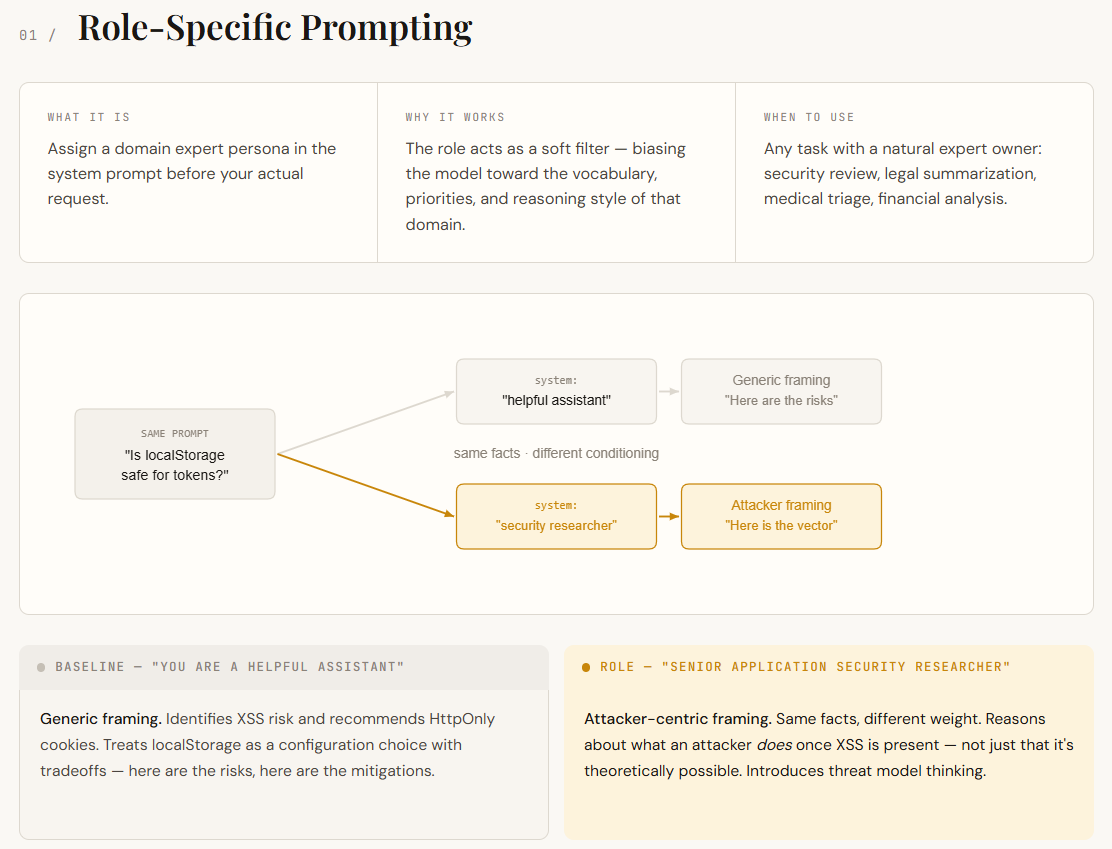

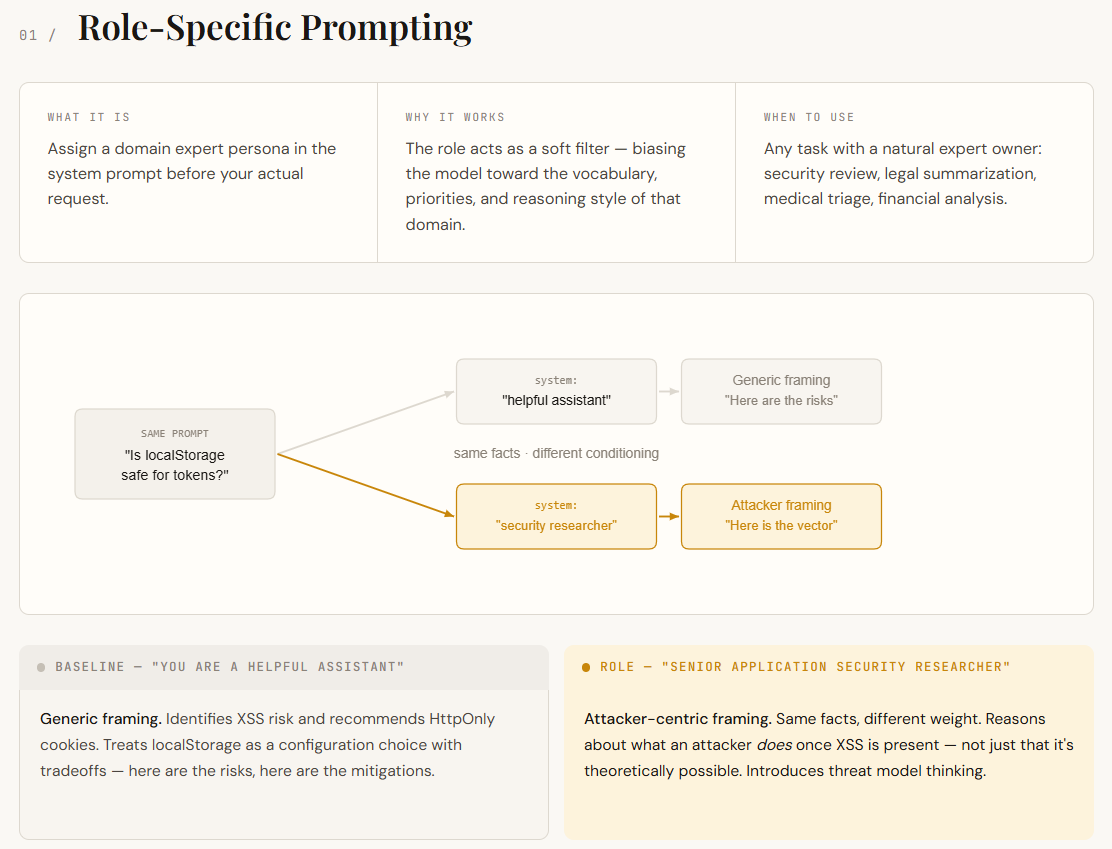

print(f"\n── {label} {'─' * (54 - len(label))}")Language models are trained on a wide range of domains, such as security, marketing, law, engineering, and more. When you don’t specify a role, the model pulls from all of them, resulting in generally correct but somewhat general answers. A role prompt fixes this issue by assigning a persona at the system prompt (for example, “You are a senior application security researcher”). This acts as a filter, prompting the model to respond using the language, priorities, and thinking style of that domain.

In this example, both responses identify XSS risks and recommend HttpOnly cookies; the basic facts are identical. The difference lies in how the model frames the problem. The baseline treats localStorage as a configuration choice with some trade-offs. The role-specific response treats it as an attack surface: it considers what an attacker could do once XSS exists, not just that XSS is theoretically possible. This shift in framing, from "here are the risks" to "here is what an attacker does with these risks", is the conditioning effect in action. No new information was provided. The prompt only changed which part of the model's knowledge was weighted.
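A persona like the one described above can be assembled from a few parts, which makes it easier to swap roles across experiments. The following is a minimal sketch, not a prescribed method: the function name `build_role_prompt` and its parameters are hypothetical and introduced here only for illustration.

```python
def build_role_prompt(role: str, specialty: str, lenses: list[str]) -> str:
    """Compose a persona-style system prompt (hypothetical helper).

    role      -- who the model should act as
    specialty -- the narrower domain the role focuses on
    lenses    -- the concepts the role should reason with
    """
    if not lenses:
        raise ValueError("provide at least one lens")
    if len(lenses) == 1:
        lens_text = lenses[0]
    elif len(lenses) == 2:
        lens_text = f"{lenses[0]} and {lenses[1]}"
    else:
        lens_text = ", ".join(lenses[:-1]) + ", and " + lenses[-1]
    return (
        f"You are a {role} specializing in {specialty}. "
        f"You think in terms of {lens_text}."
    )


security_prompt = build_role_prompt(
    role="senior application security researcher",
    specialty="web authentication vulnerabilities",
    lenses=["attack surface", "threat models", "OWASP guidelines"],
)
print(security_prompt)
```

The resulting string can be passed as the system prompt in place of a generic "helpful assistant" instruction.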

section("TECHNIQUE 1 -- Role-Specific Prompting")

QUESTION = "Our web app stores session tokens in localStorage. Is this a problem?"

baseline_1 = chat(

system="You are a helpful assistant.",

user=QUESTION,

)

role_specific = chat(

system=(

"You are a senior application security researcher specializing in "

"web authentication vulnerabilities. You think in terms of attack "

"surface, threat models, and OWASP guidelines."

),

user=QUESTION,

)

divider("Baseline")

print(baseline_1)

divider("Role-specific (security researcher)")

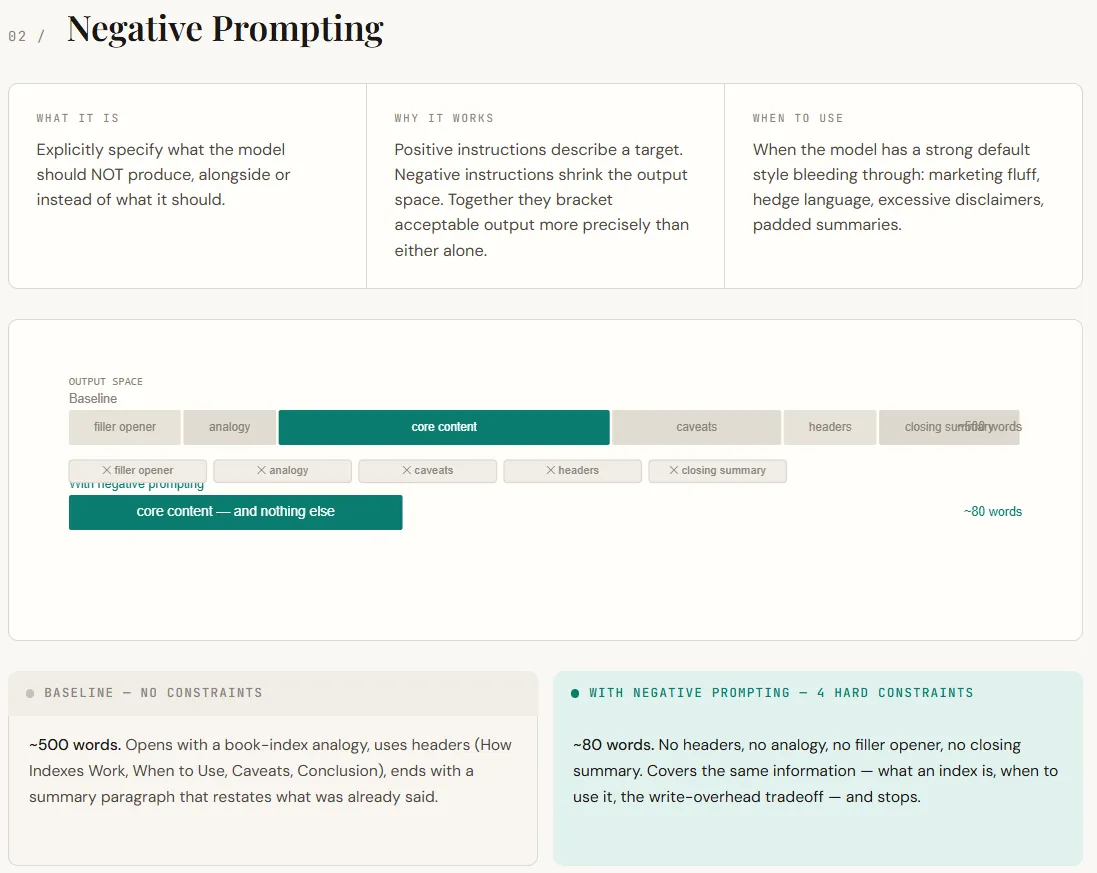

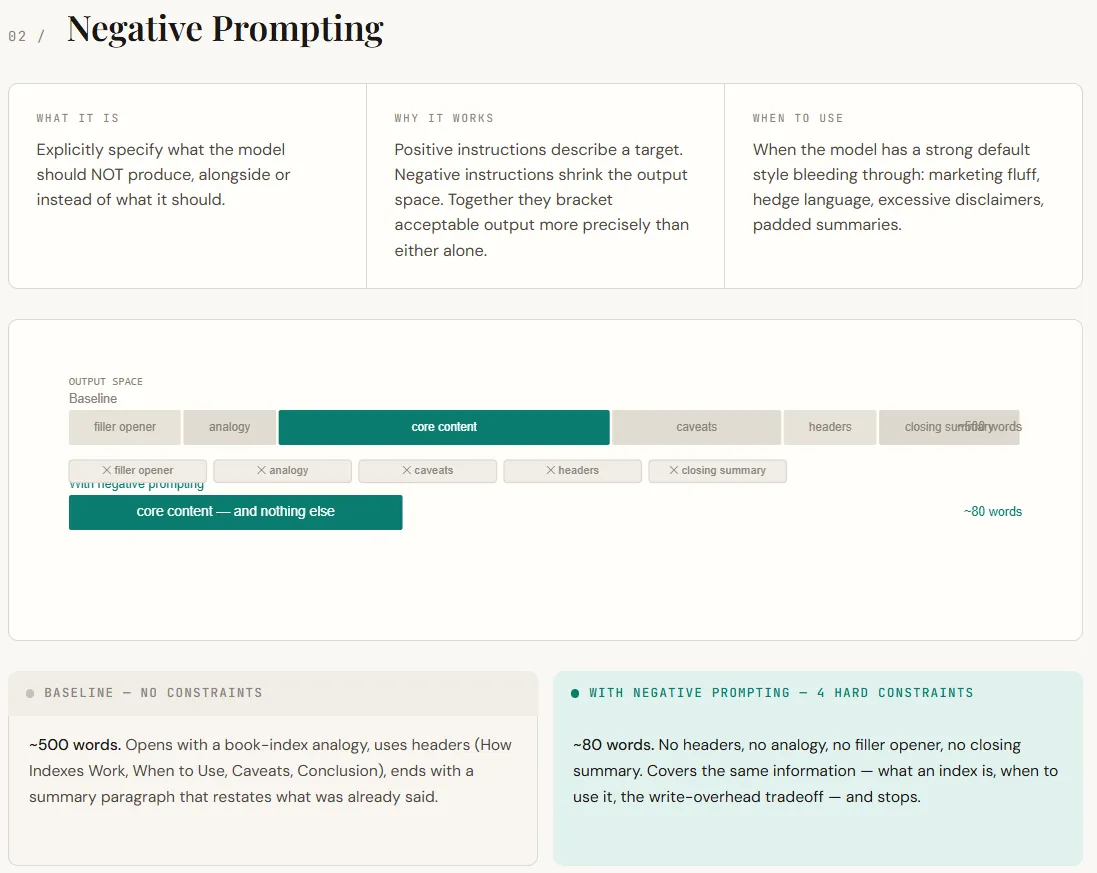

print(role_specific)Negative motivation focuses on telling the model what not to do. By default, LLM students follow the patterns learned during training and RLHF – adding friendly openings, analogies, hedging (“it depends”), and concluding summaries. While this makes the answers seem helpful, it often adds unnecessary noise in technical contexts. The negative prompt works by removing these default settings. Instead of simply describing desired outputs, you can also restrict unwanted behaviors, narrowing the model’s output space and leading to more accurate responses.

In the output, the difference is immediately apparent. The baseline response sprawls into a long structured explanation with analogies, headings, and a redundant conclusion. The negative-prompted version provides the same core information in a much shorter form: direct, concise, and without filler. Nothing essential is lost; the prompt simply removes the model's tendency to over-explain and pad the response.
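One way to check whether the restrictions actually took effect is to scan a response for the phrases you banned. The sketch below is an assumption-laden illustration: `FILLER_PATTERNS` and `find_filler` are hypothetical names, the pattern list is only a starting point, and a plain text match cannot tell whether an "it depends" was immediately resolved.

```python
import re

# Hypothetical filler patterns; tune the list to the defaults you see in your own outputs.
FILLER_PATTERNS = [
    r"\bgreat question\b",
    r"\bcertainly\b",
    r"\bit depends\b",
    r"\bin (?:summary|conclusion)\b",
]


def find_filler(text: str) -> list[str]:
    """Return the filler phrases found in `text`, lowercased, in pattern order."""
    found = []
    for pattern in FILLER_PATTERNS:
        match = re.search(pattern, text, flags=re.IGNORECASE)
        if match:
            found.append(match.group(0).lower())
    return found


# Illustrative strings, not real model output.
padded = "Great question! An index speeds up lookups. In summary, it depends."
terse = "An index is a sorted structure that lets the database skip full table scans."

print(find_filler(padded))
print(find_filler(terse))
```

Running the same check on the baseline and the negative-prompted response gives a rough, repeatable signal of how much filler the rules removed.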

section("TECHNIQUE 2 -- Negative Prompting")

TOPIC = "Explain what a database index is and when you'd use one."

baseline_2 = chat(

system="You are a helpful assistant.",

user=TOPIC,

)

negative = chat(

system=(

"You are a senior backend engineer writing internal documentation.\n"

"Rules:\n"

"- Do NOT use marketing language or filler phrases like 'great question' or 'certainly'.\n"

"- Do NOT include caveats like 'it depends' without immediately resolving them.\n"

"- Do NOT use analogies unless they are necessary. If you use one, keep it to one sentence.\n"

"- Do NOT pad the response -- if you've made the point, stop.\n"

),

user=TOPIC,

)

divider("Baseline")

print(baseline_2)

divider("With negative prompting")

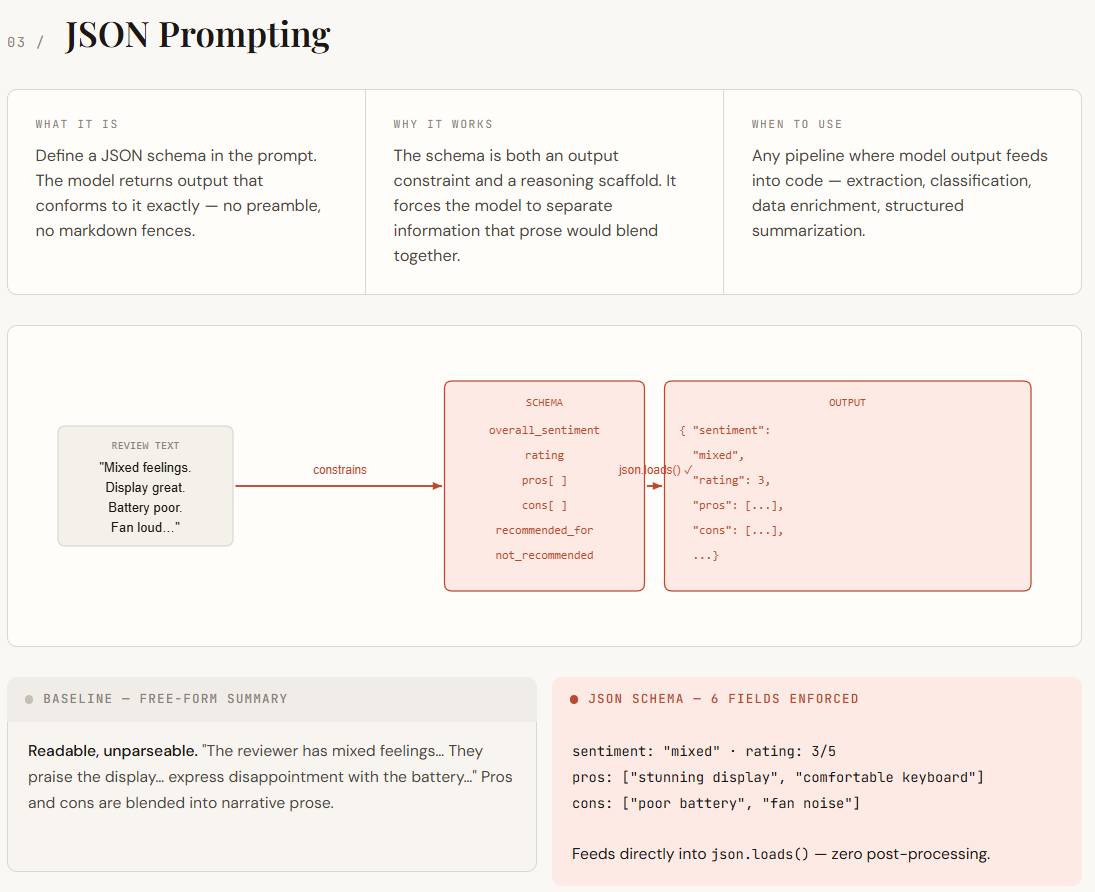

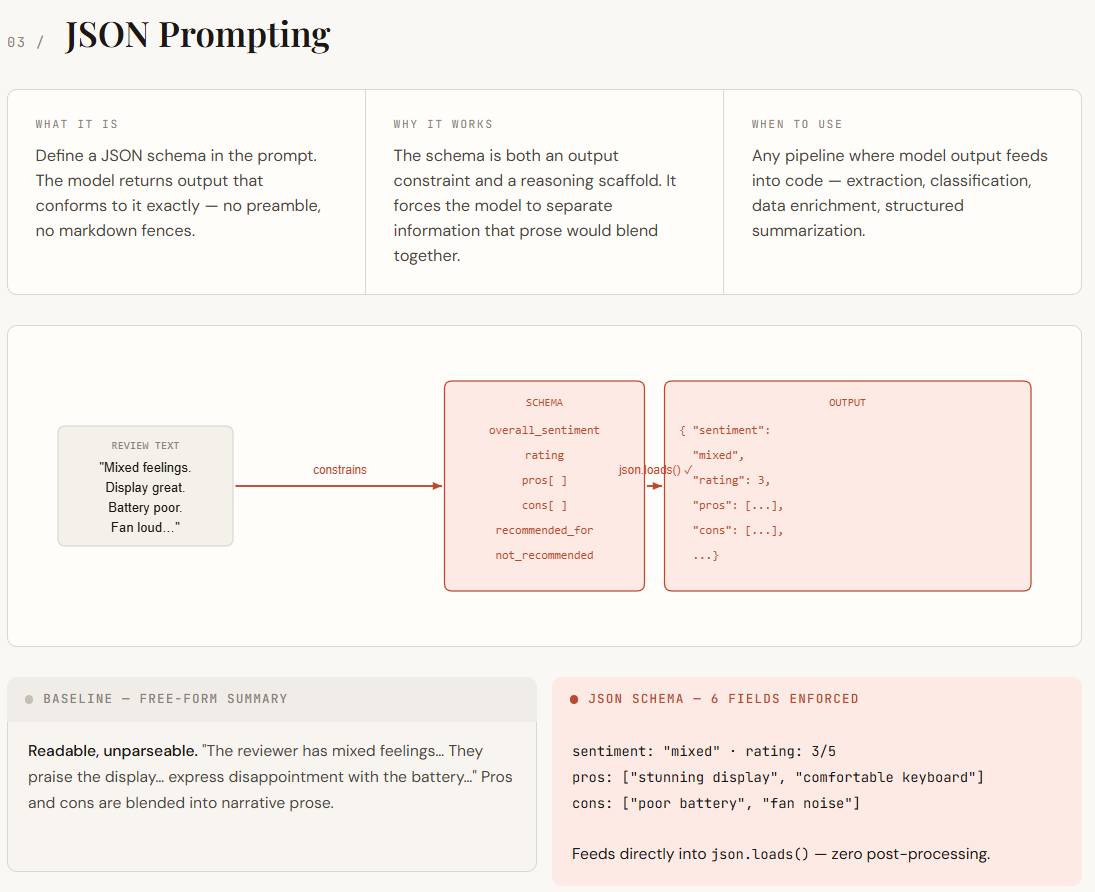

print(negative)JSON prompting becomes important when the LLM output needs to be consumed by code rather than just read by humans. Free-form responses are inconsistent, the structure varies, essential details are included in paragraphs, and small changes in wording break the logic of the analysis. By specifying a JSON schema at the prompt, you can turn the structure into a strict constraint. This not only standardizes the output format, but also forces the model to organize its logic into clearly defined areas such as pros, cons, sentiment, and rating.

In the output, the difference is clear. The baseline response is readable but unstructured: positives, negatives, and sentiment are mixed into narrative text, making it difficult to parse. The JSON-prompted version, by contrast, returns clean, well-defined fields that can be loaded directly and used in code without any post-processing. Information that was previously implicit is now explicit and discrete, making it easier to store, query, and compare outputs at scale.
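Because the schema is enforced only by the prompt, a model can still occasionally wrap the object in a markdown fence or drop a field. A defensive parser along the following lines can catch that before the data reaches downstream code. This is a minimal sketch under stated assumptions: `parse_model_json` and `REQUIRED_KEYS` are hypothetical names, and the key set simply mirrors the fields used for the review example in this article.

```python
import json

FENCE = "`" * 3  # markdown code fence marker, built indirectly to keep this example readable

# Assumed to match the fields requested in the prompt's schema.
REQUIRED_KEYS = {
    "overall_sentiment", "rating", "pros", "cons",
    "recommended_for", "not_recommended_for",
}


def parse_model_json(raw: str) -> dict:
    """Parse a model reply as JSON, tolerating an accidental markdown fence."""
    text = raw.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line (with or without a language tag) ...
        text = text.split("\n", 1)[1] if "\n" in text else ""
        # ... and everything from the closing fence onward.
        text = text.rsplit(FENCE, 1)[0]
    data = json.loads(text)  # raises json.JSONDecodeError on malformed output
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


# Illustrative reply, not real model output.
reply = FENCE + 'json\n{"overall_sentiment": "mixed", "rating": 3, "pros": ["display"], ' \
    '"cons": ["battery life"], "recommended_for": "light work", ' \
    '"not_recommended_for": "heavy software"}\n' + FENCE
print(parse_model_json(reply)["overall_sentiment"])
```

Failing loudly on a missing key is a deliberate choice here: a silent default would hide exactly the kind of inconsistency that JSON prompting is meant to remove.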

section("TECHNIQUE 3 -- JSON Prompting")

REVIEW = """

Honestly mixed feelings about this laptop. The display is stunning -- easily the best I've

seen at this price range -- and the keyboard is surprisingly comfortable for long sessions.

Battery life, on the other hand, barely gets me through a 6-hour workday, which is

disappointing. Fan noise under load is also pretty aggressive. For light work it's great,

but I wouldn't recommend it for anyone who needs to run heavy software.

"""

SCHEMA = """

{

"overall_sentiment": "positive | negative | mixed",

"rating": ,

"pros": ["", ...],

"cons": ["", ...],

"recommended_for": "",

"not_recommended_for": ""

}

"""

baseline_3 = chat(

system="You are a helpful assistant.",

user=f"Summarize this product review:\n\n{REVIEW}",

)

json_output = chat(

system=(

"You are a product review parser. Extract structured information from reviews.\n"

"You MUST return only a valid JSON object. No preamble, no explanation, no markdown fences.\n"

f"The JSON must match this schema exactly:\n{SCHEMA}"

),

user=f"Parse this review:\n\n{REVIEW}",

)

divider("Baseline (free-form)")

print(baseline_3)

divider("JSON prompting (raw output)")

print(json_output)

divider("Parsed andamp; usable in code")

parsed = json.loads(json_output)

print(f"Sentiment : {parsed['overall_sentiment']}")

print(f"Rating : {parsed['rating']}/5")

print(f"Pros : {', '.join(parsed['pros'])}")

print(f"Cons : {', '.join(parsed['cons'])}")

print(f"Recommended for : {parsed['recommended_for']}")

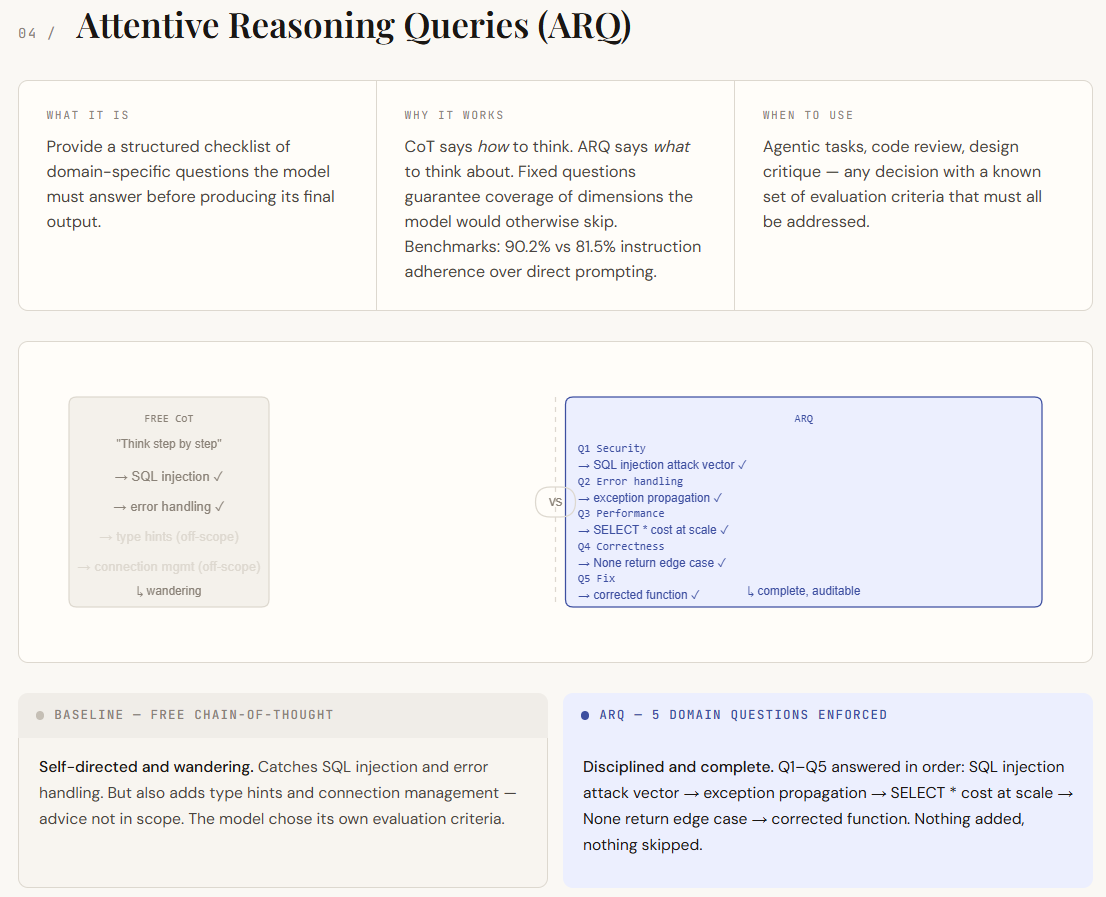

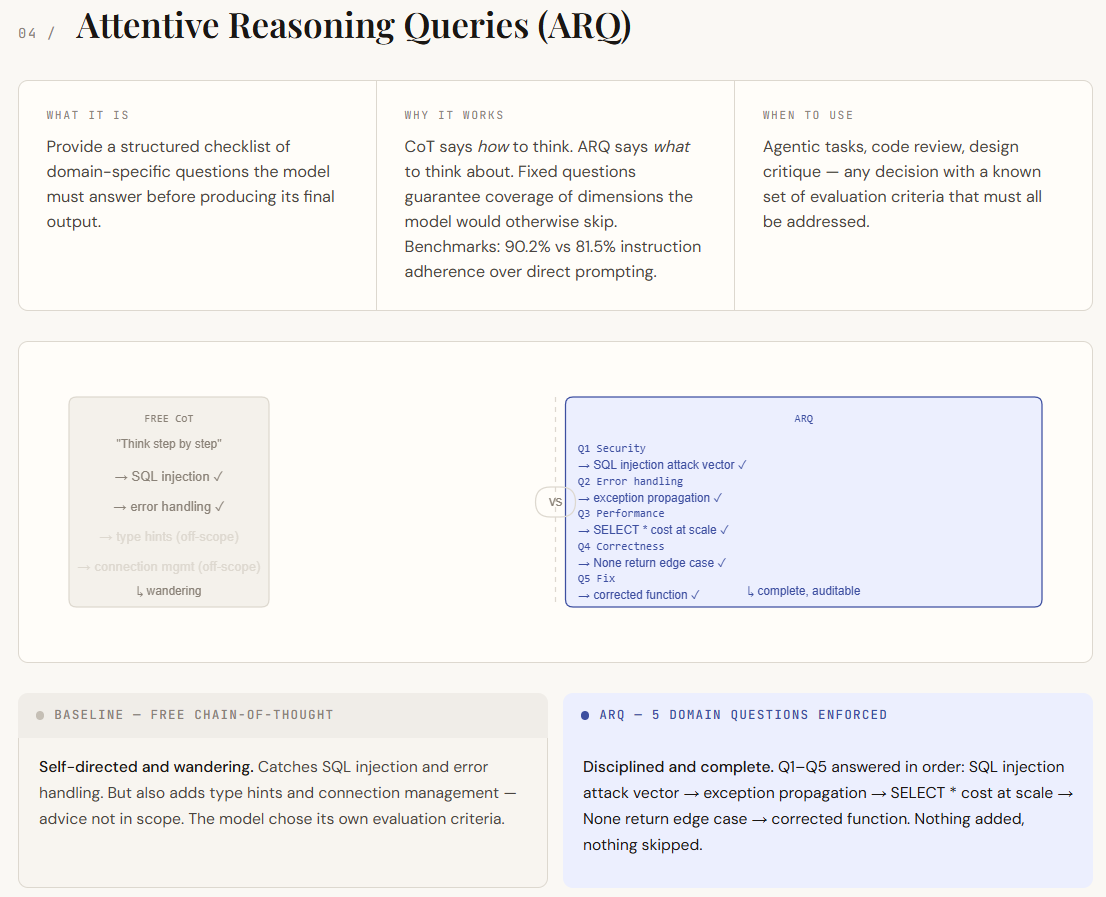

print(f"Avoid if : {parsed['not_recommended_for']}") Attentive reasoning queries (ARQ) rely on stimulating train of thought but eliminate their biggest weakness, which is unstructured reasoning. In standard CoT, the model decides what to focus on, which may lead to gaps or irrelevant details. ARQ replaces this with a fixed set of domain-specific questions that the model must answer in order. This ensures that all important aspects are covered, transferring control from the model to the immediate designer. Rather than simply directing how the model should think, ARQ specifies what it should think.

In the output, the difference is evident in discipline and coverage. The baseline CoT response identifies the key issues but drifts into less important areas and misses deeper analysis in places. The ARQ version, by contrast, systematically addresses each required point, clearly isolating vulnerabilities, handling edge cases, and assessing performance implications. Each question acts as a checkpoint, making the response more organized, more complete, and easier to audit.
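Since each question acts as a checkpoint, the checklist can be generated from data and the response can be checked for coverage afterwards. The sketch below illustrates that idea under assumptions: `ARQ_ITEMS`, `build_arq_block`, and `missing_checkpoints` are hypothetical names, and the coverage check assumes the model echoes the `Q1`, `Q2`, ... tags in its answer, which is not guaranteed.

```python
import re

# Hypothetical (label, question) pairs in the checklist style used in this article.
ARQ_ITEMS = [
    ("Security", "Does this code have any injection vulnerabilities?"),
    ("Error handling", "What happens if db.execute() throws an exception?"),
    ("Performance", "Does this query retrieve more data than necessary?"),
]


def build_arq_block(items: list[tuple[str, str]]) -> str:
    """Render numbered, labelled questions the model must answer in order."""
    lines = ["Before giving your final review, answer each of the following questions in order:"]
    for number, (label, question) in enumerate(items, start=1):
        lines.append(f"Q{number} [{label}]: {question}")
    return "\n".join(lines)


def missing_checkpoints(response: str, items: list[tuple[str, str]]) -> list[str]:
    """Return the question tags (Q1, Q2, ...) that never appear in the response."""
    missing = []
    for number in range(1, len(items) + 1):
        tag = f"Q{number}"
        # Word boundaries keep Q1 from matching inside Q10.
        if not re.search(rf"\b{tag}\b", response):
            missing.append(tag)
    return missing


print(build_arq_block(ARQ_ITEMS))

# Illustrative response text, not real model output.
partial = "Q1: yes, SQL injection via user_id.\nQ3: SELECT * fetches unused columns."
print(missing_checkpoints(partial, ARQ_ITEMS))
```

A non-empty result is a cheap signal to retry or flag the response before a human reads it.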

section("TECHNIQUE 4 -- Attentive Reasoning Queries (ARQ)")

CODE_TO_REVIEW = """

def get_user(user_id):

query = f"SELECT * FROM users WHERE id = {user_id}"

result = db.execute(query)

return result[0] if result else None

"""

ARQ_QUESTIONS = """

Before giving your final review, answer each of the following questions in order:

Q1 [Security]: Does this code have any injection vulnerabilities?

If yes, describe the exact attack vector.

Q2 [Error handling]: What happens if db.execute() throws an exception?

Is that acceptable?

Q3 [Performance]: Does this query retrieve more data than necessary?

What is the cost at scale?

Q4 [Correctness]: Are there edge cases in the return logic that could

cause a silent bug downstream?

Q5 [Fix]: Write a corrected version of the function that addresses

all issues found above.

"""

baseline_cot = chat(

system="You are a senior software engineer. Think step by step.",

user=f"Review this Python function:\n\n{CODE_TO_REVIEW}",

)

arq_result = chat(

system="You are a senior software engineer conducting a security-aware code review.",

user=f"Review this Python function:\n\n{CODE_TO_REVIEW}\n\n{ARQ_QUESTIONS}",

)

divider("Baseline (free CoT)")

print(baseline_cot)

divider("ARQ (structured reasoning checklist)")

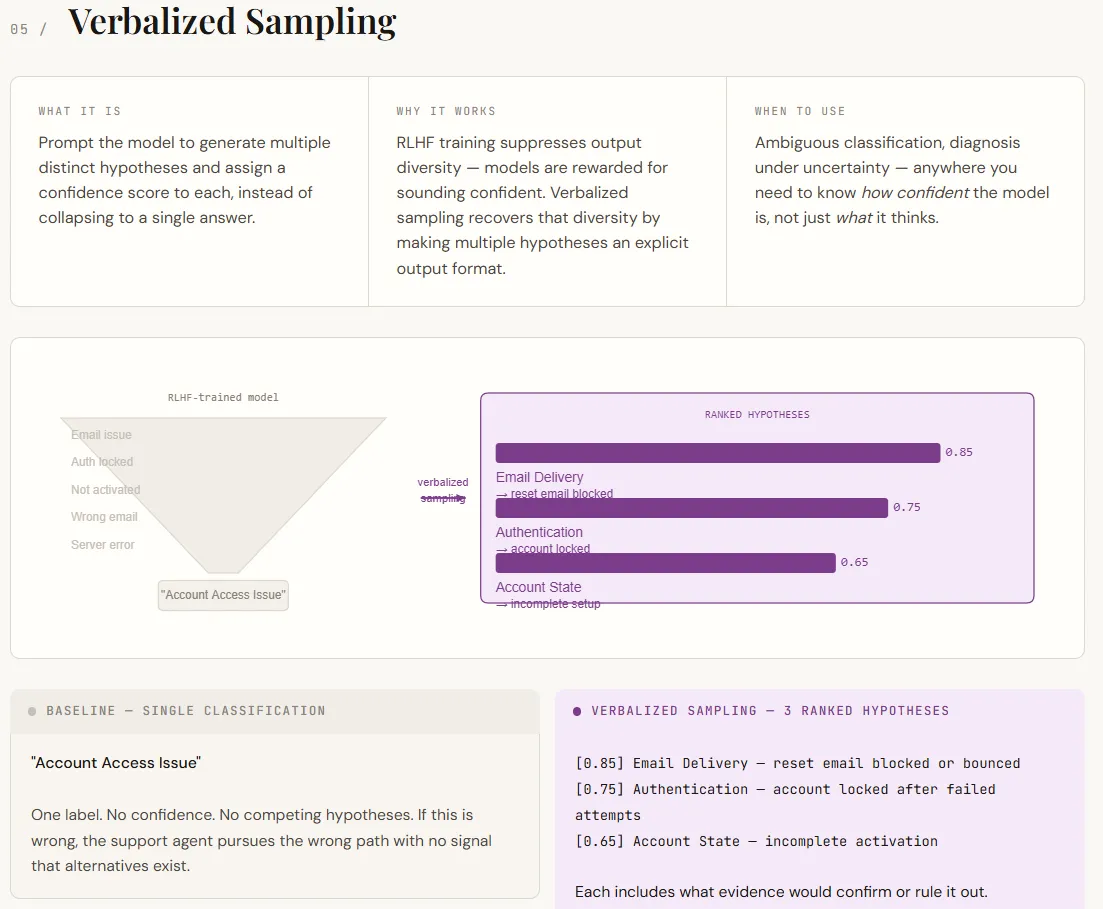

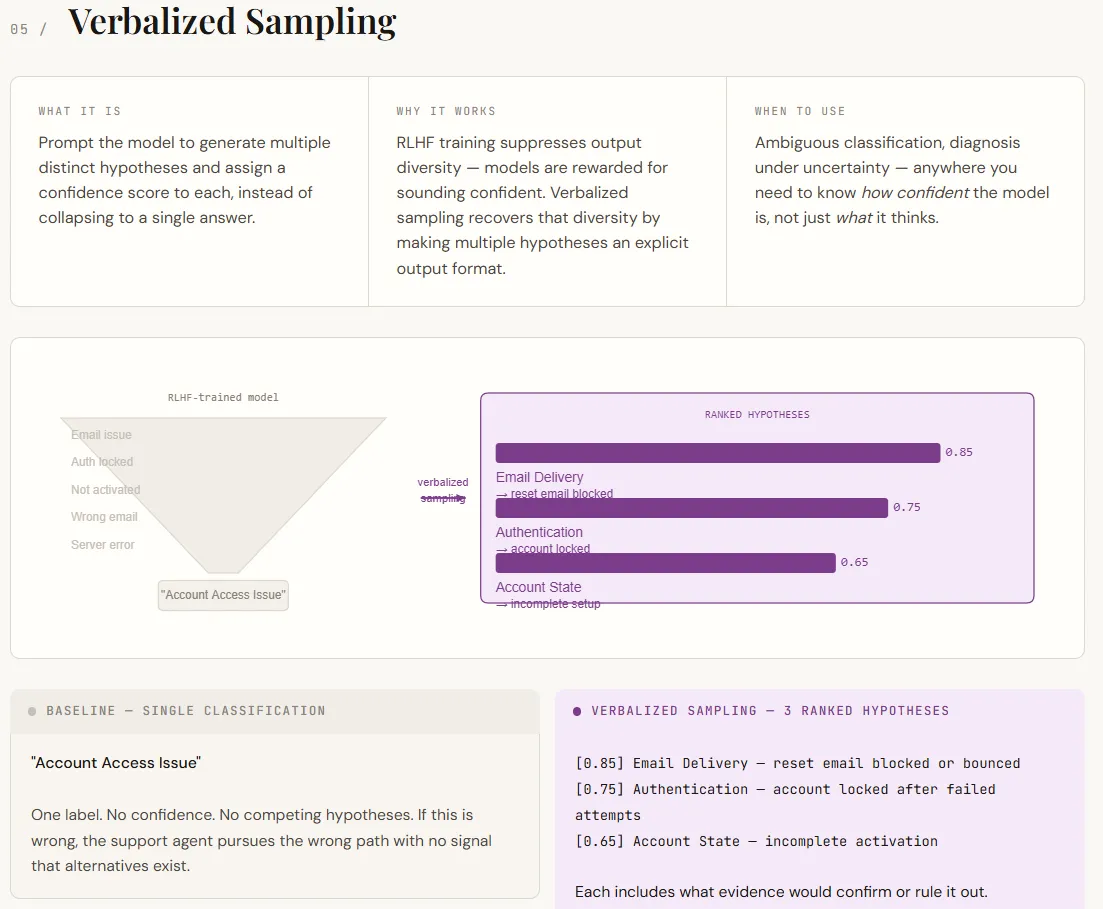

print(arq_result)Verbal samples address one of the major limitations of MBA: they tend to provide one confident answer even when multiple interpretations are possible. This happens because alignment training favors decisive results. As a result, the model hides internal uncertainty. Verbal sampling fixes this problem by explicitly requiring multiple hypotheses, along with confidence ratings and supporting evidence. Instead of forcing a single answer, it highlights a range of plausible outcomes – all within the prompt, without the need for model changes.

In the output, this transforms the result from a single label into a structured diagnostic view. The baseline provides a single classification with no indication of uncertainty. The verbalized version, by contrast, lists multiple hypotheses in order, each with an explanation and a way to confirm or rule it out. This makes the output more actionable, turning it into a decision aid rather than just an answer. The confidence scores do not represent exact probabilities, but they do indicate relative likelihood, which is often sufficient for prioritization and downstream workflows.
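Because the scores are best read as relative likelihoods, it can help to rescale them before using them for prioritization. The following sketch assumes the hypotheses have already been extracted into dictionaries with `category` and `confidence` keys (for example, by combining this technique with JSON prompting); that structure and the name `relative_weights` are assumptions made for illustration.

```python
def relative_weights(hypotheses: list[dict]) -> list[dict]:
    """Rescale verbalized confidence scores so they sum to 1, highest first."""
    total = sum(h["confidence"] for h in hypotheses)
    if total <= 0:
        raise ValueError("confidence scores must sum to a positive value")
    ranked = sorted(hypotheses, key=lambda h: h["confidence"], reverse=True)
    # Copy each hypothesis and attach its share of the total confidence.
    return [{**h, "weight": round(h["confidence"] / total, 3)} for h in ranked]


# Illustrative values only, not real model output. Note the raw scores sum to 1.2.
example = [
    {"category": "Account State", "confidence": 0.4},
    {"category": "Email Delivery", "confidence": 0.6},
    {"category": "Authentication", "confidence": 0.2},
]

for hypothesis in relative_weights(example):
    print(f"{hypothesis['category']:<15} {hypothesis['weight']}")
```

The rescaled weights are only as meaningful as the model's ordering; they make comparisons across tickets easier but do not turn the scores into calibrated probabilities.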

    section("TECHNIQUE 5 -- Verbalized Sampling")

    SUPPORT_TICKET = """
    Hi, I set up my account last week but I can't log in anymore. I tried resetting
    my password but the email never arrives. I also tried a different browser. Nothing works.
    """

    # Baseline: ask for a single classification.
    baseline_5 = chat(
        system="You are a support ticket classifier. Classify the issue.",
        user=f"Ticket:\n{SUPPORT_TICKET}",
    )

    # Verbalized sampling: ask for several ranked hypotheses with confidence scores.
    verbalized = chat(
        system=(
            "You are a support ticket classifier.\n"
            "For each ticket, generate 3 distinct hypotheses about the root cause. "
            "For each hypothesis:\n"
            " - State the category (Authentication, Email Delivery, Account State, Browser/Client, Other)\n"
            " - Describe the specific failure mode\n"
            " - Assign a confidence score from 0.0 to 1.0\n"
            " - State what additional information would confirm or rule it out\n\n"
            "Order hypotheses by confidence (highest first). "
            "Then provide a recommended first action for the support agent."
        ),
        user=f"Ticket:\n{SUPPORT_TICKET}",
    )

    divider("Baseline (single answer)")
    print(baseline_5)

    divider("Verbalized sampling (multiple hypotheses + confidence)")
    print(verbalized)
