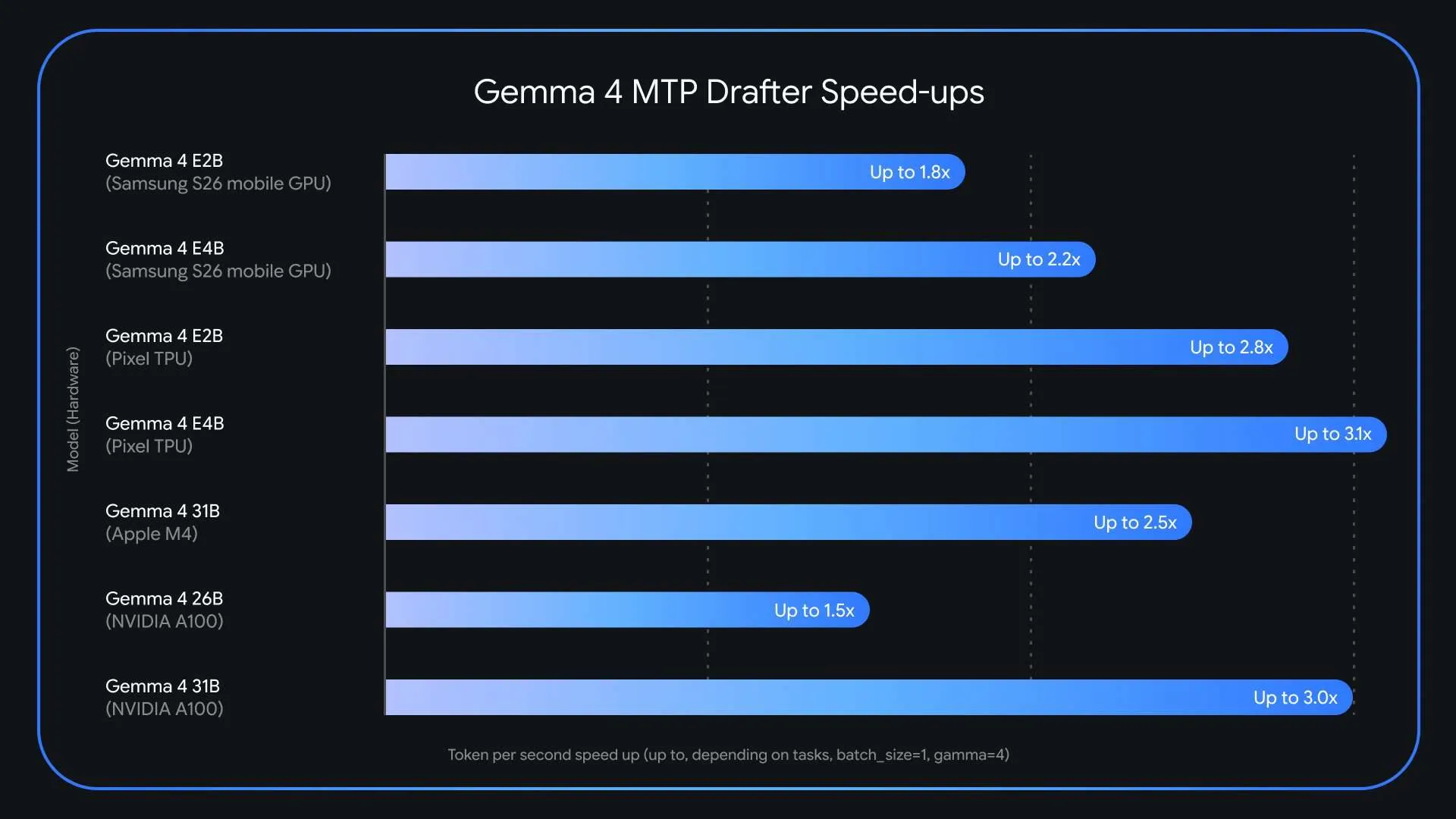

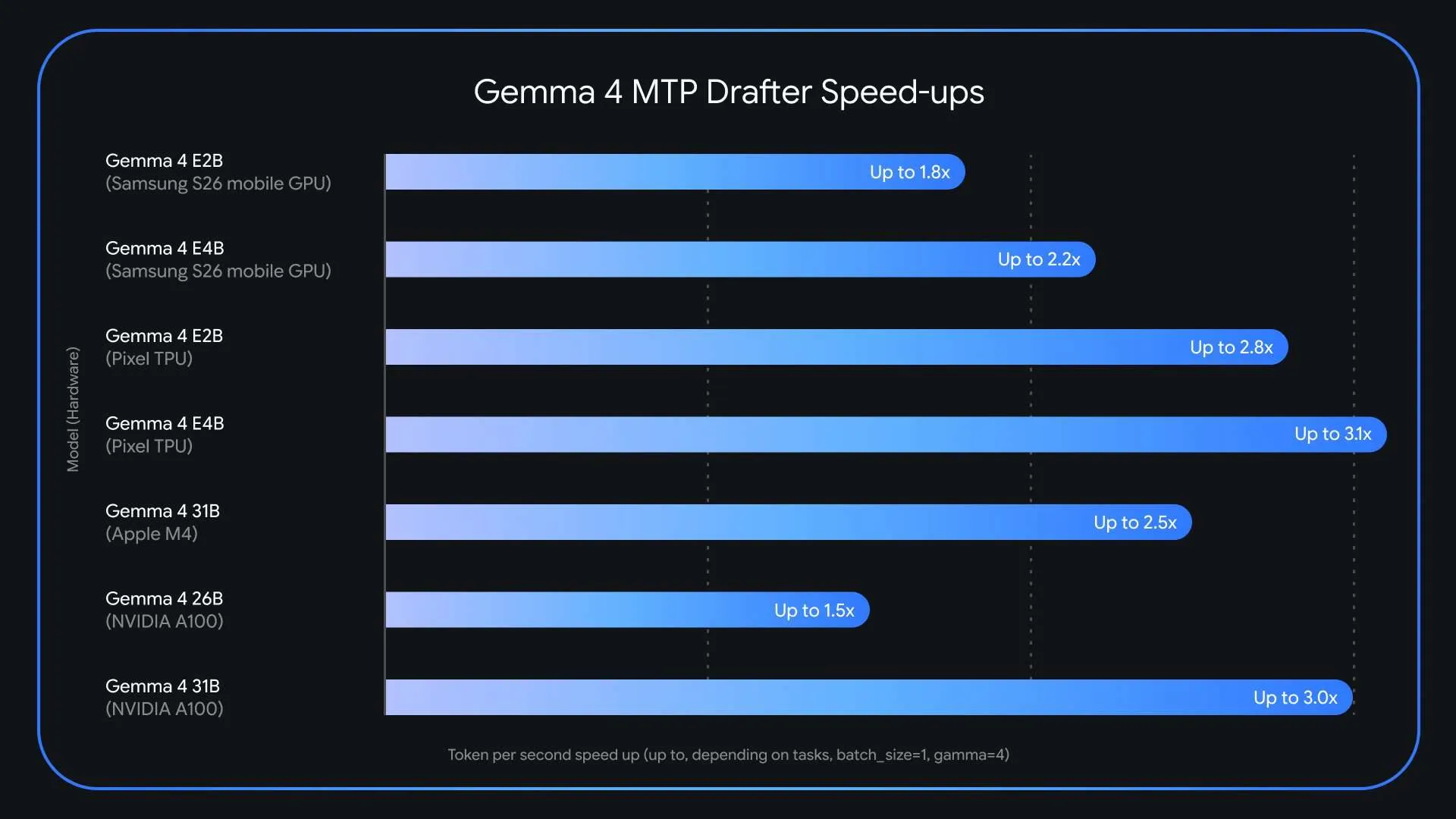

Large language models have become incredibly powerful, but let’s be honest: their inference speed is still a huge headache for anyone trying to use them in production. Google has just released Multi-Token Prediction (MTP) drafters for the Gemma 4 model family. The drafters use a specialized speculative decoding architecture that can deliver up to a 3x speedup in inference time, all without sacrificing output quality or reasoning accuracy. The release comes just weeks after Gemma 4 surpassed 60 million downloads and directly targets one of the most persistent weaknesses in large language model deployment: a memory-bandwidth bottleneck that slows token generation regardless of hardware capability.

Why is LLM inference slow?

Today’s large language models are autoregressive: they produce exactly one token at a time, sequentially. Generating each token requires loading billions of model parameters from VRAM (video random-access memory) into the compute units. This makes decoding memory-bandwidth-bound: the bottleneck is not the raw compute power of the GPU or processor, but the speed at which data can be moved from memory to the compute units.
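
To see why bandwidth rather than compute sets the pace, a back-of-the-envelope estimate helps. The sketch below assumes a 31B-parameter model stored in bf16 (2 bytes per parameter) and roughly 2 TB/s of memory bandwidth; both figures are illustrative assumptions, not measurements from the source. If every weight has to be read once per generated token at batch size 1, bandwidth divided by bytes per token gives a ceiling on decode speed.

```python
def max_decode_tokens_per_sec(num_params: float, bytes_per_param: float,
                              bandwidth_bytes_per_sec: float) -> float:
    """Bandwidth-bound ceiling on batch-1 decode speed.

    Assumes every weight is streamed from memory once per generated token and
    ignores KV-cache traffic and compute time, so real throughput is lower.
    """
    bytes_per_token = num_params * bytes_per_param
    return bandwidth_bytes_per_sec / bytes_per_token

# Illustrative assumptions (not figures from the source): 31B parameters in
# bf16 (2 bytes each) read over a ~2 TB/s memory bus.
rate = max_decode_tokens_per_sec(31e9, 2, 2e12)
print(f"{rate:.1f} tokens/s")  # 32.3 tokens/s
```

Doubling the processor’s arithmetic throughput would not move this ceiling; only moving fewer bytes per token, or producing more tokens per full read of the weights, raises it.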

The result is a significant latency bottleneck: compute sits underutilized while the system is busy moving data. What makes this particularly inefficient is that the model spends just as much computation on a trivially predictable token, such as “words” after “Actions speak louder than…”, as it does on a complex logical inference. Standard autoregressive decoding has no mechanism to exploit how easy or hard the next token is to predict.
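
A toy loop makes the sequential dependency concrete. In this sketch, `CountingModel` is a hypothetical stand-in rather than a real model API: each call counts as one full forward pass, every new token needs its own call, and the trivially predictable “words” costs exactly as much as any other token.

```python
from typing import Callable, List, Sequence

class CountingModel:
    """Hypothetical stand-in for an LLM: every call is one full forward pass."""
    def __init__(self, next_token: Callable[[Sequence[str]], str]) -> None:
        self.next_token = next_token
        self.forward_passes = 0

    def __call__(self, context: Sequence[str]) -> str:
        # A real model would stream all of its weights from memory here,
        # no matter how predictable the next token is.
        self.forward_passes += 1
        return self.next_token(context)

def autoregressive_decode(model: CountingModel, prompt: List[str], n_tokens: int) -> List[str]:
    out = list(prompt)
    for _ in range(n_tokens):
        out.append(model(out))  # each step depends on the token just produced
    return out[len(prompt):]

PHRASE = "actions speak louder than words".split()
model = CountingModel(lambda ctx: PHRASE[len(ctx) % len(PHRASE)])
tokens = autoregressive_decode(model, PHRASE[:4], 1)
print(tokens, model.forward_passes)  # ['words'] 1
```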

What is speculative decoding?

Speculative decoding is the core technique on which the Gemma 4 MTP drafters are built. It decouples token generation from verification by pairing two models: a lightweight draft model and a heavy target model.

Here’s how the pipeline works in practice. The small, fast draft model proposes several future tokens in quick succession (a “draft” sequence) in less time than a large target model (e.g., Gemma 4 31B) takes to process even a single token. The target model then verifies all of these proposed tokens in parallel in a single forward pass. If the target model agrees with the draft, it accepts the entire sequence and even generates one additional token of its own in the same pass. The application can therefore output the full drafted sequence plus one extra token in roughly the same wall-clock time it would normally take to generate a single token.
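
The draft, verify, and accept loop described above can be sketched in a few lines. This is a minimal greedy version under stated assumptions: `toy_target` and `toy_draft` are hypothetical position-based stand-ins, not Gemma models, and the target’s single parallel verification pass is simulated with k+1 calls that are counted as one pass. It is an illustration of the general technique, not Google’s implementation.

```python
from typing import Callable, List, Sequence, Tuple

Model = Callable[[Sequence[str]], str]  # context -> greedy (argmax) next token

def greedy_decode(model: Model, prompt: List[str], n_tokens: int) -> List[str]:
    """Baseline: one target forward pass per generated token."""
    out = list(prompt)
    for _ in range(n_tokens):
        out.append(model(out))
    return out[len(prompt):]

def speculative_decode(target: Model, draft: Model, prompt: List[str],
                       n_tokens: int, k: int = 4) -> Tuple[List[str], int]:
    """Greedy speculative decoding; returns (tokens, target verification passes)."""
    out = list(prompt)
    target_passes = 0
    while len(out) - len(prompt) < n_tokens:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[str] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # 2. The target scores all k+1 positions. A real system gets these from
        #    ONE batched forward pass; here that pass is simulated by k+1 calls.
        checks = [target(out + proposal[:i]) for i in range(k + 1)]
        target_passes += 1
        # 3. Keep the longest agreeing prefix, then append the target's own
        #    token: a correction on mismatch, or a bonus token if all k agree.
        n_ok = 0
        while n_ok < k and proposal[n_ok] == checks[n_ok]:
            n_ok += 1
        out += proposal[:n_ok] + [checks[n_ok]]
    return out[len(prompt):len(prompt) + n_tokens], target_passes

SENTENCE = "actions speak louder than words and deeds matter more than talk".split()

def toy_target(ctx: Sequence[str]) -> str:
    # Hypothetical target: predicts purely from position in SENTENCE.
    return SENTENCE[len(ctx) % len(SENTENCE)]

def toy_draft(ctx: Sequence[str]) -> str:
    # Hypothetical draft: agrees with the target except at every 5th position.
    return "???" if len(ctx) % 5 == 4 else toy_target(ctx)

prompt = SENTENCE[:4]
tokens, passes = speculative_decode(toy_target, toy_draft, prompt, n_tokens=8)
assert tokens == greedy_decode(toy_target, prompt, 8)  # identical output
print(len(tokens), passes)  # 8 3
```

Eight tokens come out of three target passes instead of eight, and because every emitted token is either confirmed or supplied by the target, the result matches plain greedy decoding exactly. The speedup depends on how often the drafter agrees with the target.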

Because the base Gemma 4 model retains the final verification step, the output is identical, token for token, to what the target model would have produced on its own. There is no quality trade-off: this is lossless acceleration.
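
The token-for-token guarantee is easiest to see with greedy decoding. When tokens are sampled instead, the standard rule from the speculative sampling literature accepts a drafted token x with probability min(1, p(x)/q(x)), where p is the target’s distribution and q the drafter’s, and on rejection resamples from the normalized residual max(0, p - q). The source does not say which acceptance rule Gemma 4 uses, so treat this as a sketch of the general technique. The function below computes the exact distribution of the emitted token under that rule and shows that it equals p, meaning the output matches the target model in distribution.

```python
from typing import List

def effective_distribution(p: List[float], q: List[float]) -> List[float]:
    """Distribution of the token emitted by one speculative-sampling step.

    p: target probabilities, q: draft probabilities (same vocabulary order).
    """
    # Draft token x is proposed with prob q[x] and accepted with prob
    # min(1, p[x]/q[x]), so acceptance contributes min(p[x], q[x]).
    accepted = [min(px, qx) for px, qx in zip(p, q)]
    reject_prob = 1.0 - sum(accepted)
    # On rejection, resample from the normalized residual max(0, p - q).
    residual = [max(0.0, px - qx) for px, qx in zip(p, q)]
    z = sum(residual)
    if z == 0:  # p == q, so nothing is ever rejected
        return accepted
    return [a + reject_prob * r / z for a, r in zip(accepted, residual)]

p = [0.6, 0.3, 0.1]  # toy target distribution
q = [0.2, 0.5, 0.3]  # toy draft distribution
print([round(x, 6) for x in effective_distribution(p, q)])  # [0.6, 0.3, 0.1]
```

The rejection probability equals the total residual mass, so acceptance plus correction adds back exactly what the drafter got wrong; a poor drafter costs speed, never quality.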

MTP: What’s new in the Gemma 4 Drafter architecture

Google has introduced several architectural improvements that make the Gemma 4 MTP drafters particularly effective. The draft models directly reuse the target model’s activations and share its key-value (KV) cache. The KV cache is a standard transformer-inference optimization that stores intermediate attention computations (the keys and values of past tokens) so they do not need to be recomputed at every step. By sharing this cache, the drafter avoids wasting time recomputing context that the larger target model has already processed.
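
A small single-head attention sketch shows what a KV cache saves and what sharing it means. The projections `project_key` and `project_value` are hypothetical stand-ins for learned weight matrices, the query is simply the token embedding for brevity, and the drafter query is assumed to live in the same space as the target’s keys. The source does not describe how Gemma 4’s drafter actually consumes the target’s cache, so this only illustrates the general mechanism.

```python
import math
from typing import List

Vec = List[float]

def project_key(x: Vec) -> Vec:
    # Hypothetical stand-in for a learned key projection W_k @ x.
    return [0.5 * xi for xi in x]

def project_value(x: Vec) -> Vec:
    # Hypothetical stand-in for a learned value projection W_v @ x.
    return [2.0 * xi + 1.0 for xi in x]

def attend(query: Vec, keys: List[Vec], values: List[Vec]) -> Vec:
    """Single-head scaled dot-product attention (softmax over keys)."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]

class KVCache:
    """Keys/values of every processed token, computed once and reused."""
    def __init__(self) -> None:
        self.keys: List[Vec] = []
        self.values: List[Vec] = []
        self.projections = 0  # how many K/V projections were computed

    def add_token(self, x: Vec) -> None:
        # Only the NEW token is projected; earlier entries are already stored.
        self.keys.append(project_key(x))
        self.values.append(project_value(x))
        self.projections += 2

def attend_without_cache(query: Vec, tokens: List[Vec]) -> Vec:
    # Recomputes K/V for the whole context on every call.
    return attend(query, [project_key(t) for t in tokens],
                  [project_value(t) for t in tokens])

tokens = [[0.1 * i, 0.2 * i, -0.05 * i] for i in range(1, 11)]  # toy embeddings
cache = KVCache()
for t, x in enumerate(tokens):
    cache.add_token(x)  # the target model fills the cache as it decodes
    cached = attend(x, cache.keys, cache.values)
    recomputed = attend_without_cache(x, tokens[: t + 1])
    assert all(abs(a - b) < 1e-12 for a, b in zip(cached, recomputed))

# A drafter sharing the cache attends over the target's stored K/V directly,
# so it adds no projections for context the target already processed.
draft_query = [0.3, -0.1, 0.2]
draft_out = attend(draft_query, cache.keys, cache.values)
print(cache.projections)  # 20 (2 per token); recomputing each step would need 110
```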

Additionally, for the E2B and E4B edge models (the smaller Gemma 4 variants designed to run on mobile and edge devices), Google has implemented an efficient clustering technique in the embedder. This specifically addresses a prominent bottleneck on edge devices: the final logit computation, which maps the model’s internal representations to probabilities over the vocabulary. The clustering technique speeds up this step, improving overall generation speed on hardware-constrained devices.
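
The source does not spell out how this technique works. As a rough sketch of one general way that grouping the vocabulary can cut the cost of the final logit step, assume the output embeddings are partitioned into clusters with centroids (a hypothetical setup, not Gemma 4’s documented design). A two-stage lookup scores only the centroids, then computes exact logits only for tokens in the best-scoring clusters, instead of one dot product per vocabulary entry.

```python
from typing import Dict, List, Tuple

Vec = List[float]

def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))

def clustered_top_token(hidden: Vec, centroids: List[Vec], clusters: Dict[int, List[int]],
                        embeddings: List[Vec], top_c: int = 1) -> Tuple[int, int]:
    """Two-stage clustered logit lookup; returns (best token id, dot products used)."""
    # Stage 1: score only the cluster centroids (far fewer than vocabulary entries).
    ranked = sorted(range(len(centroids)), key=lambda c: dot(hidden, centroids[c]),
                    reverse=True)
    work = len(centroids)
    # Stage 2: exact logits only for tokens inside the top_c clusters.
    best_id, best_logit = -1, float("-inf")
    for c in ranked[:top_c]:
        for tok in clusters[c]:
            logit = dot(hidden, embeddings[tok])
            work += 1
            if logit > best_logit:
                best_id, best_logit = tok, logit
    return best_id, work

# Hypothetical 12-token vocabulary in 3 clusters of 4 (2-D embeddings for brevity).
centroids = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
embeddings = [[1.0, 0.1], [0.9, -0.1], [1.1, 0.0], [0.95, 0.05],
              [0.1, 1.0], [-0.1, 0.9], [0.0, 1.2], [0.05, 0.95],
              [-1.0, 0.1], [-0.9, 0.0], [-1.1, -0.1], [-0.95, 0.05]]
clusters = {0: [0, 1, 2, 3], 1: [4, 5, 6, 7], 2: [8, 9, 10, 11]}
hidden = [0.2, 1.0]  # toy final hidden state

best, work = clustered_top_token(hidden, centroids, clusters, embeddings)
print(best, work)  # 6 7  (a full pass would need 12 dot products)
```

The trade-off is that a token outside the selected clusters can never win, so this kind of shortcut is approximate unless enough clusters are searched; how Gemma 4 handles that is not covered by the source.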

On the hardware side, the Gemma 4 26B Mixture-of-Experts (MoE) model poses unique routing challenges on Apple Silicon at a batch size of 1. However, increasing the batch size to between 4 and 8 unlocks up to a 2.2x speedup locally. Similar batch-size-dependent gains were observed on NVIDIA A100 GPUs.

Key takeaways

- Google has released Multi-Token Prediction (MTP) drafters for the Gemma 4 family of models, providing up to 3x faster inference without any degradation in output quality or reasoning accuracy.

- The MTP drafters use a speculative decoding architecture that pairs a lightweight draft model with a heavy target model: the drafter proposes several tokens at once, and the target model verifies them all in a single forward pass, breaking the one-token-at-a-time bottleneck.

- The draft models share the target model’s KV cache and activations, and for the E2B and E4B edge models, an efficient clustering technique in the embedder addresses the final logit-computation bottleneck, enabling faster generation even on memory-constrained devices.

- The MTP drafters are now available under the Apache 2.0 license, with model weights on Hugging Face and Kaggle.
