Mistral AI is building one of the most practical coding agent ecosystems in the open-weights AI space, and it is now shipping its most significant infrastructure upgrade to date. The Mistral team announced remote agents in Vibe, its coding agent platform, along with a public preview of Mistral Medium 3.5 – a new dense 128B model that now serves as the default model in both Vibe and Le Chat, Mistral’s consumer assistant.

What is Vibe, and why does it matter?

If you haven’t used it yet, Mistral Vibe is a coding agent accessible through a command-line interface (CLI) that lets an AI model work through software tasks for you – writing code, refactoring modules, creating tests, investigating CI failures, and more. Think of it as a junior developer who never gets tired and can work through your code base.

Until now, Vibe sessions ran locally, meaning the agent was tied to your laptop and terminal. That changes with this release.

Remote agents: the agent runs while you’re away

Coding sessions can now work through long tasks while you’re away. Many sessions can run in parallel, and you are no longer the bottleneck at every step the agent takes.

This is a major workflow shift. Instead of babysitting a coding session at your terminal, you can start a task and let the cloud handle the rest. You can start cloud agents from the Mistral Vibe CLI or from Le Chat. While a session is running, you can inspect what the agent is doing: file diffs, tool calls, progress statuses, and questions surface as it goes.

One particularly useful feature for developers already in the middle of a session: an ongoing local CLI session can be moved to the cloud when you want to leave it running, carrying over session history, task state, and approvals. You don’t lose your place – you just move the work off your device.

Each coding session runs in its own isolated sandbox, which covers even broad edits and package installs. When the work is done, the agent can open a pull request on GitHub and notify you, so you review the result instead of every keystroke that produced it.

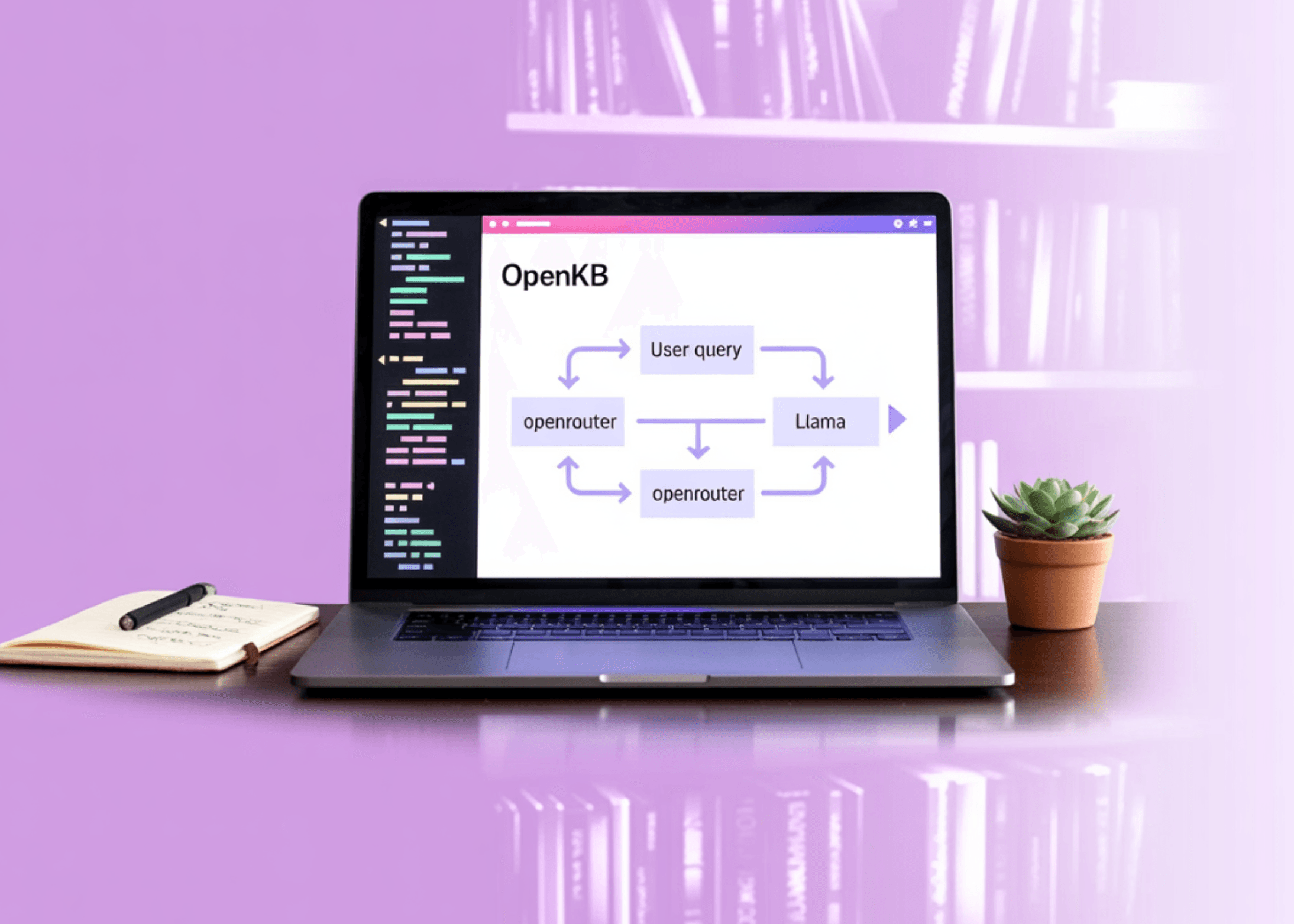

It’s also helpful to understand how Vibe connects to Le Chat. Mistral uses Workflows in Mistral Studio to bring Mistral Vibe to Le Chat – an orchestration layer originally built for its internal coding environments, then offered to enterprise clients, and now open to everyone. This means that Le Chat’s remote coding agent is not a standalone feature – it’s built on top of Mistral’s orchestration layer, which is useful context if you’re thinking about how to design similar agent systems yourself.

On the integration side, Vibe connects to GitHub for code and pull requests, Linear and Jira for issues, Sentry for incidents, and apps like Slack or Teams for reporting.

Mistral Medium 3.5: The model behind everything

None of this would be practical without a capable underlying model. The newly released model is Mistral Medium 3.5. The Mistral team describes it as its first flagship merged model.

It is a dense 128B model with a 256k-token context window, and it handles instruction following, reasoning, and coding in a single set of weights. For context, a 256k context window means the model can process roughly 200,000 words in a single pass – long enough to reason over a large codebase at once.
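As a rough sanity check on that word count, the conversion from tokens to words can be sketched as below. The 0.75 words-per-token ratio is a common rule of thumb for English text, not a number from Mistral, and reading "256k" as 256,000 tokens is also an assumption. Real ratios vary with the tokenizer and the content, and source code usually tokenizes less efficiently than prose.

```python
# Back-of-the-envelope conversion from a token budget to an English word count.
# ASSUMPTION: ~0.75 words per token (rule of thumb, not a Mistral figure).
WORDS_PER_TOKEN = 0.75


def approx_words(context_tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a context window of the given size."""
    return int(context_tokens * words_per_token)


# ASSUMPTION: "256k" is read here as 256,000 tokens.
estimate = approx_words(256_000)
print(estimate)  # 192000
```

Under these assumptions the estimate lands at about 192,000 words, which is consistent with the "roughly 200,000 words" figure.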

The model is also multimodal. The Mistral team trained the vision encoder from scratch to handle variable image sizes and aspect ratios – a notable architectural choice. Many vision language models reuse pre-trained encoders such as CLIP, so building this component from scratch suggests Mistral prioritizes flexibility in how the model handles real-world image inputs rather than adhering to fixed resolution assumptions.

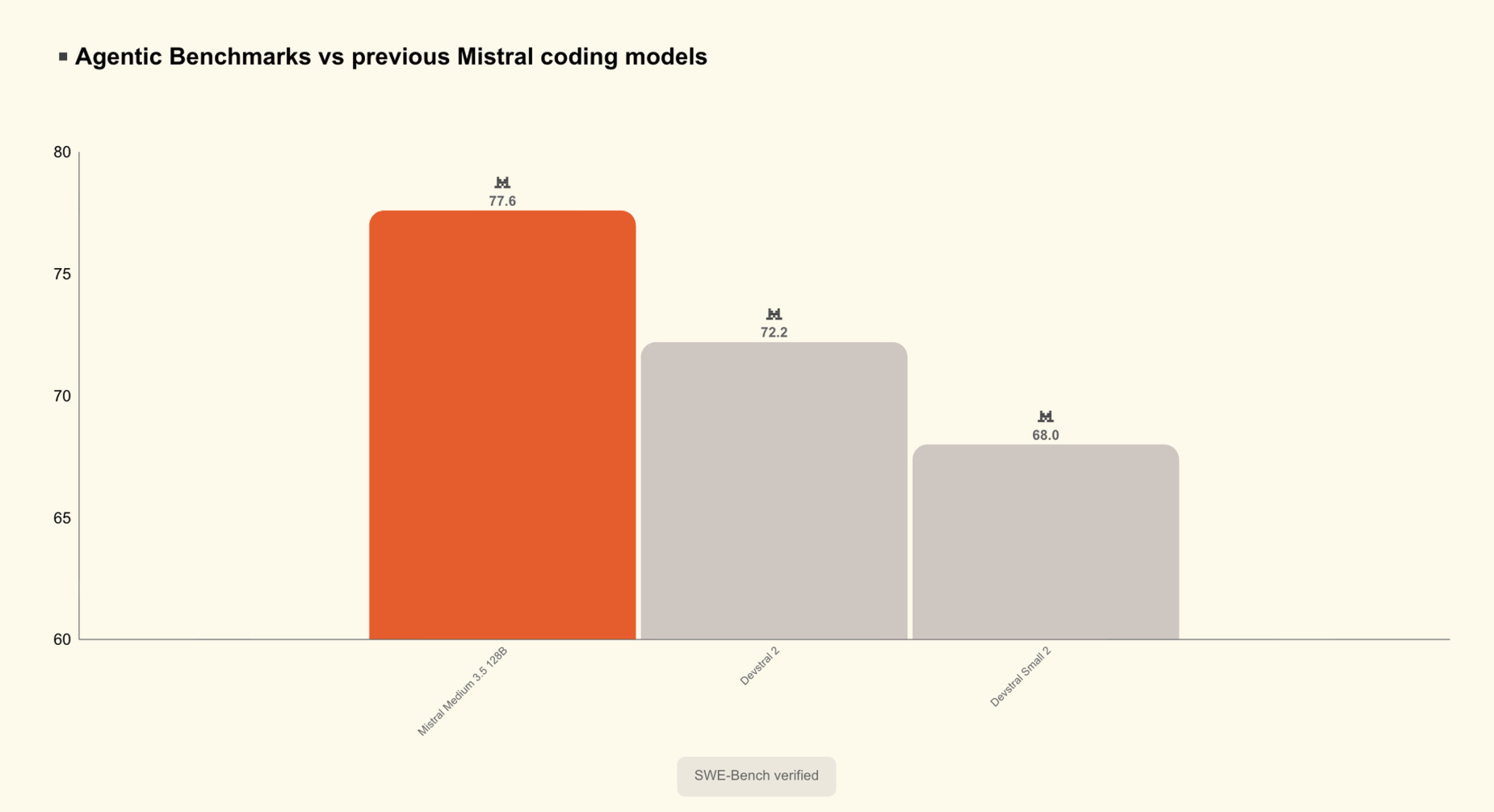

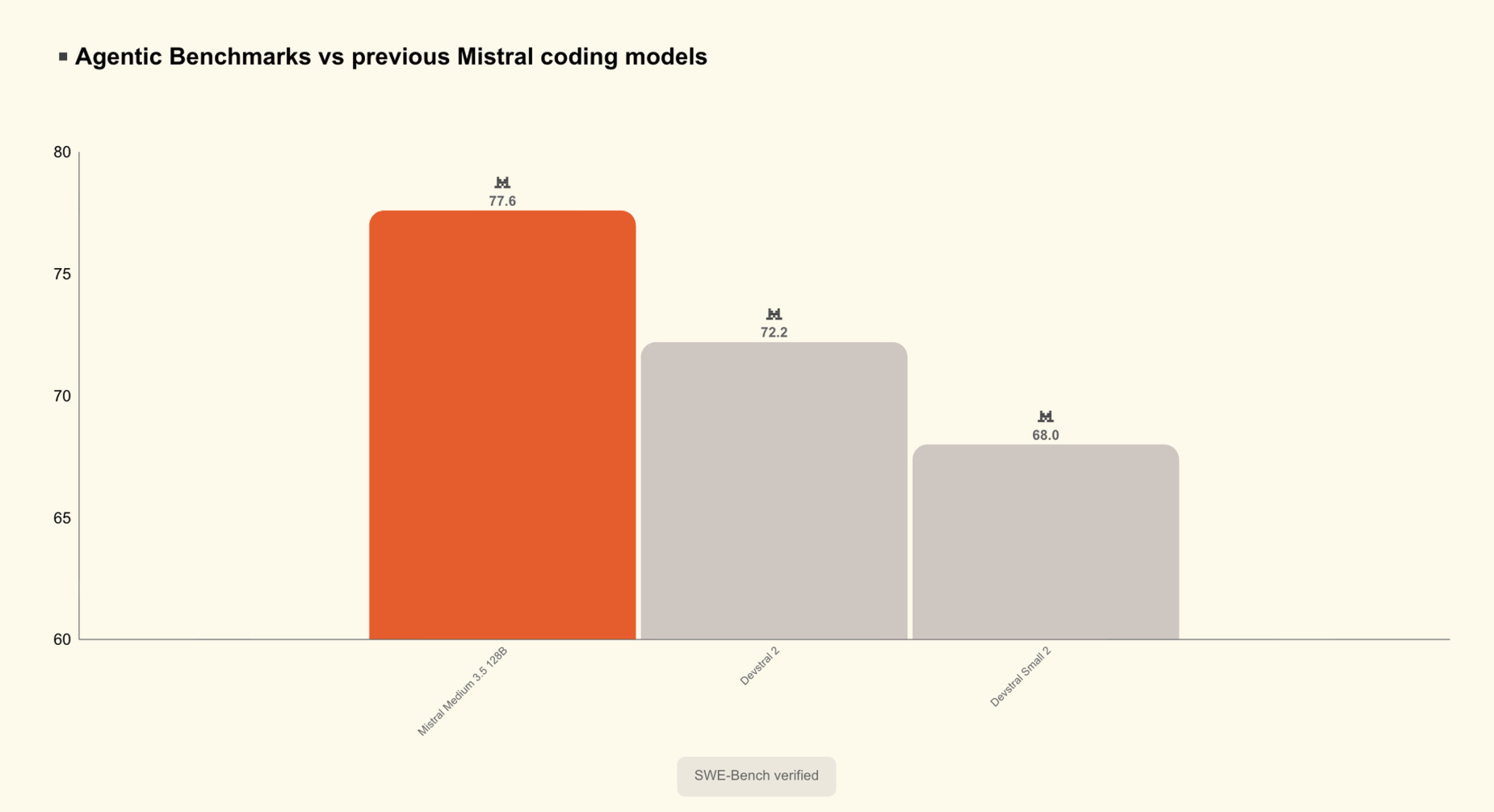

Mistral Medium 3.5 scores 77.6% on SWE-Bench Verified, ahead of Devstral 2 and models like Qwen3.5 397B A17B. SWE-Bench Verified is a benchmark that tests whether a model can resolve real-world GitHub issues from popular open source repositories – one of the more reliable proxies for practical software engineering capability. The model also scores 91.4 on τ³-Telecom, which points to strong agentic capabilities.

One particularly interesting design choice is that reasoning effort is now configurable per request, so the same model can answer a quick chat message or work through a complex agentic run. This matters for developers integrating the model via API – you can reduce compute for simple lookups and dial it up for multi-step reasoning tasks, without switching models.
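To make the per-request effort setting concrete, here is a minimal sketch of how a client might choose it. Everything named here is an assumption for illustration: the `reasoning_effort` field, the `"low"`/`"high"` values, and the placeholder model ID do not come from the article or from Mistral's API reference, so check the official documentation for the real parameter.

```python
from typing import Literal, TypedDict

Effort = Literal["low", "high"]  # hypothetical effort levels


class ChatRequest(TypedDict):
    model: str
    messages: list[dict[str, str]]
    reasoning_effort: Effort  # hypothetical field name, not a confirmed API parameter


def build_request(
    prompt: str,
    multi_step: bool,
    model: str = "MODEL_ID_PLACEHOLDER",  # placeholder, not a real model ID
) -> ChatRequest:
    """Build a request payload, spending more effort only on multi-step tasks."""
    effort: Effort = "high" if multi_step else "low"
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,
    }


quick = build_request("What does HTTP 404 mean?", multi_step=False)
deep = build_request("Refactor the billing module and fix the failing tests.", multi_step=True)
print(quick["reasoning_effort"], deep["reasoning_effort"])  # low high
```

The point of the design is that the routing decision lives in the request, not in the choice of model, so one deployment can serve both cheap and expensive calls.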

The model is built for long-running tasks: calling multiple tools reliably and producing structured output that downstream code can consume.
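Structured output is only useful if the consuming code validates it before acting on it. The sketch below shows one way to do that with the standard library. The `PatchPlan` schema and its `file` and `summary` fields are hypothetical, invented for illustration rather than taken from any Mistral format.

```python
import json
from dataclasses import dataclass


@dataclass
class PatchPlan:
    """Hypothetical schema for a model response describing one planned edit."""
    file: str
    summary: str


def parse_patch_plan(raw: str) -> PatchPlan:
    """Parse and validate the model's JSON output before other code relies on it."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = {"file", "summary"} - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if not all(isinstance(data[key], str) for key in ("file", "summary")):
        raise ValueError("'file' and 'summary' must be strings")
    return PatchPlan(file=data["file"], summary=data["summary"])
```

For example, `parse_patch_plan('{"file": "app.py", "summary": "fix import"}')` returns a `PatchPlan`, while a response with a missing field raises `ValueError` instead of failing later in the pipeline.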

Work mode in Le Chat: a new agent layer

In addition to the coding agent upgrades, Mistral is also shipping Work mode in Le Chat: a new agent mode for complex, multi-step tasks, powered by a new harness and Mistral Medium 3.5. The agent becomes the execution backend for the assistant itself, so Le Chat can read, write, use multiple tools at once, and work through multi-step projects until it completes what you asked.

In practice, this means cross-tool workflows such as following up across email, messaging, and calendar; preparing for a meeting with relevant context pulled from multiple sources; or triaging your inbox and creating Jira issues from team discussions.

In Work mode, connectors are turned on by default rather than manually selected, giving the agent access to documents, mailboxes, calendars, and other systems for the rich context it needs to take the right action. This is a significant usability shift from typical chat assistants, where you manually select tools before each session.

Transparency is built in rather than added as an afterthought: every action the agent takes is visible, including each tool call and the reasoning behind it. Le Chat asks for explicit approval – based on your permissions – before proceeding with sensitive tasks like sending a message, writing a document, or modifying data.
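The approval step described above is a common pattern in agent design and can be sketched as a gate in front of tool execution. This is a minimal illustration, not Le Chat's implementation: the action names, the `ToolCall` type, and the callback signatures are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical action names, loosely based on the examples in the text.
SENSITIVE_ACTIONS = {"send_message", "write_document", "modify_data"}


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)


def run_tool_call(
    call: ToolCall,
    execute: Callable[[ToolCall], str],
    ask_user: Callable[[ToolCall], bool],
) -> str:
    """Run a tool call, pausing for explicit approval if the action is sensitive."""
    if call.name in SENSITIVE_ACTIONS and not ask_user(call):
        return "skipped: user declined"
    return execute(call)


# Usage: read-only calls run directly, sensitive ones go through the approval callback.
result = run_tool_call(
    ToolCall("send_message", {"to": "team", "body": "Build is green."}),
    execute=lambda c: f"executed {c.name}",
    ask_user=lambda c: False,  # simulate the user declining
)
print(result)  # skipped: user declined
```

Keeping the gate outside the tool implementations means the list of sensitive actions can follow each user's permissions without touching the tools themselves.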

Key takeaways

Here are the main takeaways:

- Mistral Medium 3.5 is now the default model in both Vibe and Le Chat – A dense 128B model with a 256k context window, it scores 77.6% on SWE-Bench Verified, outperforms Devstral 2 and Qwen3.5 397B A17B, and is available as open weights on Hugging Face.

- Vibe coding agents now run in the cloud – Sessions can be created from the CLI or Le Chat, run asynchronously in isolated sandboxes, and local sessions can be teleported to the cloud without losing session history or task state.

- The new Work mode in Le Chat provides parallel, multi-step execution of agent tasks – Powered by Mistral Medium 3.5, it can work across email, calendar, documents, Jira, and Slack simultaneously, with every tool call and reasoning step visible and explicit approval required before sensitive actions.

- Reasoning effort in Mistral Medium 3.5 is configurable per API request – The same model handles lightweight chat responses and complex long-running agent runs.
