The bottleneck in building better AI models has never been compute alone, it has always been data quality. Meta AI’s RAM (Inference, Alignment, Memory) team is now addressing this bottleneck head-on. Researchers have submitted a meta Automatic dataa framework that uses AI agents in the role of an independent data scientist, tasked with building, evaluating, and improving training and evaluation datasets iteratively – without relying on costly human annotation at every step.

The results, tested on complex scientific reasoning problems, show that this approach not only matches classical synthetic data generation methods, but significantly outperforms them.

Why has creating synthetic data always been so difficult?

To understand what Autodata solves, you need to understand how AI training data is typically generated today.

Most modern AI systems start with human-written data. As the models improved, researchers began to supplement this with… Synthetic data – Data generated by the model itself. Synthetic data are attractive because they can generate rare marginal cases, reduce the cost of manual labeling, and produce more challenging examples than are naturally found in public texts.

The dominant approach to generating synthetic data has been Self-instructions – Require a large language model (LLM) using zero or few snapshot examples to generate new training samples. Self-directed grounding Methods expanded this by basing production on documents and other sources to reduce hallucinations and increase diversity. CoT self-instructions (Chain of Thought Self-Instruction) is taken further by using Chain of Thought thinking during creation to more accurately create more complex tasks. Recently, Self-challenge methods. Allowing the competing agent to interact with the tools before proposing a task and its accompanying evaluation functions – the closest predecessor to what Autodata does.

The problem? None of these approaches give researchers a feedback-based way to actually control or improve data quality iteratively during the generation process itself. You could filter, evolve or enhance the data after the fact – but the generation pipeline remained largely static and single-pass.

Automatic data changes that.

What automatic data actually does

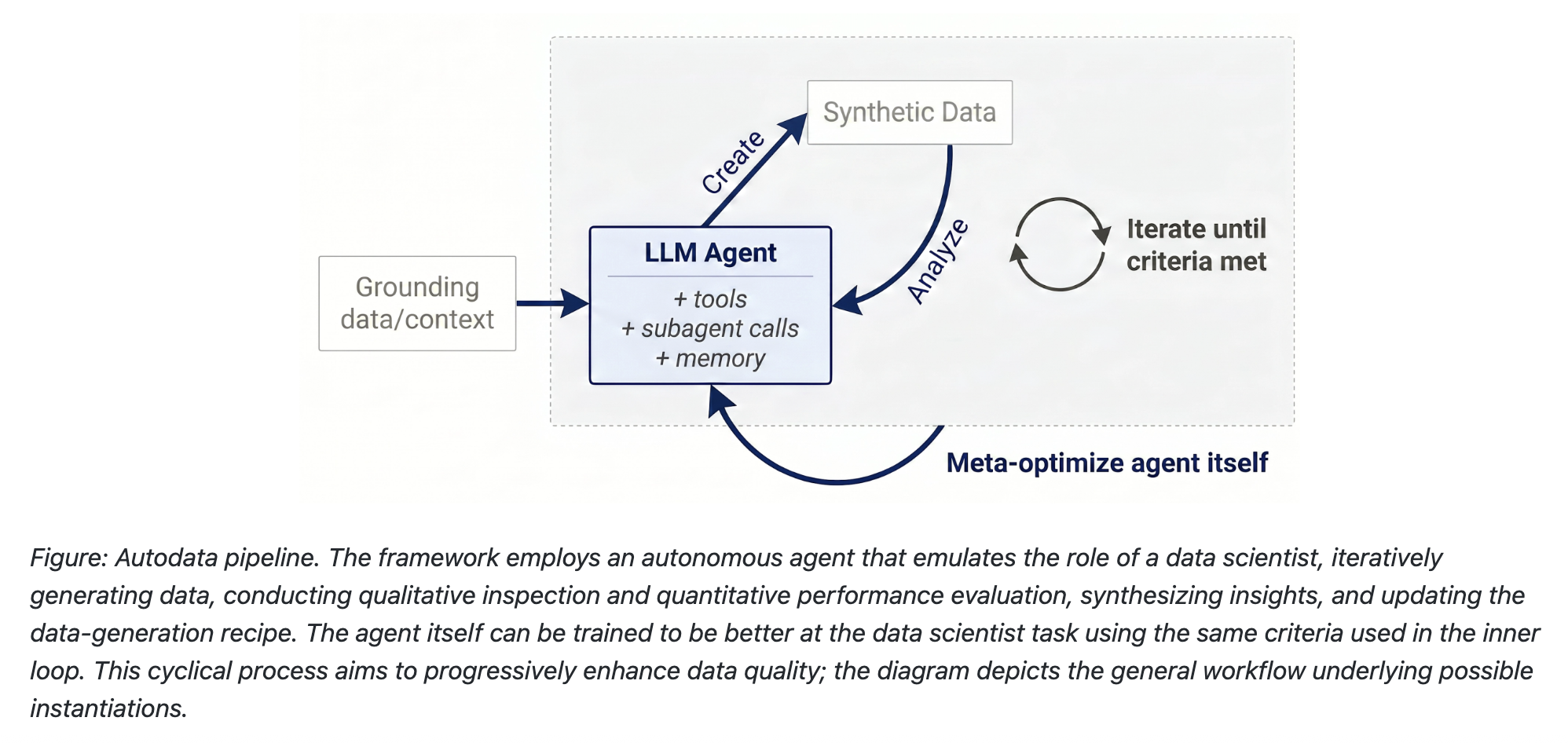

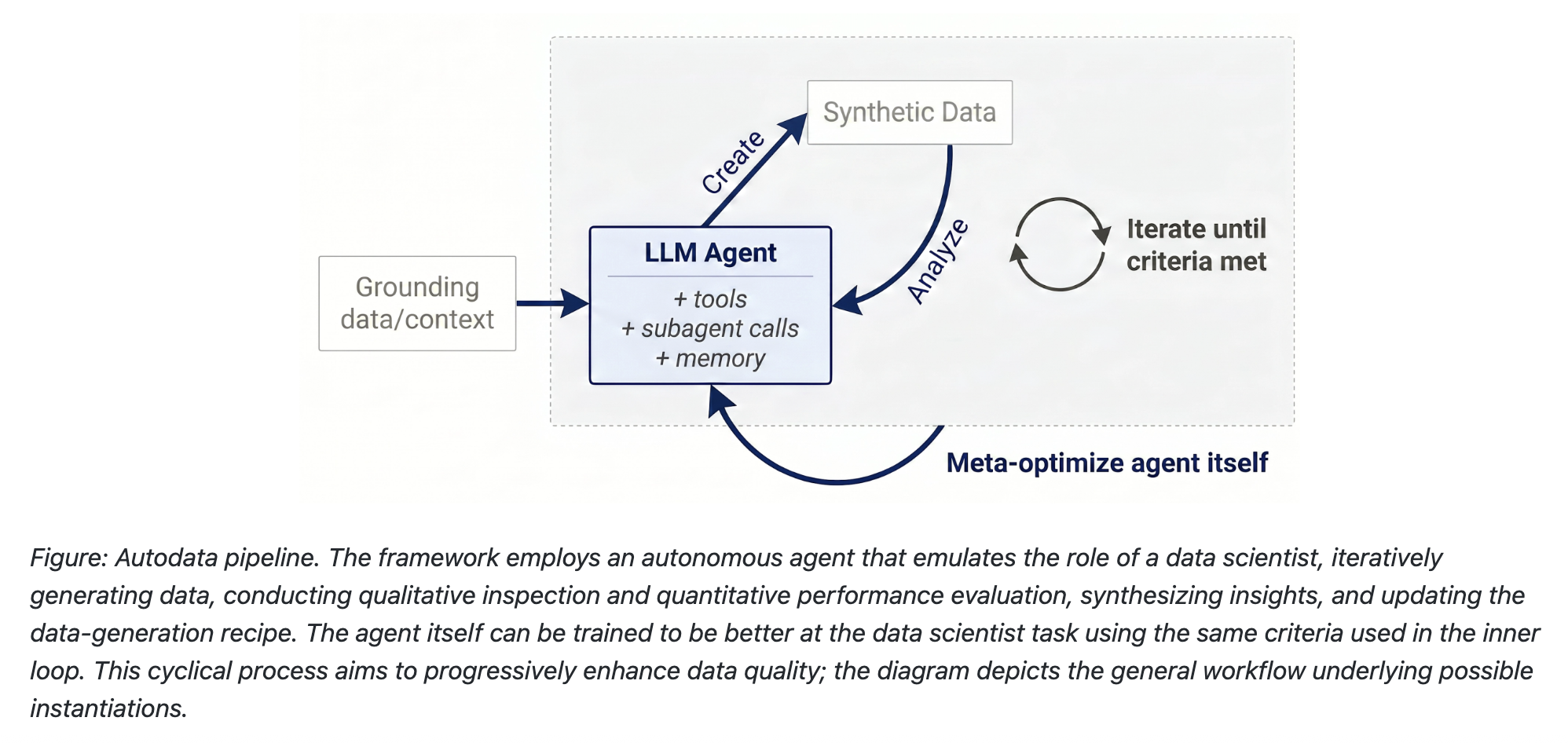

Data automation is a method that allows AI agents to act as data scientists who iteratively build high-quality training and evaluation data. Instead of generating data in a single pipeline, the agent runs a closed-loop pipeline modeled after the way a human data scientist actually works:

- Data creation – The agent builds on the provided source documents (research papers, code, legal text, etc.) and uses the tools and skills learned to create examples for training or evaluation.

- Data analysis -Then the agent checks what he has created: Is this example correct? High quality? Challenging enough? It aggregates lessons learned at the example level, and ultimately at the dataset level (is it diverse? Does it improve the model when used as training data?).

- Repetition – Using these lessons learned, the agent updates its data generation recipe and iterates again to create better data. This continues until the stopping criterion is met.

Proxy data generation provides a way to Transforming incremental inference computation into high-quality model training. The more inference computation you provide to an agent, the better the data it produces-a key idea for practitioners managing computing budgets.

Specific implementation: agent self-direction

The initial creation of Meta is called for automatic data Self-directing agentIts structure is designed around a master coordinator (LLM) that coordinates four specialized sub-agents:

- Challenger LLC – Create a training example (input + response pair) based on a detailed prompt from the master agent

- Weak halal – A smaller, less capable model that is generally expected to fail in the generated example

- Strong halal – A more capable model that is generally expected to succeed

- Investigator/judge – Evaluate whether the output of each solution meets quality standards, using evaluation models created by Challenger LLM

Important design note: The weak and strong solution can be the same LLM operating in different modes. For example, the robust version could be allowed to use increased inference time computation including scaffolding or pooling, as well as access to privileged information – giving practitioners flexibility in how they define capability separation.

Acceptance criteria are precise and multi-conditional. For an example to be accepted into the dataset, All four must have the following:

- The Quality Verifier (QV) should pass the example

weak_avg ≤ 65%andmax_weak ≤ 75%With zero degreesstrong_avg ≥ 60%andstrong_avg andlt; 95%-Ensuring that the question is neither too difficult for everyone nor too easy for the strong solver- gap

strong_avg − weak_avg ≥ 20%

If none of these limits are met, the master agent sends targeted feedback to the challenger and tries again – from a different angle of thought. This loop typically runs several rounds per paper (average 3-5) before producing an acceptable question or exhausting its step budget.

Numbers that matter

The quality gains compared to standard self-paced CoT instruction are measurable and significant.

Under CoT Self-Instruct, both solutions obtained almost identical results – weak at 71.4% and strong at 73.3%, a difference of only 1.9 percentage points – showing that single-answer questions fail to find tasks that are difficult enough for either model. Agent Self-Instruct lowers the weak score to 43.7% while raising the strong score to 77.8%, widening the gap to 34 points. The proxy data generation loop produces questions that specifically reward the capabilities of the stronger model, rather than questions that both models can answer equally well.

The dataset itself was produced by processing over 10,000 CS sheets from the S2ORC (2022+) collection, producing 2,117 QA pairs that met all quality constraints and performance gap requirements.

When Qwen-3.5-4B was then trained with GRPO for approximately one period (batch size 32, learning rate 1e-6) on the Agent Self-Instruct data versus the CoT Self-Instruct data – using Kimi-K2.6 as a reward model to score responses against the generated rubrics – the model trained on the agent data showed a clear advantage on both in-distribution and out-of-distribution test sets.

Meta-optimization: Teaching an agent to be a better data scientist

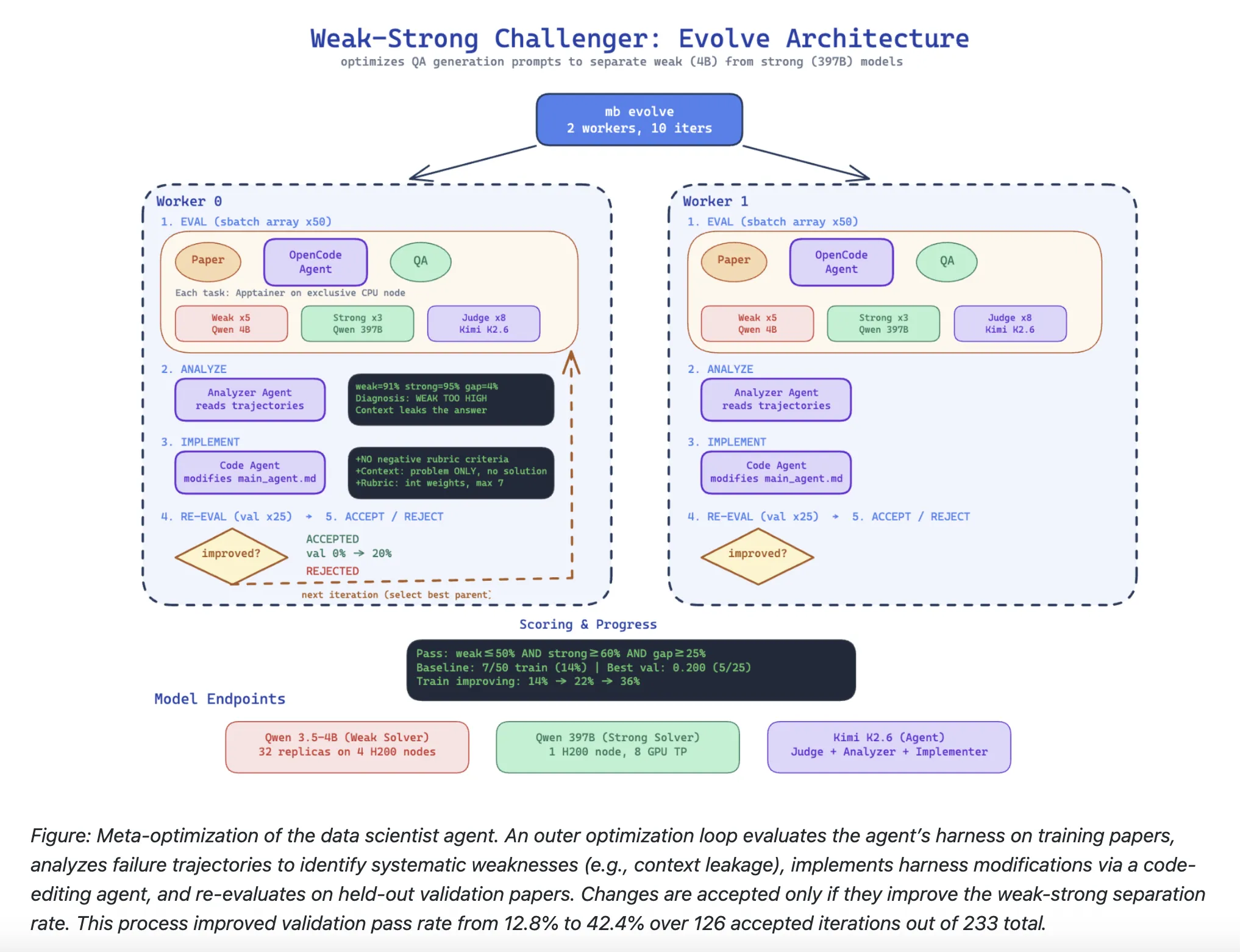

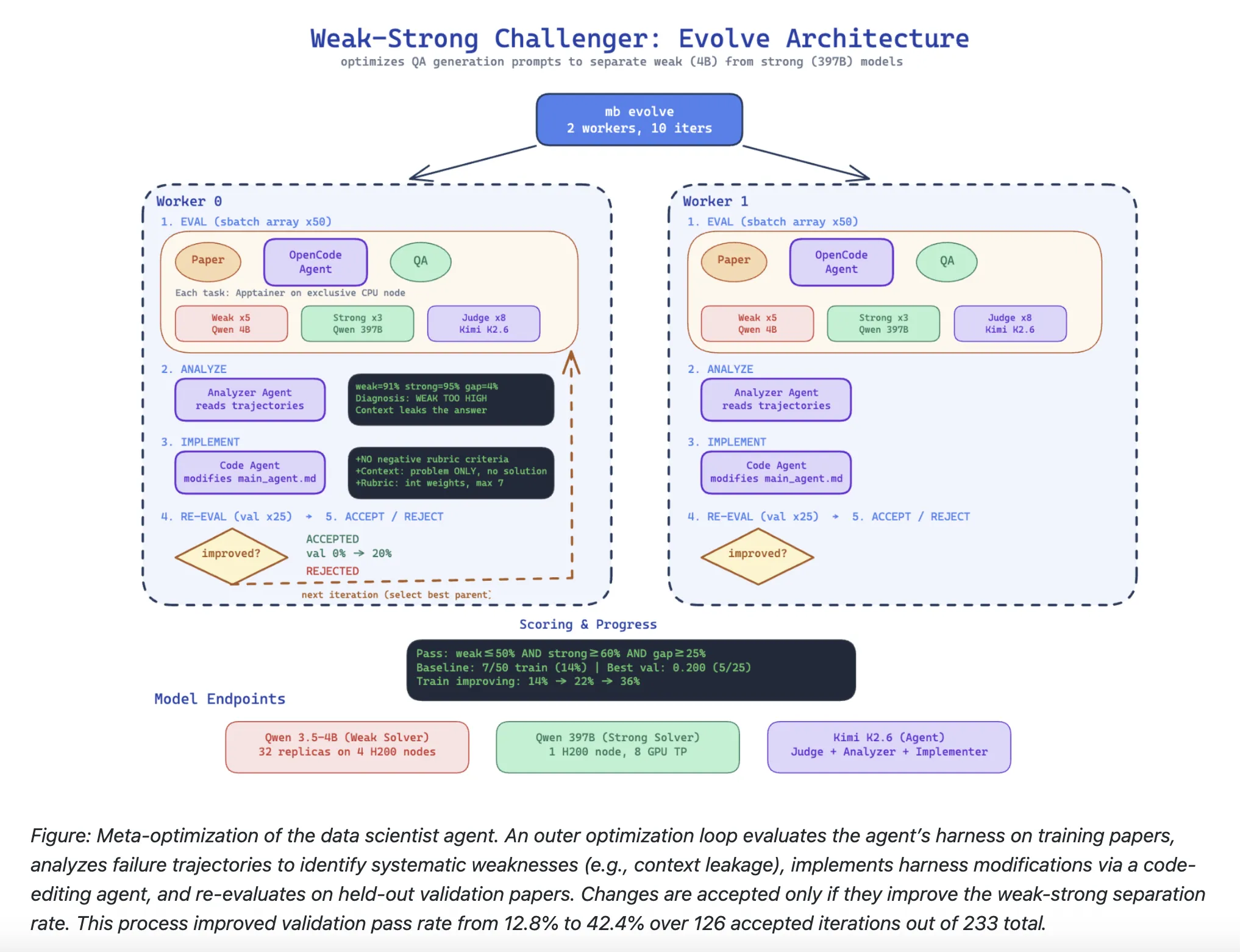

Automatic data goes a deeper level. Beyond the internal data creation loop, the framework supports Meta-optimization Same data scientist agent – using the same inner loop quality standards to optimize the outer loop agent tool (supporting agent code, claims, and evaluation logic).

Using the evolution-based optimization framework, the meta-optimizer performed 233 total iterations, of which 126 were accepted (a mutant belt is added to the set only if its validation score strictly exceeds the original score). The declarative optimizer Kimi-K2.6 was used as an analyzer-reading complete evaluation paths to diagnose systematic failure modes-and an executor, which modified the proxy tool via a code-editing proxy. The setup used 50 training sheets and 25 validation sheets.

Starting with a basic belt that achieves a 12.8% verification success rate, gradually discover the induction optimizer Four automatic key strap improvements:

- Enforce paper insight: Questions should test paper-specific knowledge, not general ML/ML knowledge. A self-test was given: “If the person doing the solution can answer correctly without reading this particular sheet, the question is very easy.”

- Prevent context leaks: Strict rules that require the context to describe only the problem domain and setting, and never the solution proposed in the paper.

- Positive rating title only with a weight limit: The optimizer eliminated negatively weighted evaluation criteria altogether, finding that they historically missed and destroyed the results of robust models without improving discrimination. All criteria now use positive integer weights with a maximum of 7.

- Structured title format: Strict JSON format for evaluation criteria with integer weights, eliminating parsing errors that caused the evaluation to fail in previous iterations.

The progression from 12.8% to 42.4% validated success rate demonstrates that meta-optimization of data scientist agent instructions can significantly improve data quality without the need for manual harness engineering.

verify Technical details here. Also, feel free to follow us on twitter Don’t forget to join us 130k+ ml SubReddit And subscribe to Our newsletter. I am waiting! Are you on telegram? Now you can join us on Telegram too.

Do you need to partner with us to promote your GitHub Repo page, face hug page, product release, webinar, etc.? Contact us