Video foundation models can draw a beautiful frame. They are still very bad at remembering it. Push the camera through a corridor in Wan 2.1 or CogVideoX and walls warp, objects shift, and details disappear, which is evidence that these models fit 2D pixel correlations rather than simulating a coherent 3D scene.

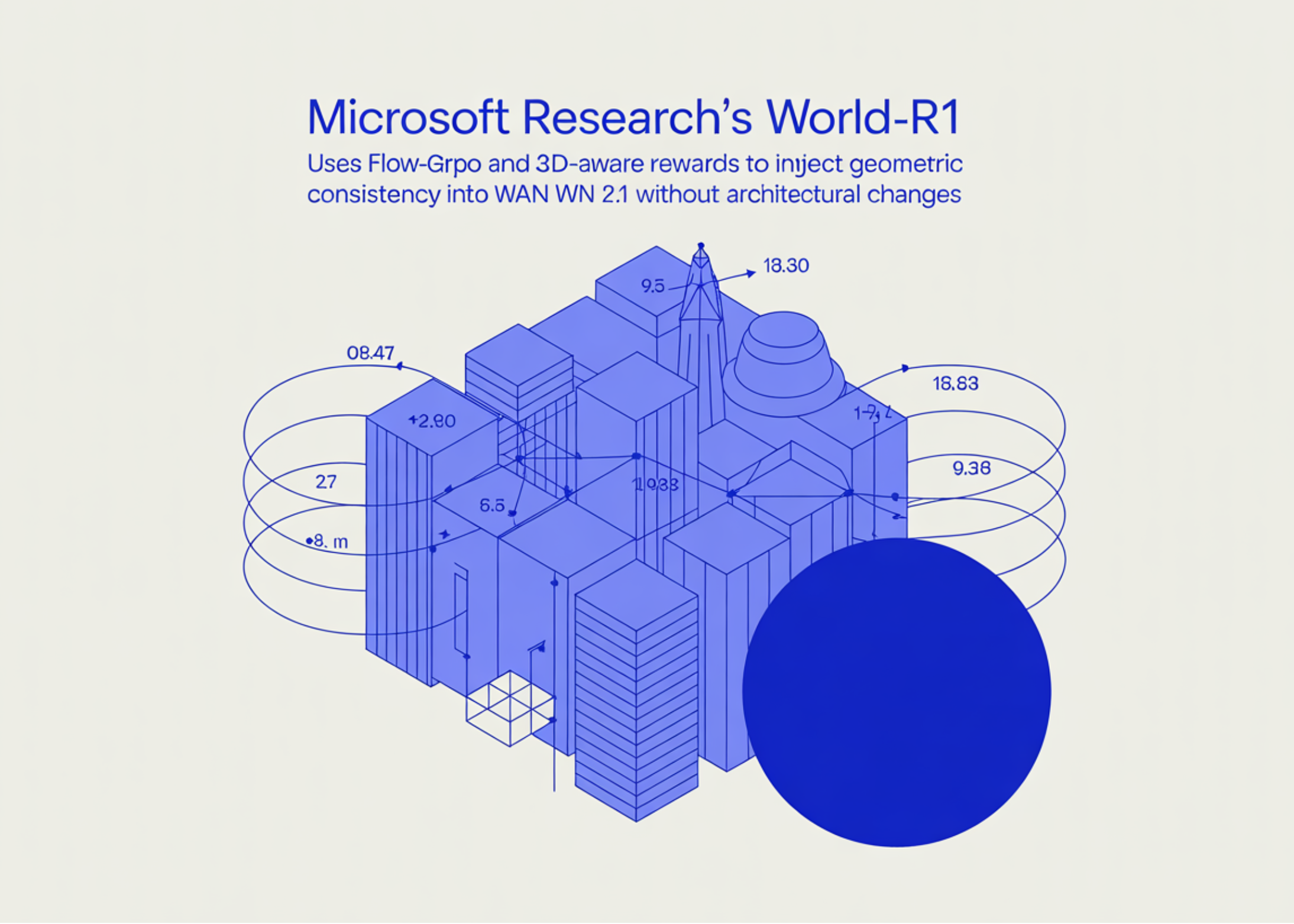

A team of researchers from Microsoft Research and Zhejiang University has presented World-R1, a framework that aligns video generation with 3D constraints through reinforcement learning. The research team builds on a recent finding that video foundation models already encode rich 3D geometric information internally. The task, then, is to elicit that latent knowledge rather than supervise it with expensive 3D assets. World-R1 does this by post-training an existing text-to-video (T2V) model with reinforcement learning, using rewards derived from pre-trained 3D foundation models and a vision-language critic. The base architecture is left unchanged and the inference cost does not change.

Two World-R1 variants have been released: World-R1-Small (based on Wan2.1-T2V-1.3B) and World-R1-Large (based on Wan2.1-T2V-14B).

Setup: Flow-GRPO on a flow-matched video model

World-R1 uses Flow-GRPO-Fast, a recent adaptation of GRPO for flow-matching diffusion models. Flow-GRPO converts the deterministic ODE sampler into a reverse-time SDE so that the policy is stochastic enough to estimate advantages, and then optimizes the clipped GRPO surrogate with KL regularization toward a reference policy. The Fast variant injects SDE noise only at randomly selected intermediate steps to reduce rollout cost.
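The group-relative advantage and clipped surrogate mentioned above can be sketched in a few lines. This is a minimal illustration of generic GRPO, not the authors' training code: the clip range of 0.2, the reward values, and the function names are assumptions, and the KL term toward the reference policy is left out for brevity.

```python
import math
from statistics import mean, pstdev

def group_advantages(rewards, eps=1e-8):
    """Standardize rewards within one group (same prompt, G samples)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

def clipped_surrogate(logp_new, logp_old, advantage, clip=0.2):
    """PPO-style clipped objective for one sampled denoising step.

    clip=0.2 is an assumed value; the KL penalty is omitted here.
    """
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip, min(1.0 + clip, ratio))
    return min(ratio * advantage, clipped_ratio * advantage)

# Hypothetical rewards for a group of G = 8 videos from one prompt.
rewards = [0.9, 1.4, 1.1, 2.0, 0.7, 1.6, 1.2, 1.5]
advantages = group_advantages(rewards)
```

Because advantages are computed relative to the group mean, no learned value function is needed. Only the sampler has to be stochastic, which is the reason for the ODE-to-SDE conversion.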

Training is done at 832 x 480 resolution on 48 NVIDIA H200 GPUs for the small model and 96 H200 GPUs for the large model, with a GRPO group size of G=8 across 48 parallel groups.

3D reward: analysis by synthesis

The interesting work happens in the reward. For each generated video x, the system reconstructs a 3D Gaussian Splatting (3DGS) representation Φ using Depth Anything 3, which also recovers the estimated camera trajectory Ê. The composite 3D reward is:

R_3D = S_meta + S_recon + S_traj

- S_meta renders Φ from a meta-view, a camera pose offset from the generation trajectory, and asks Qwen3-VL to score the reconstruction from 0 to 9 as an “expert in 3D vision,” penalizing floaters, billboard artifacts, and texture stretching that look fine head-on but collapse off-axis.

- S_recon re-renders the scene along Ê and compares the result with x via 1 - LPIPS.

- S_traj measures the deviation between the intended trajectory E and the recovered trajectory Ê, using L2 distance for translation and geodesic distance for rotation, passed through a negative exponential.
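The trajectory term in the list above can be illustrated with a small sketch. It assumes poses are given as (rotation matrix, translation) pairs, that the per-frame errors are averaged, and that translation and rotation errors are weighted equally. None of these details are stated in the article, and the function names are hypothetical.

```python
import math

def rotation_z(theta):
    """3x3 rotation about the z axis, used here only to build test poses."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def geodesic_angle(r_a, r_b):
    """Angle in radians of the relative rotation r_a^T r_b."""
    # trace(r_a^T r_b) equals the elementwise product summed over all entries.
    trace = sum(r_a[i][j] * r_b[i][j] for i in range(3) for j in range(3))
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))
    return math.acos(cos_angle)

def trajectory_score(target, recovered, w_trans=1.0, w_rot=1.0):
    """Map the mean pose error to (0, 1] with a negative exponential.

    target, recovered: lists of (R, t) poses, one per frame.
    w_trans and w_rot are assumed weights, not values from the paper.
    """
    errors = []
    for (r_t, t_t), (r_r, t_r) in zip(target, recovered):
        trans_err = math.dist(t_t, t_r)        # L2 distance on translation
        rot_err = geodesic_angle(r_t, r_r)     # geodesic distance on rotation
        errors.append(w_trans * trans_err + w_rot * rot_err)
    return math.exp(-sum(errors) / len(errors))

# A push-in path and a recovered path that drifts slightly.
target = [(rotation_z(0.0), (0.0, 0.0, 0.1 * k)) for k in range(5)]
drifted = [(rotation_z(0.05), (0.02, 0.0, 0.1 * k)) for k in range(5)]
score_exact = trajectory_score(target, target)
score_drifted = trajectory_score(target, drifted)
```

A perfect match scores 1, and the score decays smoothly toward 0 as the recovered path departs from the requested one, which keeps the term bounded alongside the other rewards.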

A general aesthetic term R_gen, computed as the average HPSv3 score over the first K frames, is added with a weight of 1 to keep visual quality from breaking down under geometric pressure.
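To make the combination concrete, here is a minimal sketch of how the three 3D scores and the aesthetic term might be summed. The argument names, the default K, and the assumption that the scores arrive already normalized to comparable ranges are illustrative. The article does not say how the 0 to 9 critic score is rescaled.

```python
def aesthetic_term(frame_scores, k):
    """Average per-frame aesthetic score over the first k frames."""
    first_k = frame_scores[:k]
    return sum(first_k) / len(first_k)

def total_reward(view_score, recon_score, traj_score,
                 frame_scores, k=4, weight_gen=1.0):
    """Sum the three 3D terms, then add the weighted aesthetic term.

    k=4 is a placeholder; weight_gen=1.0 follows the weight quoted above.
    """
    r_3d = view_score + recon_score + traj_score
    r_gen = aesthetic_term(frame_scores, k)
    return r_3d + weight_gen * r_gen

# Hypothetical scores; frames beyond the first k do not affect the result.
reward = total_reward(0.5, 0.25, 0.25, [1.0, 3.0, 100.0], k=2)
```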

Implicit camera conditioning via noise warping

Instead of training a CameraCtrl-style adapter, World-R1 follows the Go-with-the-Flow approach: the prompt is parsed for motion tokens (push_in, orbit_left, pull_out, etc.), a sequence of camera extrinsics is generated, projected to 2D optical flow under a fronto-parallel plane assumption, and used to perform discrete noise transport on the initial latent. The transported noise keeps unit variance by normalizing with the tracked density, so the diffusion prior is not perturbed but the latent implicitly encodes the desired trajectory. No new parameters, no architectural change.
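The first half of that pipeline, from motion token to optical flow, can be sketched under a pinhole camera and a single fronto-parallel plane. The token table, the step size, the intrinsics, and the function names below are all hypothetical. Rotational moves such as orbit_left are left out, and `merge_noise` is only a simplified illustration of why normalization preserves unit variance, not the Go-with-the-Flow algorithm itself.

```python
import math
import random

# Hypothetical token table: unit camera translations in the camera frame
# (x right, y down, z forward). Rotational moves are not covered here.
MOTION_TOKENS = {
    "push_in": (0.0, 0.0, 1.0),
    "pull_out": (0.0, 0.0, -1.0),
}

def parse_motion(prompt):
    """Return the known motion tokens that appear in the prompt text."""
    return [token for token in MOTION_TOKENS if token in prompt]

def plane_flow(u, v, translation, depth, focal, cx, cy):
    """Optical flow at pixel (u, v) for a camera translation, assuming
    every pixel lies on one plane at the given depth facing the camera."""
    tx, ty, tz = translation
    # Back-project the pixel onto the plane.
    x = (u - cx) * depth / focal
    y = (v - cy) * depth / focal
    # Re-project after the camera has moved.
    z_new = depth - tz
    u_new = focal * (x - tx) / z_new + cx
    v_new = focal * (y - ty) / z_new + cy
    return u_new - u, v_new - v

def merge_noise(samples):
    """Combine n unit-variance noise values into one unit-variance value.

    A plain average would shrink the variance to 1/n; dividing the sum
    by sqrt(n) keeps it at 1.
    """
    return sum(samples) / math.sqrt(len(samples))

tokens = parse_motion("a stone corridor at dusk, push_in")
step = tuple(0.5 * c for c in MOTION_TOKENS[tokens[0]])
du, dv = plane_flow(516.0, 240.0, step, depth=4.0, focal=500.0,
                    cx=416.0, cy=240.0)
```

For a push-in, the flow points away from the image center and grows with distance from it, which is the familiar zoom pattern. Warping the initial noise along this field is what lets the latent carry the camera path without any adapter weights.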

Pure-text dataset and periodic decoupled training to keep motion alive

The training data is a synthetic pure-text dataset of roughly 3,000 prompts generated by Gemini, organized along the WorldScore camera-trajectory taxonomy (intra-scene, inter-scene, composite, static) and spanning natural landscapes, urban and architectural scenes, micro and still life, fantasy and surrealism, and artistic styles. Using text only decouples 3D learning from the visual biases of any particular video dataset.

Strict 3D rewards have a known failure mode: the model collapses to static scenes and stops generating dynamic content. World-R1 mitigates this with periodic decoupled training. Every 100 steps, R_3D is disabled and the model is tuned using R_gen alone on a subset of about 500 dynamic prompts (waterfalls, crowds, fire, transforming objects). Removing this stage actually boosts reconstruction PSNR but drops the VBench average from 85.21 to 82.64, which is exactly the reward-hacking degradation the research team describes.
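The schedule can be written as a small switch on the step counter. This sketch assumes the decoupled phase lasts a single step and that step 0 is a normal step. The article gives only the period of 100, so the phase length, the labels, and the function name are illustrative.

```python
def training_phase(step, period=100):
    """Choose the reward and prompt pool for a given training step.

    Assumption: one decoupled step every `period` steps.
    """
    decoupled = step > 0 and step % period == 0
    if decoupled:
        # 3D reward off: aesthetic reward only, dynamic prompts only.
        return {"reward": "R_gen", "prompts": "dynamic_subset"}
    return {"reward": "R_3D + R_gen", "prompts": "full_dataset"}

# Count how many of the first 1,000 steps run in decoupled mode.
decoupled_steps = [s for s in range(1, 1001)
                   if training_phase(s)["reward"] == "R_gen"]
```

Keeping the dynamic prompts out of the 3D-rewarded steps matters here: moving water or crowds reconstruct poorly as a static 3DGS scene, so scoring them with the 3D reward would penalize exactly the motion this phase is meant to protect.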

Understanding the results

On the 3DGS-based reconstruction protocol, World-R1-Large reaches 27.67 PSNR / 0.865 SSIM / 0.162 LPIPS, versus 19.76 / 0.629 / 0.405 for Wan2.1-T2V-14B, a PSNR gain of 7.91 dB. World-R1-Small registers a gain of 10.23 dB over its 1.3B base model. On the reconstruction-independent Multi-View Consistency Score (MVCS), borrowed from GeoVideo, World-R1-Large reaches 0.993, ahead of all the 3D-conditioned and camera-control baselines tested (Voyager, ViewCrafter, FlashWorld, ReCamMaster, etc.).
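Since PSNR is logarithmic, it can help to translate the dB gains into error ratios. The snippet below uses the standard definition PSNR = 10 log10(MAX² / MSE), under which a gain of Δ dB corresponds to the MSE shrinking by a factor of 10^(Δ/10). Treat the ratios as a rough guide only, because the reported PSNR values are averages over many videos. The function name is hypothetical.

```python
def mse_ratio_from_psnr_gain(gain_db):
    """Factor by which MSE shrinks for a given PSNR gain in dB."""
    return 10.0 ** (gain_db / 10.0)

# PSNR figures quoted above for the 14B model pair.
large_gain = 27.67 - 19.76
large_ratio = mse_ratio_from_psnr_gain(large_gain)

# Gain quoted above for the 1.3B model pair.
small_ratio = mse_ratio_from_psnr_gain(10.23)
```

Under that reading, the large model's re-rendered views have roughly six times lower mean squared error than the baseline's, and the small model's roughly ten times lower.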

Camera control is competitive with specialized methods: RotErr 1.21, TransErr 1.30, and CamMC 2.95 for the large model, outperforming CamCloneMaster and ReCamMaster despite not being a dedicated camera-control architecture. VBench scores improve over the base Wan 2.1 in aesthetic quality, imaging quality, motion smoothness, and subject consistency, with only a slight decline in background consistency.

Two robustness findings stand out for AI practitioners. A dataset-size sweep shows monotonic gains from 1K → 2K → 3K prompts on both 3D consistency and VBench average, suggesting that the recipe is data-efficient and can scale further. Although training uses short clips, World-R1-Large generalizes to 121-frame generations, raising PSNR from 18.32 to 26.32 relative to the Wan2.1-T2V-14B backbone. A double-blind user study with 25 participants reported win rates of 92% for geometric consistency, 76% for camera control accuracy, and 86% for overall preference versus Wan 2.1.

Key takeaways

- RL replaces architectural surgery to achieve 3D consistency. World-R1 post-trains Wan2.1 with Flow-GRPO-Fast instead of bolting on 3D modules or training on 3D-supervised datasets. The base architecture and inference cost are unchanged.

- The reward is analysis by synthesis. Each generated video is lifted to a 3D Gaussian Splatting representation via Depth Anything 3, and then scored on three axes: meta-view rendering plausibility (according to Qwen3-VL), reconstruction accuracy (1 - LPIPS), and trajectory alignment. These are combined with an HPSv3 aesthetic term to prevent quality collapse.

- Camera control comes from noise warping, not from new parameters. Motion tokens in the prompt are converted to camera extrinsics, projected to 2D optical flow, and used to warp the initial latent noise via discrete Go-with-the-Flow noise transport. No CameraCtrl-style adapter is required.

- Periodic decoupled training prevents reward hacking. Every 100 steps, the 3D reward is suspended and the model is fine-tuned using the aesthetic reward alone over about 500 dynamic prompts. Removing this stage raises PSNR but tanks VBench: the model collapses to static, easy-to-reconstruct output.

- The numbers are large and hold up outside the pipeline. World-R1-Large gains 7.91 dB PSNR over Wan2.1-T2V-14B, generalizes to 121-frame videos, and improves the reconstruction-independent MVCS metric, with an overall-preference win rate of 86% in a 25-participant blind user study.
